In March, a computer achieved what many thought impossible when it won a best of five series against world-class go champion Lee Sedol. The victory by the DeepMind computer was the most significant milestone in artificial intelligence (AI) since Deep Blue beat chess Grandmaster Garry Kasparov in 1997, and once again sparked many predictable headlines about humans being knocked off our IQ perch. The question is, what do such human versus computer matches tell us about AI? Is it the harbinger of a machine-led future or are computers just very good at playing board games? To see how this might play out, we first need to look to the past.

Despite being extremely bad at playing board games as complicated as chess and go for decades, there was almost a sense of inevitability to computers eventually surpassing the abilities of their human creators in this area. When chess-playing computers started being shown in science fiction films and television programs, such as 2001: a Space Odyssey or Star Trek, they were invariably depicted as unbeatable. And that vision is now becoming a reality, with it almost taken for granted that a top-level computer can beat any human being at chess – even up to Grandmaster level.

But there's much more to computers playing boardgames than a wetware versus hardware contest to see who takes home the trophy. Such challenges are also a laboratory for understanding AI, human decision making, the nature of the games played, and the ability to handle extremely complex problems.

The Turk

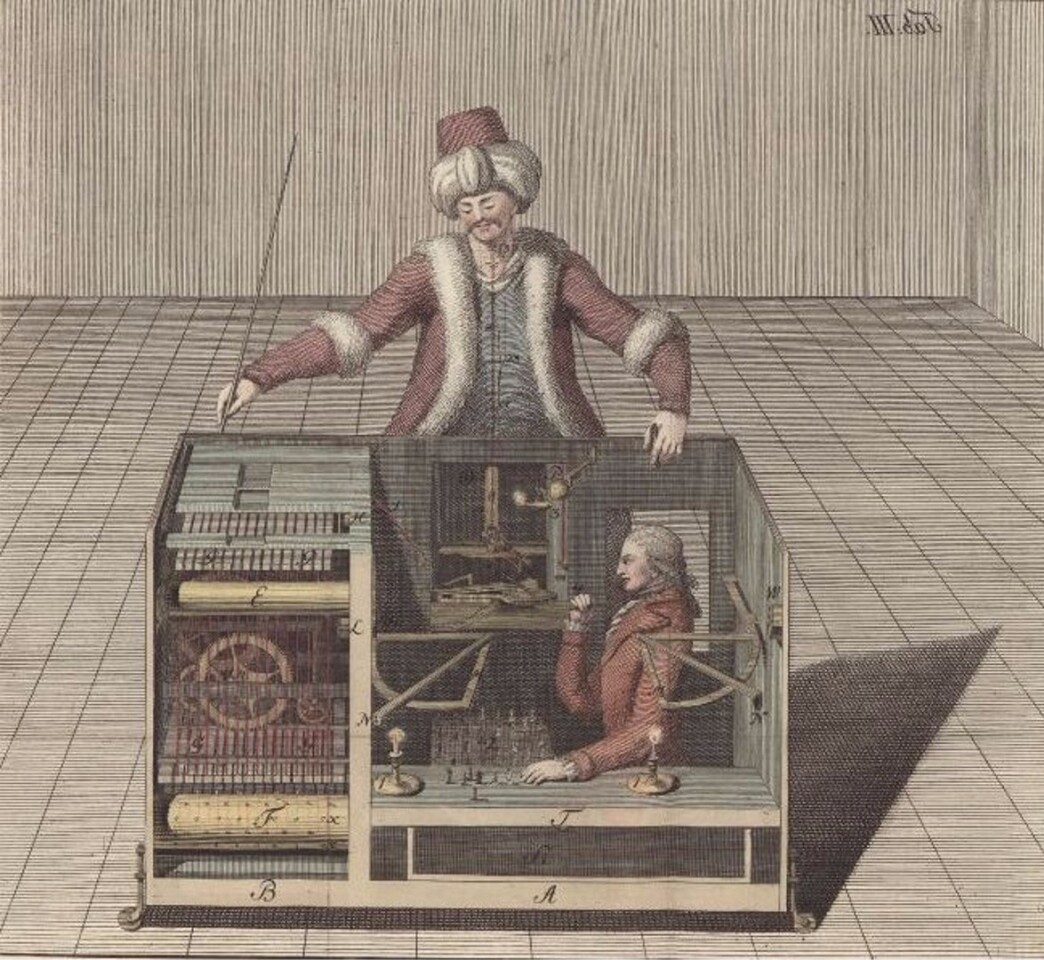

Go was relatively late to computer gaming, but there's history going back over 200 years of attempts to create machines that can best humans at chess. The first attempt and one of the most famous is Wolfgang Kempelen's (or von Kempelen's) Mechanical Turk, which was unveiled in Europe in 1769.

If nothing else, the Turk was an impressive bit of hardware. It consisted of a wooden cabinet filled with clockwork like a giant music box and the wooden figure of a Turk clad in robes seated behind it. The figure's left hand held a long pipe while its right was free to pick up and move the pieces on a board built into the top of the cabinet. In addition, it would rap the counter to declare check or mate, and rap even harder if its opponent made an illegal move.

For years, the Turk toured the capitals of Europe, where it played and won against such eminences as Empress Marie Therese and computer pioneer Charles Babbage, and lost a grueling match against the unofficial world chess champion François-André Danican Philidor. It even played against Napoleon Bonaparte, who was delighted when it scattered the pieces off the board after it caught him cheating.

Not surprisingly, the Turk was a conjuring trick. Hidden inside the cabinet was a very small man who was very good at chess and who used levers to operate the mechanism. When one side of the cabinet was opened to show its workings to the audience, he would squeeze himself into the other.

The Turk ended up in a museum in Philadelphia, where it was destroyed in a fire in 1854, and machine chess became largely a denizen of fiction stories from the likes of Ambrose Bierce until after the Second World War when the invention of the modern computer raised the possibility of creating a machine that could actually play a complicated game like chess.

Early computer chess

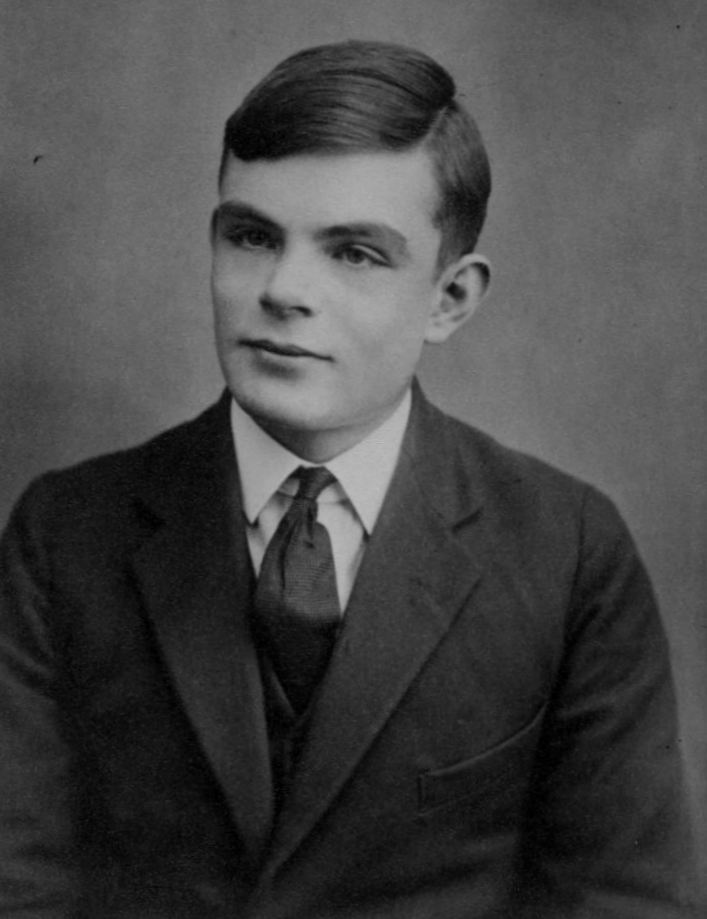

In 1948, Norbert Wiener in his classic book Cybernetics described a chess program and how it might work. Then in 1950, the mathematician Alan Turing wrote the first chess software, however, he never programmed it into a machine. Instead, he and a friend played a game, with Turing standing in for the computer as he followed the algorithm. Each move took 30 minutes, but it worked.

The next year, Dietrich Prinz programmed the Manchester Ferranti computer in Britain to play chess, but it could only manage to do very simple things like solve for mate in two moves. By 1957, Alex Bernstein at MIT programmed an IBM 704 to play the first proper game of chess by a computer.

By the early 1960s, computer chess playing had progressed in baby steps. The first chess computer programs were very simple and ran on machines that would be outclassed by a children's toy today. Until the IBM 704, computers used small boards with only a few squares and either played a simplified version of chess or concentrated on the last couple of moves in a game.

But by 1962, computer chess had taken on a form that modern programmers would recognize, as was depicted in Fritz Leiber's short story of that year, 64-Square Madhouse. Written by one of fantasy's more notorious chess enthusiasts, the story is a satirical science fiction account of the world's first chess tournament with a computer as a contender.

Along with a very good description of how computers actually play chess, it also foreshadowed a lot of the problems that computers in competition would face, such as is the human playing against the machine or its programmer, can the programmer help the computer during a game, what happens when a computer malfunctions or is given false data, and how do you keep a computer from thinking about the game when it shouldn't?

Hexapawn

Also in 1962, Scientific American's mathematical games columnist Martin Gardner invented a simplified game called "hexapawn" and a computer that not only could learn how to play an unbeatable game, but also demystified much about how such a machine works.

Hexapawn is played on a board that only measures three squares by three and the only pieces are six pawns, three white and three black. The object of the game is to either capture all the enemy pieces, move a pawn to the far side, or prevent your opponent from making another move.

It's a simple form of fairy chess, but what's surprising is the Gardner invented for playing it. Instead of a thing of wires and transistors, it consisted of a series of matchboxes. Each matchbox corresponds to a turn in the game and printed on top of each is a diagram showing all the machine's possible moves for that turn. Inside the box are colored beads that represent a particular move. During play, the human makes a move, then selects a bead at random from the appropriate box for the machine. If the machine wins, all the beads are returned. If its loses, the last bead drawn is confiscated. If the last turn box is empty, the bead from the previous move is confiscated.

When it starts out, the hexapawn computer is hopeless and loses almost every time. But as the games proceed and the beads mount up, it gets better and better until it reaches the point where all the human can hope for is a draw.

This sounds like AI at work. The computer learns, it improves, and finally becomes unbeatable, but it's also obvious that this is purely mechanical, and very simple mechanics at that. As roboticist Rodney Brooks says about computers and chess, there's no intentionality. The computer lacks understanding or awareness of what it's doing. It also raises the question of whether even the world champion computers of today are essentially the same.

Another thing about hexapawn is that it illustrates the incredible complexity of chess and the difficulties in designing a machine that can cope with it. The reason why the early computers worked with small boards and simple moves is because chess becomes extremely complex as it scales up.

How computers play

A computer that plays chess, go, or even tic-tac-toe works by looking ahead to predict the consequences of its next move. How effective this is depends on how complex the game is. Tic-tac-toe isn't too hard to program for because there are only 26,830 possible games. But chess has 10123 possible games and it's impossible for any computer to analyze them all. If it had to calculate all possible moves, it would take many times longer than the lifetime of the universe to play a single game.

To keep the computer from having a nervous breakdown on the first move, the programmers have to find ways to simplify the problem. Historically, there have been two approaches. The first is to go for straight AI and try to imitate human decision making. Human players use what is called "ends and means" thinking. That is, the player identifies the goal and tries to figure out how best to achieve it based on a large body of acquired knowledge and experience.

The reasoning is that if there was a way to get this knowledge into the computer, then it should play well. Unfortunately, scientists haven't had much luck leaping this knowledge gap and have found that computers are very bad at emulating human thinking.

The other approach is brute force calculation, which exploits the computer's high speed and infallible memory to analyze potential future moves and discard the bad ones. It does this by weighing each possible move based on things like how much a piece is worth, control of the center of the board, and threats to and from the other player. By carrying this process through a series of moves, the computer builds up a tree of moves from which it can select the best one to play next.

Because the number of possible moves increases exponentially the further one looks ahead, most computers until recently usually only looked ahead about four moves. Even this is a massive number of potential moves to calculate, so in the late 1950s programmers started using "alpha beta pruning." Basically, this means the computer looks at possible moves, gives each one a numerical value and discards all search lines that produce a lower value. This way, the move tree is radically pruned down to a more manageable number as both bad and less-good moves are eliminated.

As chess programs or "engines" developed, other tricks were added, such as the ability to assess positions and other complexities. In addition, bits of AI started to be added by building databases of opening moves, midgames, and endgames for the computer to draw on. The latter was particularly important because toward the end of a game, the fewer pieces make evaluating the best moves that much harder and even today it isn't uncommon to see two computers playing against one another in games that end with each chasing the other around the board like idiots as they repeatedly pass up the chance of a win.

"Idiots" isn't too far off the mark to describe the chess computers of the late 1960s and it shows what Brooks meant by a lack of intentionality. Before he became famous for creating the fantasy world of Westeros, George R. R. Martin made a living as a chess correspondent and in 1972 he published an account of the first all-computer championship in the sci-fi magazine Analog , which was held in New York in 1970 by the Association of Computing Machinery (ACM).

The tournament was won by a program from Northwestern University called Chess 3.0, which ran on a CDC 6400 and took out contenders from Bell Labs, Texas A&M, the University of Graz, Carnegie Mellon University, and the US Navy. It was an historic contest that demonstrated computers still had a long way to go. Despite 20 years of work, computers were still barely capable of playing a legal game – much less winning against any but the greenest and most nervous human player.

Suddenly winning

By the time the 1980s rolled around the picture had changed dramatically. In 1981, the Cray Blitz became the first computer program to be rated a Master in tournament play and the machines progressed in leaps and bounds through the decade. By 1988, Deep Thought beat a Grandmaster and the following year IBM began work on what would become the champion-beating Deep Blue.

There were a number of reasons for this sudden leap in capabilities. Not only were computers getting smaller and more powerful as the microchip revolution gathered steam, but engineers were also building computers with bespoke chips that cranked up the processing speed specifically for playing chess.

Another facet was in the software. Chess computers were now being programmed with heuristics or the ability to learn from past mistakes. They were given rules of thumb – those imprecise but helpful rules that aid in certain situations. They were also taught to recognize patterns and their databases were expanded.

This led to a lot more computer victories, but despite other improvements, the true winning quality was that computers were simply becoming faster and could handle many times more calculations than previously. In a way, they weren't playing better, they were just less bad than the human players.

"The machines do not play good chess," Fred Hapgood wrote in New Scientist in 1982. "In fact, they play terrible chess. In a single game, a computer will make enough mistakes to illustrate a whole textbook of what not to do. Their play is clumsy, inefficient, diffuse, and just plain ugly."

He then went on to say that the reason the computers started winning was because humans make far more staggering blunders on a regular basis than they realize and that the programmers had taught the computers to seek out and exploit this fact. This exploitation is the result of the ability of the computer to analyze games at lightning speed.

Silicon champions

By the turn of the 21st century, chess computers had gone from a curious mixture of public awe and private joke to the most formidable chess players in history. In 1996, reigning world champion Garry Kasparov took on IBM's Deep Blue in a one-on-one match in Philadelphia. Equipped with 200 processors and calculating 50 billion positions in three minutes, the computer still lost to Kasparov four games to two.

Then in 1997 an improved Deep Blue calculating 200 million moves a second using 30 IBM-RS-6000 SP processors beat Kasparov in a rematch in New York City. Deep Blue's inventors walked away with the US$100,000 Fredkin Award and shockwaves reverberated through the chess world as news stories predictably raised fears that our time at the top of the intellectual tree was coming to an end.

Then in March this year, a computer knocked down the champion of a game so complicated that many people thought it would be impossible for a computer to master – go. Google's DeepMind computer running AlphaGo beat South Korea's Lee Sedol, one of the world's best go players.

This win was notable because, though go is in many ways a simpler game than chess, it is more difficult for a computer to handle because it is played on a 19 x 19 board, whereas chess is played on an 8 x 8 board. This means that the number of possible moves is mind boggling with 1010∧48 games as opposed to chess's 10123 and to play at championship level requires a much more intuitive approach than chess as go masters "feel" their way around the board.

But what are the implications of these victories? Is this, as Newsweek called the Deep Blue/Kasparov match, "The Brain's Last Stand?" Is the ability of computers to beat humans at chess, go, and even Jeopardy a demonstration of artificial or even superhuman intelligence?

In one sense, no. Even with the development in recent years of more advanced game-playing programs, including those that incorporate learning abilities and neural networks, they are still strictly mechanical with fully understood processes that rely on brute force calculation to win.

This reinforces just how elusive artificial intelligence is. Chess computers live in a bubble with no ability to perceive the outside world. This means that they cannot comprehend, much less play, a psychological game, which is something many human players rely upon to gain an advantage.

Indeed, playing computers has been described as like playing against "a Martian" because their way of playing chess is so different from a human's – a point that has been offered to explain why they win. The humans are playing human chess, but the computers are playing computer chess.

Why make computers play games?

So is computer chess or game playing just a stunt? A sort of climb Everest on a pogo stick affair that may grab some headlines and impress the masses but doesn't really amount to anything? According to IBM, the answer is a solid "no." Computer scientists regard games like chess as a perfect laboratory for developing computers. Chess is completely logical with rigidly defined moves and rules and has been intensely analyzed by players, strategists, and mathematicians for centuries. Chess player performance is also measurable and quantifiable.

With all this, chess is an excellent way to study the limits of parallel processing and other aspects of computer design and programming. In the field of AI, it also acts as an important milestone. IBM says that if a computer can't be built along the lines of AI that can handle a limited, defined world like chess, then real-world expert systems like Watson that are intended to deal with a wider array of subjects can't be relied upon.

Computers also have implications for the chess enthusiast as well. Is chess solvable? Can a perfect game ever be found that will always guarantee at least a draw? Computers can help to find out if such a game exists or if it is demonstrably impossible. In addition, computers have gone from opponents to servants for chess masters.

Grandmasters now spar with computers to prepare for matches and they now have the previously unheard of luxury of always having an opponent of equal ability to try new ideas on at a moment's notice. Garry Kasparov has also pointed out that computers put so much chess information and experience in the hands of young people that Grandmasters are getting younger and younger.

But what would a truly intelligent chess computer of the future look like? One possible answer is one that not only plays at the level of a world champion like Bobby Fischer or Alexander Alekhine, but also plays like them. Such a "Fisher test" wouldn't mean that the computer would favor the King's Indian Defence or opt for aggressive endgames. Instead, it would measure how well it could gain advantage over an opponent by squeaking its chair, threatening to smoke a cigar, or accusing its opponent of beaming microwaves at its brain. Extra points would be added for complaining loudly about its hotel bill after the match.

In other words, true chess master behavior.