Currently, Google’s “Search by Image” function lets you search the internet for information on something if you already have an image of that thing. Researchers at Carnegie Mellon University are also developing a system that allows computers to match up users’ drawings of objects with photographs of those same items – the drawings have to be reasonably good, though. Now, however, a team from Rhode Island’s Brown University and the Technical University of Berlin has created software that analyzes users’ crude, cartoony sketches and figures out what they’re trying to draw.

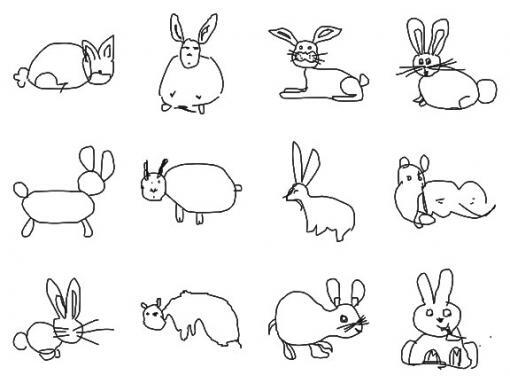

To develop the system, the researchers started with a database made up of 250 categories of annotated photographs. Then, using Amazon’s Mechanical Turk crowd-sourcing service, they hired people to make rough sketches of objects from each of those categories. The resulting 20,000 sketches were then run through recognition and machine learning algorithms, to teach the system which kinds of sketches belong to which categories. After seeing numerous examples of how various people drew a rabbit, for instance, it would learn that certain combinations of shapes usually meant “rabbit.”
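The general idea of learning a category from many labeled examples can be illustrated with a toy sketch. The article does not describe the researchers' actual algorithm, so the code below swaps in a simple nearest-centroid classifier as a stand-in. The two-number feature vectors (hypothetically "ear length" and "body roundness"), the category names, and the function names `train_centroids` and `classify` are all assumptions made for illustration.

```python
import math
from collections import defaultdict


def train_centroids(examples):
    """Average the feature vectors of each label into one centroid per label.

    `examples` is an iterable of (label, feature_vector) pairs.
    """
    sums = {}
    counts = defaultdict(int)
    for label, vec in examples:
        if label not in sums:
            sums[label] = [0.0] * len(vec)
        for i, value in enumerate(vec):
            sums[label][i] += value
        counts[label] += 1
    return {
        label: [s / counts[label] for s in total]
        for label, total in sums.items()
    }


def classify(centroids, vec):
    """Return the label whose centroid is closest (Euclidean) to `vec`."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vec))


# Hypothetical hand-made features: (ear_length, body_roundness).
# A real system would extract features from the sketch strokes instead.
training = [
    ("rabbit", [0.9, 0.6]),
    ("rabbit", [0.8, 0.7]),
    ("rabbit", [1.0, 0.5]),
    ("teapot", [0.1, 0.9]),
    ("teapot", [0.2, 0.8]),
]

centroids = train_centroids(training)
guess = classify(centroids, [0.85, 0.55])
print(guess)
```

With more categories and more example sketches per category, the same pattern applies: each new drawing is compared against what the system has learned for every category, and the closest match becomes the guess.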

Finally, an interface was designed, in which users draw images using a stylus, and the system attempts to guess what they’re drawing as they’re drawing it – not unlike playing Pictionary. So far, as long as the object belongs to one of the 250 categories, the system is able to correctly identify it about 56 percent of the time. When humans were asked to identify the items being sketched, they had a success rate of about 73 percent. Certainly better, but not wildly so.
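To put those two figures in context, it can help to compare them with blind guessing. The short calculation below assumes that a random guesser picks uniformly among the 250 categories, which is an assumption of this illustration and not something stated by the researchers. The variable names are likewise illustrative.

```python
NUM_CATEGORIES = 250      # categories the system knows, per the article
system_accuracy = 0.56    # system's reported success rate
human_accuracy = 0.73     # humans' reported success rate

# Assumption: a blind guesser picks one of the categories uniformly at random.
chance_accuracy = 1 / NUM_CATEGORIES

# How far above chance the system is, and how close it comes to humans.
system_vs_chance = system_accuracy / chance_accuracy
system_vs_human = system_accuracy / human_accuracy
gap = human_accuracy - system_accuracy

print(f"chance: {chance_accuracy:.1%}")
print(f"system is about {system_vs_chance:.0f}x better than chance")
print(f"system reaches about {system_vs_human:.0%} of human accuracy")
print(f"gap to humans: {gap * 100:.0f} percentage points")
```

Under that assumption, random guessing would succeed only 0.4 percent of the time, so the system's 56 percent is roughly 140 times better than chance while trailing humans by 17 percentage points.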

In the future, it is hoped that the technology might be used for applications like sketch-based internet searches. If you remembered some mysterious object that you saw while on vacation, for instance, you could draw a picture of it to find out what it was ... even if you weren’t a very good artist. You could also perform searches that weren’t constrained by language barriers, unlike text-based searches.

Before that can happen, however, the system needs to expand far beyond its current 250 categories. One possible way of doing that, the researchers suggest, is through an online game. That game could involve one player being challenged to draw a certain item, which other players have to correctly identify. Along the way, a plethora of sketches of all sorts of objects would be accumulated, and used to build the system’s library.

The system can be seen in use in the video below.

Source: Brown University