Fans of the classic 1982 science fiction movie Blade Runner will remember the ESPER machine that allows Deckard to zoom in and see around corners in a two-dimensional photograph. While such technology is still some way off, researchers in MIT’s Media Lab have developed a system using a femtosecond laser that can reproduce low-resolution 3D images of objects that lie outside a camera’s line of sight.

The experimental setup designed by the MIT researchers gained attention last December when they released video of it capturing a burst of light traveling through a plastic bottle. But as amazing as that capability is, the team says the system was developed for an even more amazing ability: literally seeing around corners.

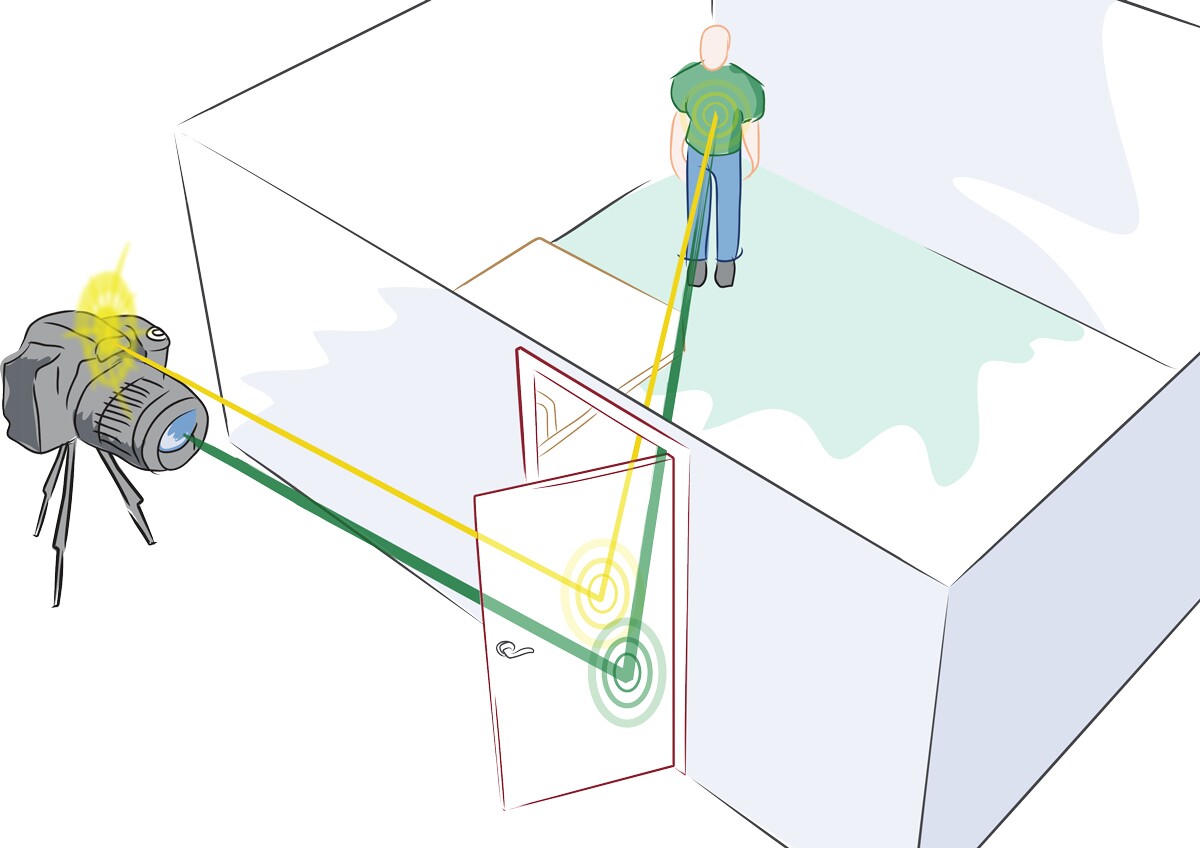

It works by emitting a burst of light from a femtosecond laser that reflects off visible surfaces – such as an opaque wall – onto objects that are hidden from the camera’s direct view. The light then bounces off the object before ultimately making its way back to a detector. This process is repeated a number of times with the laser targeted at different areas of the reflecting surface.

The bursts of light from the femtosecond laser are so short that their duration is measured in quadrillionths of a second, while the detector can take measurements every few picoseconds – or trillionths of a second. It is the extremely short duration of the light bursts that allows the system to calculate how far they’ve traveled by measuring the time it takes them to reach the detector.
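To give a feel for the scales involved, the arithmetic behind this time-of-flight idea can be sketched in a few lines of Python. This is only an illustration of the distance = speed × time relationship, not the researchers' code; the function name `path_length_m` is made up for this example, and light is assumed to travel at its vacuum speed.

```python
# Speed of light in vacuum, in metres per second (exact SI value).
C = 299_792_458.0


def path_length_m(time_of_flight_s: float) -> float:
    """Total distance a light pulse covers in the given travel time.

    Hypothetical helper for illustration: it assumes the pulse moves at
    the vacuum speed of light along its whole path.
    """
    return C * time_of_flight_s


if __name__ == "__main__":
    # How far light moves during one picosecond (a trillionth of a second).
    print(path_length_m(1e-12) * 1000, "mm per picosecond")
    # Total path covered by a pulse that arrives 10 nanoseconds after firing.
    print(path_length_m(10e-9), "m in 10 nanoseconds")
```

Light covers roughly 0.3 mm in a picosecond, so a detector that samples every few picoseconds can tell apart path lengths that differ by around a millimeter.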

The detector also measures the returning light at different angles, and by comparing the times at which the returning light hits different parts of the detector, the system constructs an image of what lies around the corner.
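One way to picture how arrival times can pin down a hidden object is a toy "voting" reconstruction, sketched below. It is a simplified 2D illustration under several assumptions and not the MIT team's actual algorithm: the wall is the line y = 0, the hidden object is a single point, the known laser-to-wall and wall-to-camera legs have already been subtracted, and all names (`simulate_times`, `backproject`, and so on) are hypothetical. Each timing measurement says the hidden point lies somewhere on an ellipse whose foci are the laser spot and the observed wall spot, and the candidate position consistent with the most measurements wins.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def path(a, p, b):
    """Length of the two-leg path a -> p -> b, in metres."""
    return math.dist(a, p) + math.dist(p, b)


def simulate_times(hidden, laser_spots, detector_spots):
    """Ideal arrival time for every (laser spot, observed wall spot) pair."""
    return {
        (l, d): path(l, hidden, d) / C
        for l in laser_spots
        for d in detector_spots
    }


def backproject(times, xs, ys, tol_m):
    """Return the grid cell consistent with the most timing measurements.

    A cell gets one vote per measurement whose measured path length
    (C * t) matches the path through that cell to within tol_m metres.
    """
    best, best_votes = None, -1
    for x in xs:
        for y in ys:
            votes = sum(
                1
                for (l, d), t in times.items()
                if abs(path(l, (x, y), d) - C * t) <= tol_m
            )
            if votes > best_votes:
                best, best_votes = (x, y), votes
    return best, best_votes


# Toy scene: wall along y = 0, one hidden point half a metre behind it.
hidden = (0.30, 0.50)
laser_spots = [(-0.2, 0.0), (0.0, 0.0), (0.2, 0.0)]
detector_spots = [(-0.1, 0.0), (0.1, 0.0), (0.4, 0.0)]
times = simulate_times(hidden, laser_spots, detector_spots)

# Candidate positions on a 5 cm grid on the hidden side of the wall.
xs = [round(i * 0.05, 2) for i in range(-10, 11)]
ys = [round(j * 0.05, 2) for j in range(1, 21)]
estimate, votes = backproject(times, xs, ys, tol_m=0.005)
```

A real system has to cope with noise, extended surfaces and many overlapping returns, which is part of why the reconstructed images come out blurry; the sketch only shows why aiming the laser at several wall positions helps narrow down where the hidden object is.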

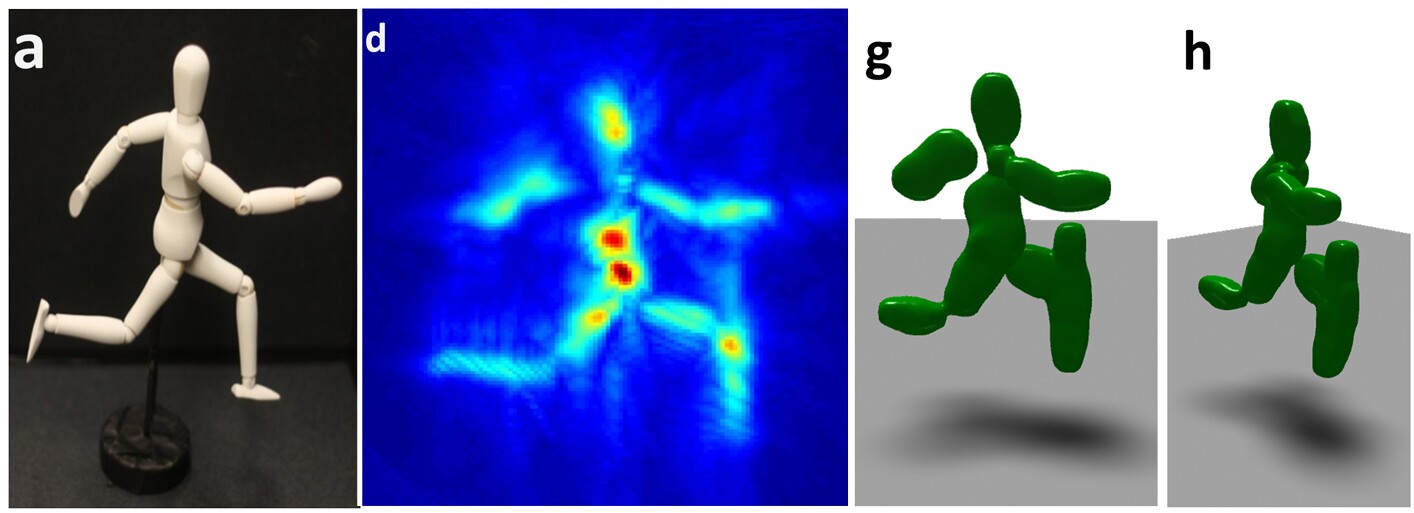

While the resultant 3D images are on the blurry side, the objects they portray are easily identifiable. The team is hoping to improve the quality of the images and to enable the system to deal with more cluttered visual scenes.

While the system won’t be put to use hunting down rogue replicants, the MIT researchers say it could lead to imaging systems that allow emergency responders to check out potentially dangerous environments, vehicle navigation systems that can negotiate blind turns, or endoscopes that can see around obstacles within the body, amongst other applications.

The team’s research is detailed in the journal Nature Communications. Nature also has a video explaining the system.

Source: MIT