After more than a decade of development, researchers at UC San Francisco have demonstrated, for the first time, a brain implant that turns neural activity into full words. The first participant in the trial, a paralyzed man in his 30s, can now communicate with a vocabulary of 50 words simply by thinking about vocalizing them.

The innovative new technology is unlike prior brain-computer interfaces designed to help those with paralysis speak. Instead of having a person direct a cursor around a screen to spell out words, this device tracks brain activity in the regions controlling the vocal system. So although paralyzed subjects may lose the ability to physically move their mouths and vocalize words, their brains can still try to send those unique signals to places like the jaw and larynx.

"With speech, we normally communicate information at a very high rate, up to 150 or 200 words per minute," says senior author Edward Chang. "Going straight to words, as we're doing here, has great advantages because it's closer to how we normally speak."

The new study, published in the New England Journal of Medicine, describes the first person to test this experimental implant. The subject suffered a stroke 15 years ago and can only communicate by typing out words on a screen with a pointer attached to a baseball cap.

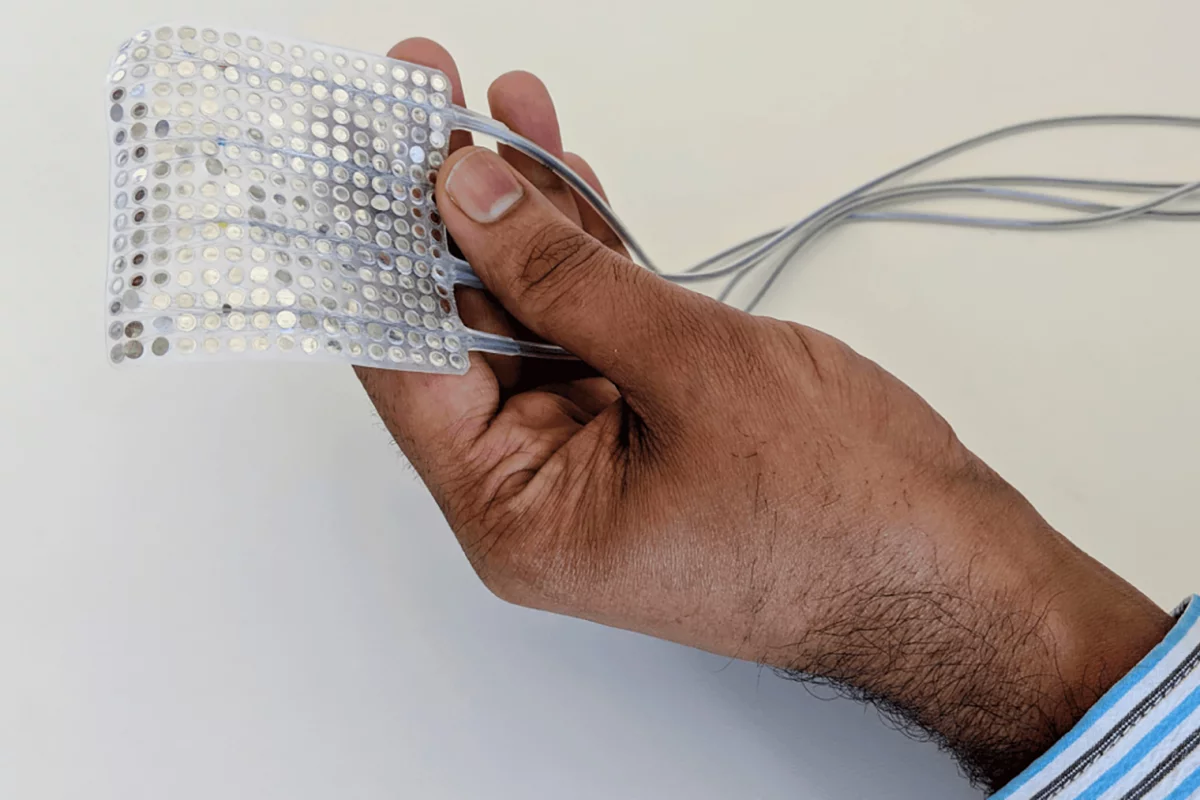

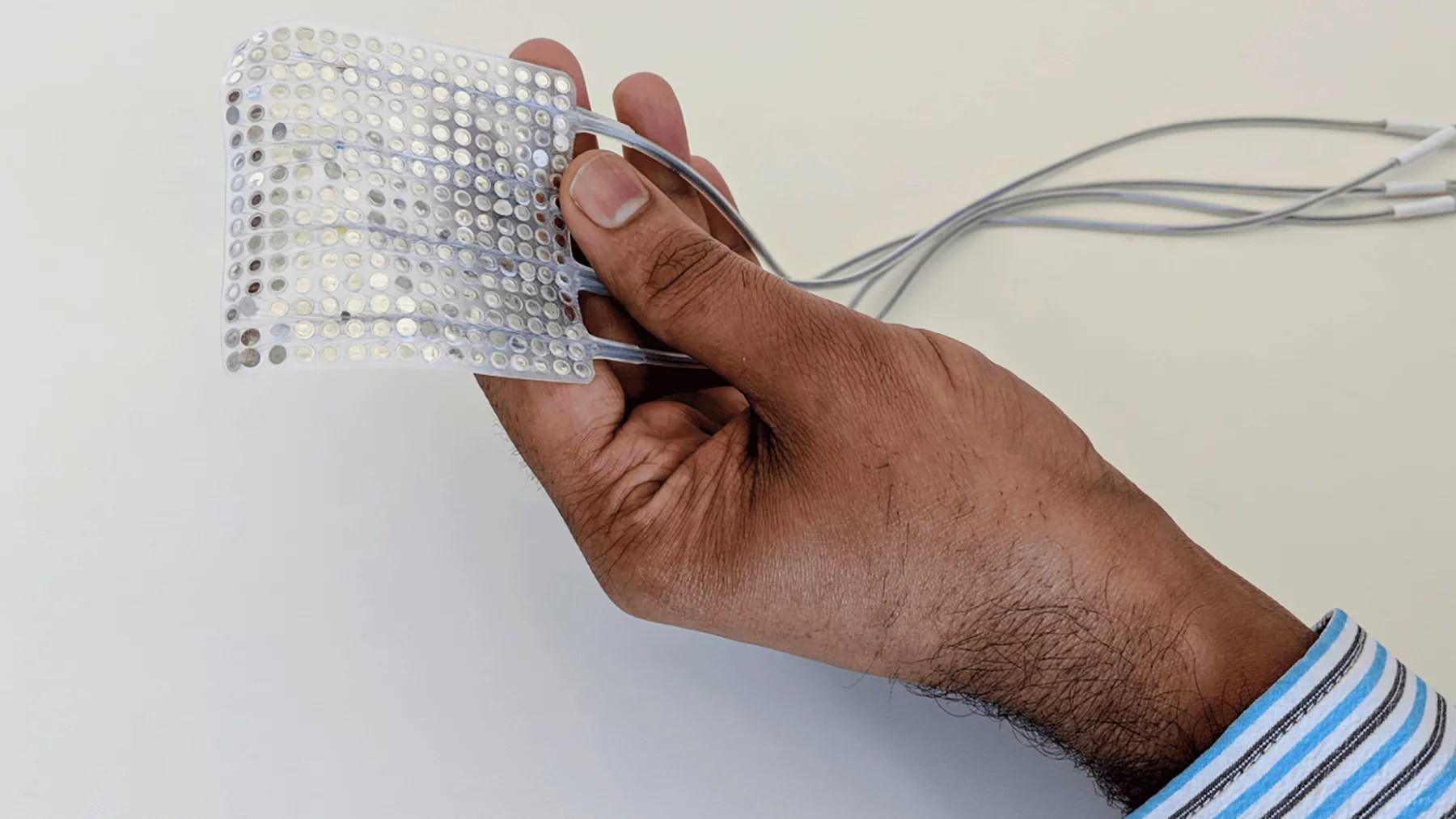

A high-density electrode array was surgically implanted over the subject’s speech motor cortex. Then, over several months, the researchers recorded his brain activity and correlated particular signals with a 50-word vocabulary. Custom neural network models were then trained to recognize the brain activity and identify the words in real time as they are being thought.

In these early tests, the man responded to queries from the researchers with full sentences. Questions such as “Would you like some water?” were followed by responses such as “No, I am not thirsty.”

"We were thrilled to see the accurate decoding of a variety of meaningful sentences," says David Moses, lead author on the new study. "We've shown that it is actually possible to facilitate communication in this way and that it has potential for use in conversational settings."

This initial proof-of-concept test didn’t offer lightning-fast results. The implant can currently decode about 18 words per minute, and the median accuracy is only 75 percent, so there is plenty of room for improvement.

Moses says algorithmic improvements can enhance the accuracy and speed of the device. A kind of “auto-correct” function was incorporated into this early test, showing how the system can be improved by the computer learning and predicting what a person means to say.
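To give a rough sense of how an “auto-correct” step of this kind can work, here is a minimal Python sketch. It is not the researchers' implementation, which this article does not describe in detail. It assumes a word classifier that outputs a probability for each vocabulary word at each attempt, plus a simple table of how likely one word is to follow another, and it uses Viterbi decoding to pick the most plausible sentence overall. The function name, the four-word vocabulary, and every probability below are invented for illustration.

```python
import math

def viterbi_decode(word_probs, bigram, vocab, floor=1e-6):
    """Return the most likely word sequence.

    word_probs: list of dicts, one per attempted word, mapping
                word -> classifier probability (hypothetical values).
    bigram:     dict mapping (previous_word, word) -> probability that
                `word` follows `previous_word` (hypothetical values).
    floor:      small probability used for anything not listed.
    """
    # best[w] = (log score of the best path ending in w, that path)
    best = {w: (math.log(word_probs[0].get(w, floor)), [w]) for w in vocab}
    for probs in word_probs[1:]:
        new_best = {}
        for w in vocab:
            emit = math.log(probs.get(w, floor))
            # Pick the previous word that gives the highest combined score.
            score, path = max(
                (best[p][0] + math.log(bigram.get((p, w), floor)) + emit,
                 best[p][1])
                for p in vocab
            )
            new_best[w] = (score, path + [w])
        best = new_best
    return max(best.values())[1]

# Toy data: the classifier slightly prefers the wrong second word ("not").
vocab = ["I", "am", "not", "thirsty"]
word_probs = [
    {"I": 0.9, "am": 0.1},
    {"am": 0.4, "not": 0.6},
    {"thirsty": 0.8},
]
bigram = {
    ("I", "am"): 0.9,
    ("I", "not"): 0.01,
    ("am", "not"): 0.4,
    ("am", "thirsty"): 0.5,
    ("not", "thirsty"): 0.5,
}

# Taking the classifier's top guess at each step, with no language model.
greedy = [max(p, key=p.get) for p in word_probs]
# Combining the classifier with the word-order table.
decoded = viterbi_decode(word_probs, bigram, vocab)

print(greedy)   # ['I', 'not', 'thirsty']
print(decoded)  # ['I', 'am', 'thirsty']
```

In this toy case the classifier alone produces “I not thirsty,” while the combined decoder recovers “I am thirsty” because “I am” is far more likely than “I not” in the word-order table. A limited vocabulary makes this kind of correction easier, since there are fewer plausible sentences to choose between.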

The trial is set to be expanded with the inclusion of more participants. The researchers are also looking to build on the system’s vocabulary and speed up the rate of decoded speech.

"To our knowledge, this is the first successful demonstration of direct decoding of full words from the brain activity of someone who is paralyzed and cannot speak," says Chang. "It shows strong promise to restore communication by tapping into the brain's natural speech machinery."

The new study was published in the New England Journal of Medicine.

Source: UCSF