The 33rd annual Space Symposium wrapped up recently in Colorado and New Atlas was on hand to check out some of the exhibits and talks. Amidst the rocket models, jet engines and satellites, we found a quiet corner to sit down with Scott Fouse, the vice president of Lockheed Martin's Advanced Technology Center. For our One Big Question series, we wanted to get his thoughts on what reaching for the stars will look like in the future, so we asked him: What will space exploration look like in 2040?

Oh, and he had so much to say that this time we broke our usual one-question format and threw in a few follow-ups. We didn't think you would mind.

Here's an edited version of our interview.

One of the things we're doing right now is starting a collaboration with Breakthrough Initiatives, led by Pete Worden, the former director of NASA's Ames Research Center. They do these kinds of far-out projects, and one they're doing is called Breakthrough Starshot. The idea is to visit the closest star system, Alpha Centauri.

To do that, they're developing a single-chip spacecraft attached to a light sail. The concept is that a satellite in orbit will deploy one of these light sails, they'll turn on the laser and hit it for two minutes, and that will accelerate it to about 20 percent of the speed of light. At that point it just coasts. There's a lot of very cool technology in how you build that single-chip spacecraft, and the light sail itself is very interesting. It can be more than just a sail: it can be an imaging sensor, or it could be the aperture for communicating. So that's a pretty far-off concept. From talking with the team, they see it as a 20- to 30-year vision, so I think it's definitely in the ballpark of 2040.
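To get a feel for how violent a two-minute push is, the average acceleration is simply the target speed divided by the burn time. The sketch below is back-of-the-envelope arithmetic only, assuming a constant push from rest and a target of about 20 percent of light speed (the cruise speed Breakthrough Starshot itself describes); the function names are hypothetical helpers, not anything from the project. With those inputs it works out to roughly 500 km/s², or around 51,000 g, and a Lorentz factor of about 1.02 confirms that ignoring relativity is a fair approximation at that speed.

```python
# Back-of-the-envelope arithmetic for a laser-pushed light sail.
# Assumptions (not from the interview): a constant push starting from rest,
# a non-relativistic treatment, and caller-chosen illustrative inputs.
import math

C = 299_792_458.0  # speed of light, m/s (exact by SI definition)
G0 = 9.80665       # standard gravity, m/s^2


def average_acceleration(fraction_of_c: float, burn_seconds: float) -> float:
    """Average acceleration (m/s^2) needed to reach fraction_of_c * C
    in burn_seconds, starting from rest and ignoring relativity."""
    return fraction_of_c * C / burn_seconds


def lorentz_factor(fraction_of_c: float) -> float:
    """gamma = 1 / sqrt(1 - beta^2); a value close to 1 means the
    non-relativistic estimate above is a fair approximation."""
    return 1.0 / math.sqrt(1.0 - fraction_of_c ** 2)


if __name__ == "__main__":
    beta, burn = 0.2, 120.0  # ~20% of light speed, two-minute push
    a = average_acceleration(beta, burn)
    print(f"average acceleration: {a:,.0f} m/s^2 (~{a / G0:,.0f} g)")
    print(f"Lorentz factor at {beta}c: {lorentz_factor(beta):.3f}")
```

Changing `beta` shows how quickly the relativistic correction grows: at higher fractions of light speed the simple `v / t` estimate stops being trustworthy.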

A little closer to home, watch what's happening in our daily lives: it's quite interesting how traditional computers are disappearing and becoming embedded in the fabric of everything around us. Everything is a computer, so the times we sit with a computer in front of us are diminishing; computers are just always around. In a very similar way, you can think about what today's traditional satellite will look like tomorrow. Right now we build the structure and put all these boxes inside, but we think that at some point all those boxes will become embedded in the structure itself.

So a few years ago we did a concept project called PrintSat, where we actually developed a robotic cluster of additive-manufacturing tools and demonstrated the concept of printing a satellite, with embedded electronics and other components built right into the structure. It was a very early concept, and there are still lots of challenges, because we don't yet have the systems-engineering tools that would let us do that reliably. But I have no doubt those will be there by 2040.

What's the benefit of printing spacecraft?

Part of it is that they'd be significantly lighter, because in space it's all about the weight. Plus, think about the cost savings in how we manufacture. Today it takes us two to three years to build a fairly capable satellite. I might be able to print one in something on the order of a couple of weeks or a month. Another significant benefit is that you wouldn't need people to assemble it. Whenever you have this kind of touch labor, there's potential for mistakes.

What would some of these sensors look like?

One of the things we've been working on in our lab is a concept we call SPIDER (Segmented Planar Imaging Detector for Electro-optical Reconnaissance). Think about the satellites for which we build optical systems and sensors: to form an image, you've got to have some kind of lens or mirror. The quality of the image is directly linked to the quality of that mirror, which is also a long-lead item, meaning it takes a long time to produce. So I want to do away with the lens and build a truly flat optical sensor. The whole image-formation process is handled by integrated photonics that sit behind the flat face of the sensor. We've actually built a DARPA-funded prototype, though it's very early. But I honestly believe it will be there by 2040.

Go back to the concept of printing everything into the structure, and you basically get a sensor with all of the computation behind it. At Lockheed we do Earth science, where we're staring at the Earth, but we also do heliophysics, where we're staring at the sun. Such an optical sensor would be perfect for that. And because 360-degree viewing would be possible, you could also look around to make sure there are no other satellites or objects nearby, and take a kind of defensive posture.

It's actually fairly interesting, because it uses some of the same principles that go all the way back to early radio astronomy, where images were made by combining the signals from multiple radio telescopes. That's what this is doing: interferometric imaging. So it's using tried-and-true principles of image formation, but at a very interesting scale.
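The radio-astronomy principle can be illustrated with a toy one-dimensional model of aperture synthesis: each pair of apertures (a baseline) samples one Fourier component, or "visibility," of the scene's brightness, and an inverse Fourier transform of the sampled components gives back an image. This is a simplified sketch under stated assumptions, not how SPIDER's photonic circuits actually work: the sky is a short list of pixel brightnesses, each integer spatial frequency stands in for one baseline length divided by the wavelength, and the function names are hypothetical.

```python
# Toy 1-D aperture synthesis. Each telescope pair (baseline) samples one
# Fourier component ("visibility") of the sky brightness; an inverse
# transform of the sampled components produces the image.
# Illustration only: real interferometers measure visibilities physically,
# they do not compute them from a known sky as this toy does.
import cmath
from typing import Dict, Iterable, List


def visibilities(sky: List[float], freqs: Iterable[int]) -> Dict[int, complex]:
    """Discrete Fourier components of the sky at the given integer spatial
    frequencies (each one standing in for baseline length / wavelength)."""
    n = len(sky)
    return {
        k: sum(s * cmath.exp(-2j * cmath.pi * k * x / n) for x, s in enumerate(sky))
        for k in freqs
    }


def dirty_image(vis: Dict[int, complex], n: int) -> List[float]:
    """Inverse DFT using only the sampled frequencies. Missing baselines are
    treated as zero, which blurs the result (the 'dirty image')."""
    return [
        (sum(v * cmath.exp(2j * cmath.pi * k * x / n) for k, v in vis.items()) / n).real
        for x in range(n)
    ]


if __name__ == "__main__":
    sky = [0, 0, 1, 0, 0, 0, 3, 0]              # two point sources
    full = visibilities(sky, range(len(sky)))   # every baseline present
    sparse = visibilities(sky, [0, 1, 7])       # only a few baselines
    print([round(p, 3) for p in dirty_image(full, len(sky))])
    print([round(p, 3) for p in dirty_image(sparse, len(sky))])
```

With every spatial frequency sampled, the reconstruction matches the original sky; with only a few baselines it becomes a blurred approximation. That is why interferometric imagers want many baselines of different lengths.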

Bargain lasers

Another thing I fully expect to happen is the arrival of very low-cost, highly capable lasers. I think we'll see more of that. Not only will they allow satellites to communicate with each other, but maybe more importantly, they will let us know the relative positions of satellites very precisely. For optical sensors, that would let us combine multiple telescopes into a much larger effective aperture and get a very high-fidelity image.

Right now, if I have a number of satellites with one-meter apertures, I could probably form an image as if I had a 100-meter telescope. But to do that, you need to know their relative positions very precisely. And that's another concept we're starting to work on.
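The gain from a 1-meter to a 100-meter effective aperture can be put in rough numbers with the diffraction limit, where angular resolution scales as wavelength divided by aperture size. This is an illustrative sketch with assumptions that are not from the interview: visible light at 550 nm, a 500 km orbit, the Rayleigh criterion (1.22 λ/D) applied to both cases (an approximation for a sparse array, whose resolution is set by its longest baseline), and λ/10 as an illustrative rule of thumb for how well relative positions must be known. Under those assumptions a single 1 m aperture resolves about 34 cm on the ground, the 100 m synthesized aperture about a hundredth of that, and the position tolerance comes out at tens of nanometers.

```python
# Rough diffraction-limit comparison: one 1 m aperture versus a synthesized
# aperture whose longest baseline is 100 m.
# Assumptions (not from the interview): 550 nm light, a 500 km orbit, the
# Rayleigh criterion for both cases, and lambda/10 as an illustrative
# tolerance on the satellites' relative positions.

def angular_resolution(wavelength_m: float, aperture_m: float) -> float:
    """Rayleigh-criterion angular resolution, in radians."""
    return 1.22 * wavelength_m / aperture_m


def ground_resolution(wavelength_m: float, aperture_m: float, altitude_m: float) -> float:
    """Smallest resolvable ground separation (m) looking straight down from
    altitude_m, using the small-angle approximation."""
    return angular_resolution(wavelength_m, aperture_m) * altitude_m


if __name__ == "__main__":
    wavelength, altitude = 550e-9, 500e3
    for d in (1.0, 100.0):
        res_cm = ground_resolution(wavelength, d, altitude) * 100
        print(f"D = {d:>5.0f} m -> ~{res_cm:.2f} cm on the ground")
    print(f"relative-position tolerance ~ {wavelength / 10 * 1e9:.0f} nm")
```

Note that a sparse array of 1 m apertures sharpens resolution but still collects only as much light as its individual mirrors combined, far less than a filled 100 m mirror would.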

Satellite service calls

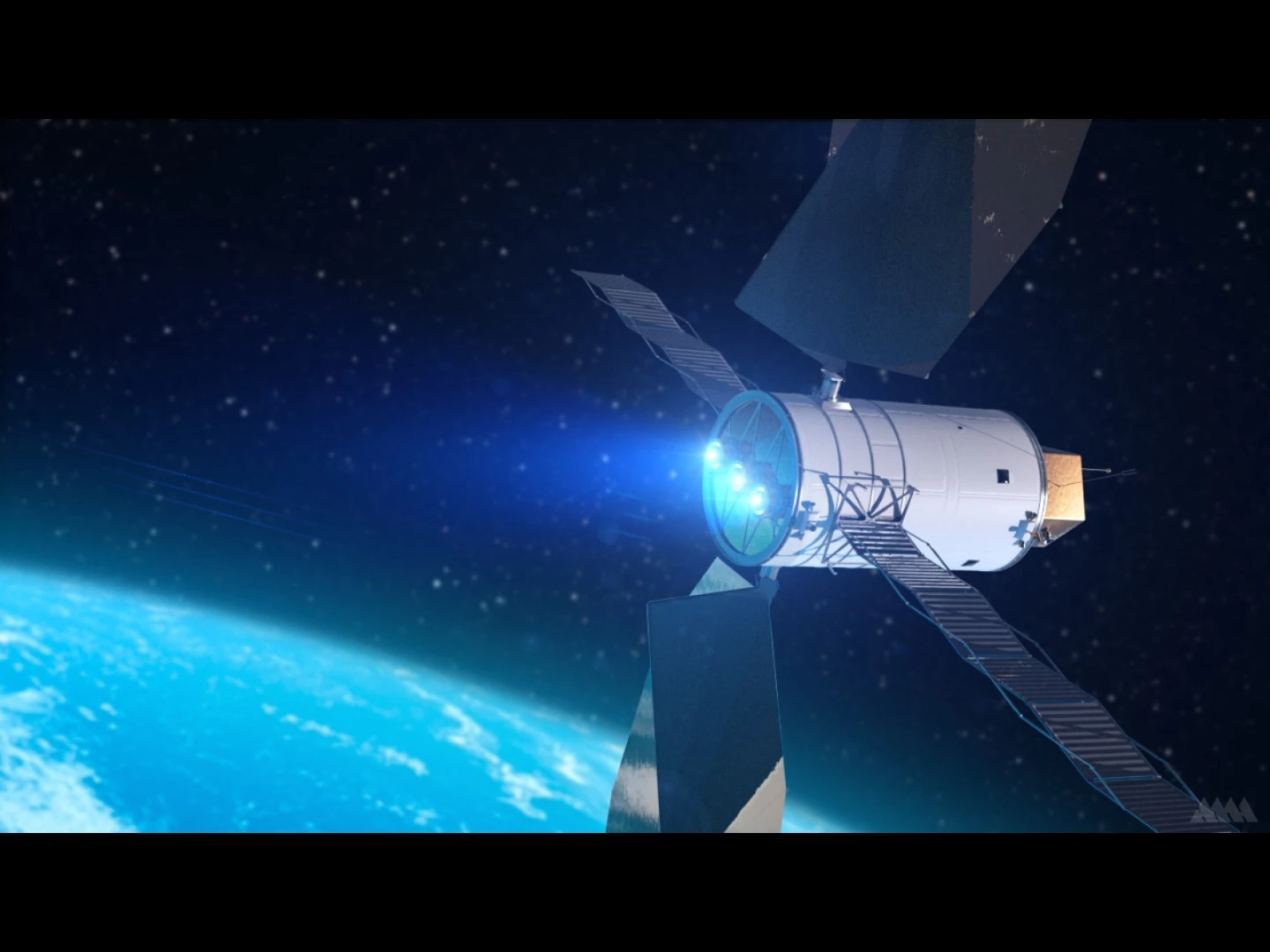

Another thing we think will be happening by 2040, and again you're starting to see the earliest examples of it now, is satellite servicing. Over the last few years Lockheed has been exploring the notion of simply going up there and refueling satellites. But realistically, with the robotics technology that will exist by then, we think you'll have systems that can actually service and repair satellites.

The other thing we've talked about is building satellites "inside out," so that a robotic servicer can literally reach in and replace boards. That means I could fly a satellite and then, three or four years later, upgrade its computing power or storage.

This is where (Lockheed executive vice president) Rick Ambrose likes to talk about the notion of a software-defined satellite. You see it in fighter aircraft right now: probably 80 percent of the F-35's capability is driven by software, so it's a software-defined fighter, right? Well, we can do that with satellites.

You see it with Tesla too. Tesla does these software updates and all of a sudden you've got a half dozen new capabilities in your car. So that will be a big part of it. It'll be interesting to watch this space and see whether it eventually makes more sense to repair satellites in orbit or to launch new ones.

Man and machine

A big area that's more of a personal passion for me is human/machine teaming. How do we leverage all of the capabilities of automation, AI, big-data analytics, deep learning and other technologies, and couple them with the human to make a more powerful human/machine pairing?

We have a guy named Bill Casebeer who is building a team called "human performance augmentation." His work focuses on machines that understand your state and, based on that state, act to enhance overall human/machine performance. Bill is actually a neuroscientist, so he's really trying to understand how people think and to drive the research toward peak performance.

A good friend of mine is a DARPA program manager (he's actually the guy whose project spun out Siri), and he recently went back to DARPA, where he's doing a project on explainable AI.

Think about how we collaborate: you ask a question, I give you an answer, and then I explain my reasoning. If all you ever have is the answer, there's no way for us to develop trust; we need to understand how you're thinking about the world. So he's now trying to build that into AI. They're just kicking off a whole new program on explainable AI, and to me that's a key part of this.

What's very interesting is that both the computational power and the software technologies have now developed to the point where we can start doing this kind of thing. I was part of a small AI company 30 years ago, and we were talking about these things then, but the computational horsepower just wasn't there. When you compare what the human brain can do with the computing power we have today, we're approaching that level.

So I fully expect this to be a clear part of how things play out in space in the 2040 timeframe. We're already seeing a lot of it in the military domain.