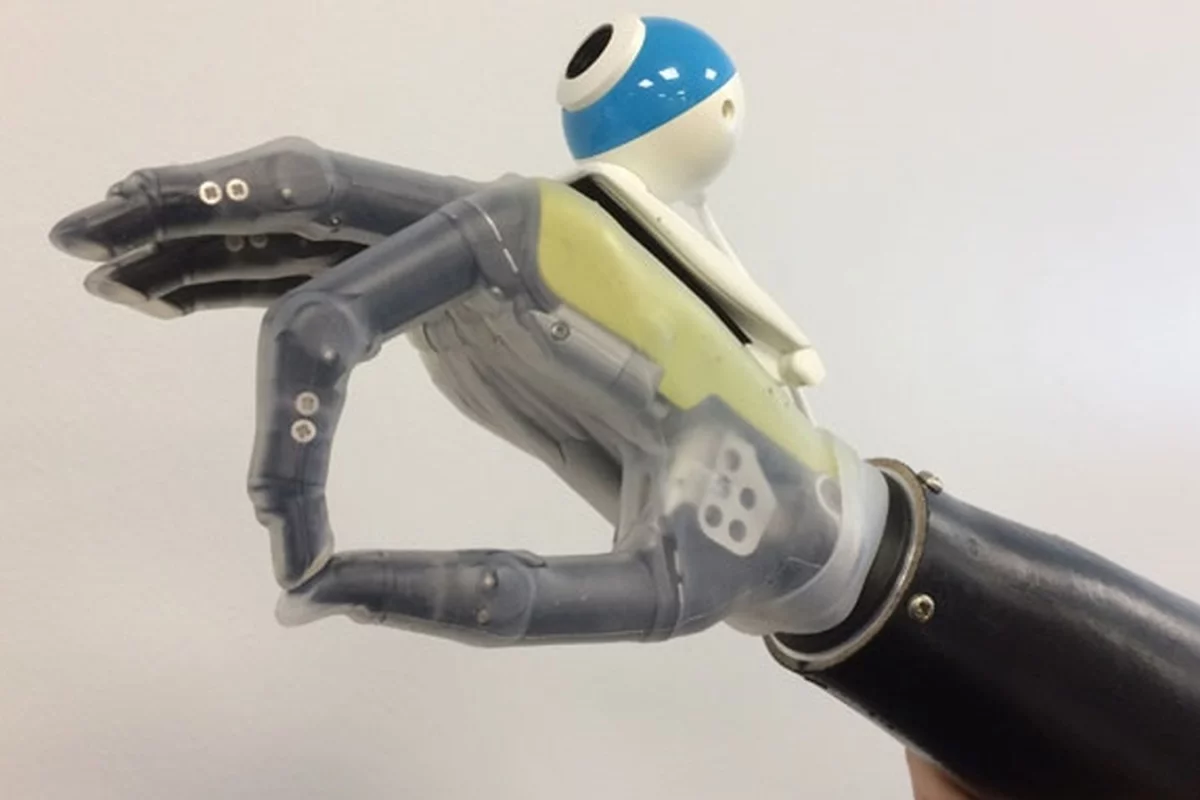

Biomedical engineers at Newcastle University are giving new meaning to hand-eye co-ordination by fitting a bionic hand with a camera. Designed to usher in a new generation of smarter prosthetic limbs, the hand undergoing clinical trials uses an off-the-shelf camera combined with machine learning to allow patients to grasp objects automatically without consciously operating the device.

Modern prosthetic limbs have come a long way from the metal hooks of yesteryear, but they still have a long way to go. The current generation are mechanical marvels that use myoelectric sensors to pick up electrical impulses from the patient's arm stump, allowing more natural control of movement, but operating them is not easy. Despite robotics, new servo motors, and feedback systems, using an artificial hand still requires concentration, patience, and practice.

That's because we don't so much control our hands as order them about. To see what this means, do something completely ordinary, like picking up a cup of tea, and watch closely what your hand does – the way it approaches the cup, zeroes in on the handle, shifts its grip, and guides the cup as it lifts.

For a normal hand, all of this is automatic. We simply order the hand to do what we want, and the lower parts of the central nervous system do the dirty work. But with a prosthetic hand, the patient has to constantly tell it what to do, because even the most sophisticated artificial hand is less of a limb and more a tool that requires step-by-step directions.

What the Newcastle University team is trying to do is develop a bionic hand that uses an inexpensive digital camera to analyze objects as the patient reaches for them, sorts out their shape and size, and alters its grip appropriately. The idea is that instead of the patient controlling how the hand moves, the prosthetic itself takes over like a car with a self-parking mode.

"Prosthetic limbs have changed very little in the past 100 years – the design is much better and the materials' are lighter weight and more durable but they still work in the same way," says Dr Kianoush Nazarpour, a Senior Lecturer in Biomedical Engineering at Newcastle University. "Using computer vision, we have developed a bionic hand which can respond automatically – in fact, just like a real hand, the user can reach out and pick up a cup or a biscuit with nothing more than a quick glance in the right direction.

"Responsiveness has been one of the main barriers to artificial limbs. For many amputees the reference point is their healthy arm or leg so prosthetics seem slow and cumbersome in comparison. Now, for the first time in a century, we have developed an 'intuitive' hand that can react without thinking."

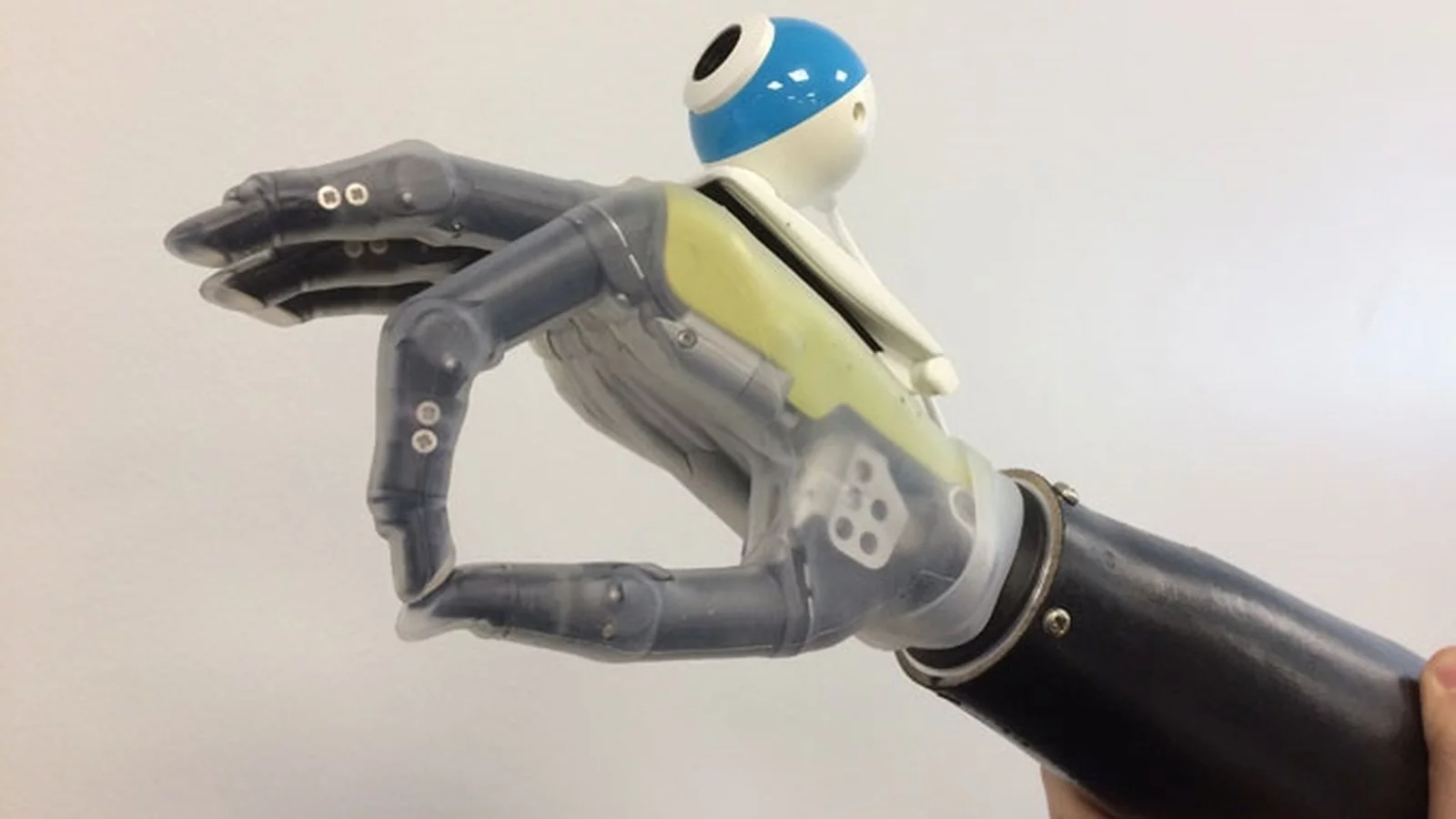

The key to the new hand is machine learning. The team built a Convolutional Neural Network (CNN) and trained it on images of more than 500 graspable objects, each photographed 72 times at five-degree intervals. The objects were sorted into four "grasp classes": pinch, tripod, palmar wrist neutral, and palmar wrist pronated. After fine-tuning, the network was tested in real time on both the training objects and new ones.
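The numbers in that description fit together: 72 views at five-degree steps add up to one full rotation around an object. The short Python sketch below works through that arithmetic. It assumes that each object was photographed 72 times and uses 500 objects as a floor (the article says only "over 500"); the variable names are illustrative and are not taken from the study.

```python
# Illustrative arithmetic only. The constants come from the article;
# the names and the 500-object floor are assumptions for this sketch.
VIEWS_PER_OBJECT = 72
STEP_DEGREES = 5
MIN_OBJECTS = 500  # "over 500" in the article, so treat this as a lower bound

GRASP_CLASSES = (
    "pinch",
    "tripod",
    "palmar wrist neutral",
    "palmar wrist pronated",
)

# Viewing angles for a single object: 0, 5, 10, ..., 355 degrees.
view_angles = [i * STEP_DEGREES for i in range(VIEWS_PER_OBJECT)]

# 72 views at 5-degree steps cover one complete turn.
total_rotation = VIEWS_PER_OBJECT * STEP_DEGREES

# Lower bound on the number of training images under these assumptions.
min_images = VIEWS_PER_OBJECT * MIN_OBJECTS

print(total_rotation)   # 360
print(min_images)       # 36000
print(view_angles[-1])  # 355
```

Under these assumptions the training set holds at least 36,000 images, each labelled with one of the four grasp classes.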

"We would show the computer a picture of, for example, a stick," says lead author of the study, Ghazal Ghazaei. "But not just one picture, many images of the same stick from different angles and orientations, even in different light and against different backgrounds and eventually the computer learns what grasp it needs to pick that stick up. So the computer isn't just matching an image, it's learning to recognize objects and group them according to the grasp type the hand has to perform to successfully pick it up. It is this which enables it to accurately assess and pick up an object which it has never seen before – a huge step forward in the development of bionic limbs."

In practical terms, this meant that by attaching a 99p (US$1.28) camera to the hand, the engineers could program it to recognize an object and select the appropriate grip to pick it up, such as a palmar wrist neutral grip for a cup or a palmar wrist pronated grip for a TV remote. Equally important, it does this in milliseconds, which the team says is 10 times faster than current prosthetics.
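One way to picture the grip selection step is as choosing the highest-scoring of the four grasp classes from a classifier's output. The Python sketch below is a simplified illustration, not the team's implementation: it assumes the network emits one raw score per class, and the function names and example scores are hypothetical.

```python
import math

# The four grasp classes named in the article.
GRASP_CLASSES = (
    "pinch",
    "tripod",
    "palmar wrist neutral",
    "palmar wrist pronated",
)


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def select_grasp(scores):
    """Return the most likely grasp class and its probability.

    `scores` is a hypothetical list of four raw classifier outputs,
    one per entry in GRASP_CLASSES.
    """
    if len(scores) != len(GRASP_CLASSES):
        raise ValueError("expected one score per grasp class")
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return GRASP_CLASSES[best], probs[best]


# Made-up scores for an image of a cup; the third class scores highest.
grasp, confidence = select_grasp([0.2, 0.1, 2.5, 0.7])
print(grasp)  # palmar wrist neutral
```

Because the output is a grasp class rather than an object identity, the same step applies to objects the network has not seen before, which matches the behaviour the researchers describe.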

The researchers say the new hand is also more flexible than alternative systems and better able to handle strange objects without relying on a giant database of images.

Working with the Newcastle upon Tyne Hospitals NHS Foundation Trust, the team is providing the hand to patients at Newcastle's Freeman Hospital. However, the bionic hand and its camera are seen as an intermediate solution until a more advanced prosthetic can be built that includes sophisticated sensors and is controlled directly by the patient's brain.

"It's a stepping stone towards our ultimate goal," says Nazarpour. "But importantly, it's cheap and it can be implemented soon because it doesn't require new prosthetics – we can just adapt the ones we have."

The research was published in the Journal of Neural Engineering.

The video below shows how a fully developed camera hand would work.

Source: Newcastle University