It's already nigh on impossible to spot computer-generated graphics in the latest blockbusters, but the job is set to get even harder. Researchers have developed a graphics algorithm that not only realistically models light reflecting off complex surfaces like water, leather, glass, and metal, but also runs about 100 times faster than current state-of-the-art systems.

Many rendering techniques for computer graphics opt to smooth out complex and bumpy surfaces to speed up their calculations. According to the researchers behind the new method, such techniques were already fast enough to use in the early 1980s, but the lack of detail can make those surfaces look a bit off when seen up close.

The algorithm developed by Prof. Ravi Ramamoorthi and colleagues at the University of California, San Diego (UCSD), UC Berkeley, Cornell and Autodesk, leads to much more realistic results because it breaks down each pixel of an uneven or intricate surface into a myriad of so-called "microfacets." Each microfacet acts like a tiny, smooth mirror, reflecting light in a particular direction. Taken together, tens of thousands of these tiny mirrors can help generate a highly realistic representation of many different surfaces.

Microfacets had already been used in other rendering systems, but processing them with accuracy required an impractical amount of number-crunching. The system introduced here reduces the necessary calculations by a factor of 100 and is only about 40 percent more demanding on hardware than the simplified "smooth surface" methods described above.

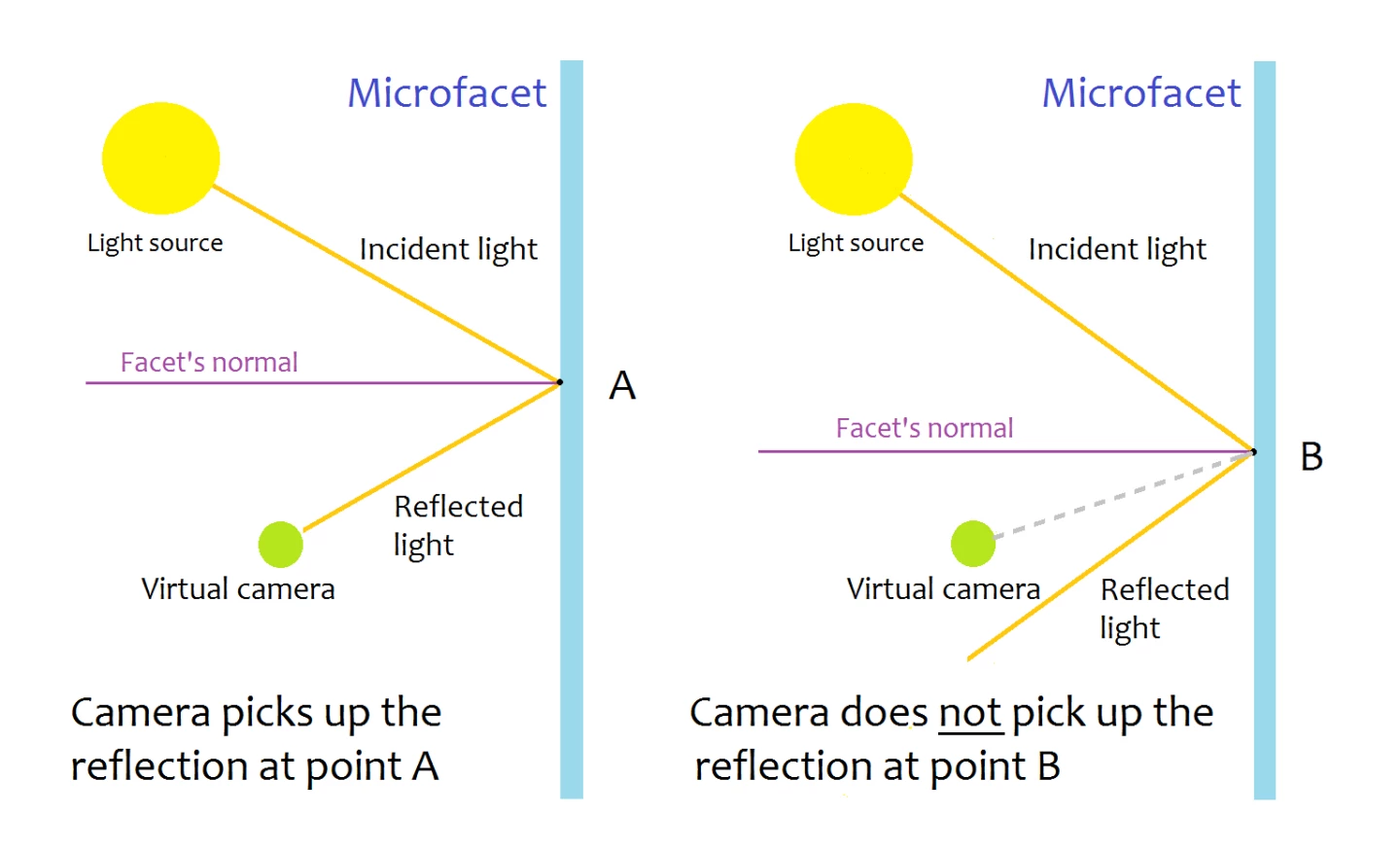

The starting point for the researchers was to calculate, for each microfacet, its so-called "normal vector" – which lies on a line perpendicular to the surface of the facet. With the help of this vector, researchers can predict exactly where light will reflect.
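To make the role of the normal vector concrete, here is a minimal Python sketch of the standard mirror-reflection formula, r = d - 2(d·n)n, which gives the outgoing direction for a ray hitting a perfectly smooth facet. The function names (`normalize`, `dot`, `reflect`) are illustrative choices, not taken from the researchers' software.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    """Dot product of two 3D vectors."""
    return sum(x * y for x, y in zip(a, b))

def reflect(incoming, normal):
    """Mirror-reflect an incoming ray direction about a facet's normal.

    `incoming` points along the direction the light is travelling;
    `normal` is perpendicular to the facet (any non-zero length).
    """
    n = normalize(normal)
    d = dot(incoming, n)
    return tuple(i - 2.0 * d * c for i, c in zip(incoming, n))

# Light travelling down and to the right hits a facet facing straight up.
print(reflect((1.0, -1.0, 0.0), (0.0, 1.0, 0.0)))  # → (1.0, 1.0, 0.0)
```

The ray keeps its sideways motion and flips its vertical motion, which is what one would expect from a tiny horizontal mirror.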

The scientists determined which points in the microfacets would reflect light and which wouldn't based on the angle formed by the incoming light ray and the facet's normal vector. A virtual camera, corresponding to the point of view from which the rendering was done, would only pick up light reflected by a facet if it happened to be in the path of the reflected ray.
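One common way to express this test in code is with the "half vector": a mirror-like facet sends light from the source to the camera only when its normal points halfway between the direction to the light and the direction to the camera. The sketch below assumes that formulation; the function name `facet_reaches_camera` and the `tolerance_deg` parameter are hypothetical, introduced here only for illustration.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    """Dot product of two 3D vectors."""
    return sum(x * y for x, y in zip(a, b))

def facet_reaches_camera(normal, to_light, to_camera, tolerance_deg=1.0):
    """Return True if a mirror-like facet reflects the light toward the camera.

    `to_light` and `to_camera` point away from the facet. The facet counts
    as reflecting when its normal lies within `tolerance_deg` of the half
    vector between those two directions. Assumes the light and camera are
    not in exactly opposite directions (the half vector would be zero).
    """
    l = normalize(to_light)
    c = normalize(to_camera)
    half = normalize(tuple(a + b for a, b in zip(l, c)))
    cos_angle = dot(normalize(normal), half)
    return cos_angle >= math.cos(math.radians(tolerance_deg))

light = (-1.0, 1.0, 0.0)   # light up and to the left of the facet
camera = (1.0, 1.0, 0.0)   # camera up and to the right of the facet

print(facet_reaches_camera((0.0, 1.0, 0.0), light, camera))  # flat facet
print(facet_reaches_camera((0.2, 1.0, 0.0), light, camera))  # tilted facet
```

In this setup the flat facet lines up with the half vector and the tilted one misses by roughly 11 degrees, so only the first would show up as a glint from this camera position.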

"When there are small bumps on the surface, the places where the angles line up correctly depend on those small bumps," Prof. Marschner, who took part in the study, told Gizmag. "There could be thousands of different places where reflection happens, on a bumpy mirror."

Whereas other systems would calculate the reflections one by one, requiring plenty of computing resources, the scientists in this study grouped microfacets into patches and then approximated the amount of light reflected by each patch. The result is not only 100 times quicker than before, but can also, for the first time, be used in computer animations rather than only in still pictures, as is the case with current systems.
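The difference between checking facets one by one and approximating per patch can be illustrated with a toy Python sketch. It is a deliberately simplified stand-in, not the researchers' formulation: each facet's orientation is reduced to a single tilt angle, and each patch is summarised by the mean and spread of its angles, with a Gaussian assumed for the distribution. All names (`exact_count`, `summarize_patches`, `estimate_from_summaries`) are hypothetical.

```python
import math
import random
import statistics

def exact_count(angles, target, tol):
    """Brute force: test every facet's tilt angle against the target."""
    return sum(1 for a in angles if abs(a - target) <= tol)

def summarize_patches(angles, patch_size):
    """Precompute (facet count, mean angle, spread) once per patch."""
    summaries = []
    for start in range(0, len(angles), patch_size):
        patch = angles[start:start + patch_size]
        sigma = max(statistics.pstdev(patch), 1e-6)  # avoid zero spread
        summaries.append((len(patch), statistics.fmean(patch), sigma))
    return summaries

def estimate_from_summaries(summaries, target, tol):
    """Estimate how many facets reflect, touching only patch summaries."""
    total = 0.0
    for count, mean, sigma in summaries:
        def cdf(x):
            # Cumulative distribution of the assumed Gaussian.
            return 0.5 * (1.0 + math.erf((x - mean) / (sigma * math.sqrt(2.0))))
        total += count * (cdf(target + tol) - cdf(target - tol))
    return total

random.seed(0)
angles = [random.gauss(0.0, 0.2) for _ in range(10_000)]  # synthetic bumps
summaries = summarize_patches(angles, patch_size=100)

print("exact:", exact_count(angles, target=0.1, tol=0.01))
print("patch estimate:", estimate_from_summaries(summaries, target=0.1, tol=0.01))
```

The point of the sketch is the cost structure: the summaries are built once, after which every new light or camera direction needs only one small calculation per patch rather than one test per facet.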

The next steps for the researchers will be to work on rendering very rugged surfaces, supporting multi-resolution representations, and making their software run even faster using less memory.

The advance will be presented later this week at SIGGRAPH 2016 in Anaheim, California.

Source: UCSD