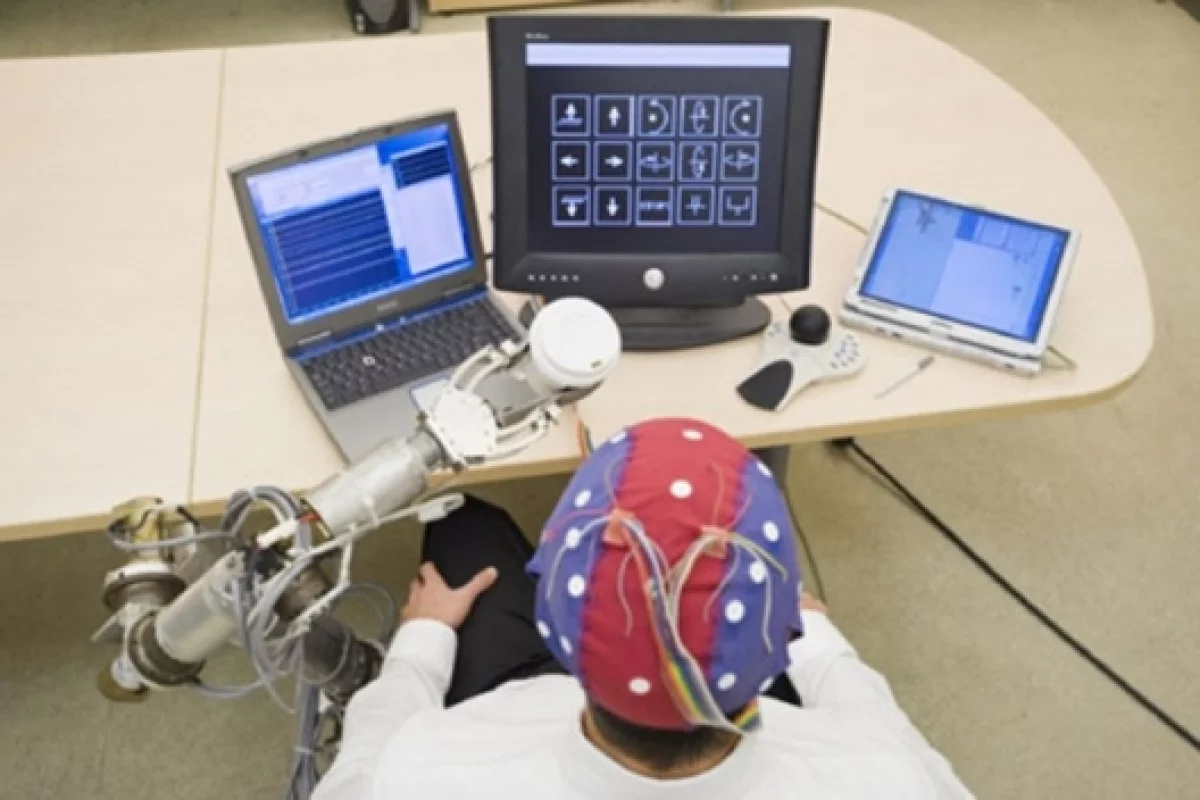

February 11, 2009 Researchers at the University of South Florida have designed a system that uses an electroencephalograph (EEG) to read the brain waves of wheelchair-bound people, allowing them to control a robotic arm with their thoughts. The Brain-Computer Interface (BCI) captures P300 responses, consistently detectable brain waves associated with decision making, and transmits instructions to the robo-arm “without the user moving a muscle.”

“We modified the BCI system to display a matrix of several options that include actions or directions that the user would like to have the Wheelchair-mounted Robotic Arm perform,” said Redwan Alqasemi, a researcher in the USF Department of Mechanical Engineering’s Center for Rehabilitation Engineering and Technology. “The user wears a head cap fitted with electrodes to measure P-300 electroencephalogram activities in the brain. While the movement options intensify on a screen and flash at certain frequencies, the user concentrates on the option desired to trigger the desired P-300 brain signal. The electrodes detect the signal, relate it to the desired action, then, the WMRA control system translates the brain signal to the robotic arm, which carries out the desired movements.”
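The selection step Alqasemi describes can be illustrated with a rough sketch. This is not the USF team's code: the option names, sampling rate, time window and the simulated EEG data are all assumptions made for illustration, and simple averaging stands in for the trained classifiers that real P300 systems typically use. The idea is that each on-screen option flashes repeatedly, the EEG following each flash is averaged, and the option whose average shows the strongest response around 300 ms after the flash is taken as the user's choice.

```python
import random
from statistics import mean

SAMPLE_RATE_HZ = 250           # assumed EEG sampling rate
P300_WINDOW_S = (0.25, 0.45)   # assumed post-flash window where a P300 peak is expected
# Hypothetical option matrix; the article does not list the actual options.
OPTIONS = ["left", "right", "up", "down", "grip", "release"]


def window_indices():
    """Sample indices that bound the assumed P300 window."""
    return (round(P300_WINDOW_S[0] * SAMPLE_RATE_HZ),
            round(P300_WINDOW_S[1] * SAMPLE_RATE_HZ))


def simulate_epoch(is_target, rng, duration_s=0.6):
    """Return one synthetic post-flash epoch in microvolts (simulated, not real EEG).

    Every epoch is Gaussian noise; flashes of the option the user is
    concentrating on get an extra positive bump inside the P300 window.
    """
    n = round(duration_s * SAMPLE_RATE_HZ)
    epoch = [rng.gauss(0.0, 5.0) for _ in range(n)]
    if is_target:
        lo, hi = window_indices()
        for i in range(lo, hi):
            epoch[i] += 8.0
    return epoch


def p300_score(epochs):
    """Average the epochs sample by sample, then average inside the window."""
    lo, hi = window_indices()
    averaged = [mean(samples) for samples in zip(*epochs)]
    return mean(averaged[lo:hi])


def select_option(epochs_by_option):
    """Pick the option whose flashes produced the strongest averaged response."""
    return max(epochs_by_option, key=lambda opt: p300_score(epochs_by_option[opt]))


def run_trial(intended, flashes_per_option=20, seed=0):
    """Simulate one selection round in which the user attends to `intended`."""
    rng = random.Random(seed)
    epochs_by_option = {
        opt: [simulate_epoch(opt == intended, rng) for _ in range(flashes_per_option)]
        for opt in OPTIONS
    }
    return select_option(epochs_by_option)


if __name__ == "__main__":
    print(run_trial("grip"))
```

Averaging over repeated flashes matters because a single P300 response is small compared with background EEG activity; repeating the flash lets the noise cancel out while the response to the attended option remains. In a full system, the selected option would then be handed to the arm's controller as a movement command.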

“Our Rehabilitation Engineering & Technology Program is aimed at designing and developing rehabilitation robotic systems that maximize the manipulation and mobility functions of persons with disabilities,” said Rajiv Dubey, professor and chair of the USF Department of Mechanical Engineering, and director of the Center for Rehabilitation Engineering & Technology. “The result will be that mobility-impaired persons can live more independently, with improved quality of life and even better employment outcomes.”

The WMRA is intended to give patients who are totally paralyzed a semblance of physical mobility.

Full text of press release available here.

Kyle Sherer

Via: MedGadget.