When you reach into your pocket and grab your keys, you can tell how they're oriented without actually seeing them. An engineering team at the University of California San Diego has created a soft robotic gripper that works in much the same way. It can build virtual 3D models of objects simply by touching them, then manipulate those items accordingly.

Ordinarily, robots need to see the objects they're gripping, using a camera, and/or they first need to be trained to grip them. This means that low-light situations can be challenging, as can objects that the robot has never been "introduced to" before.

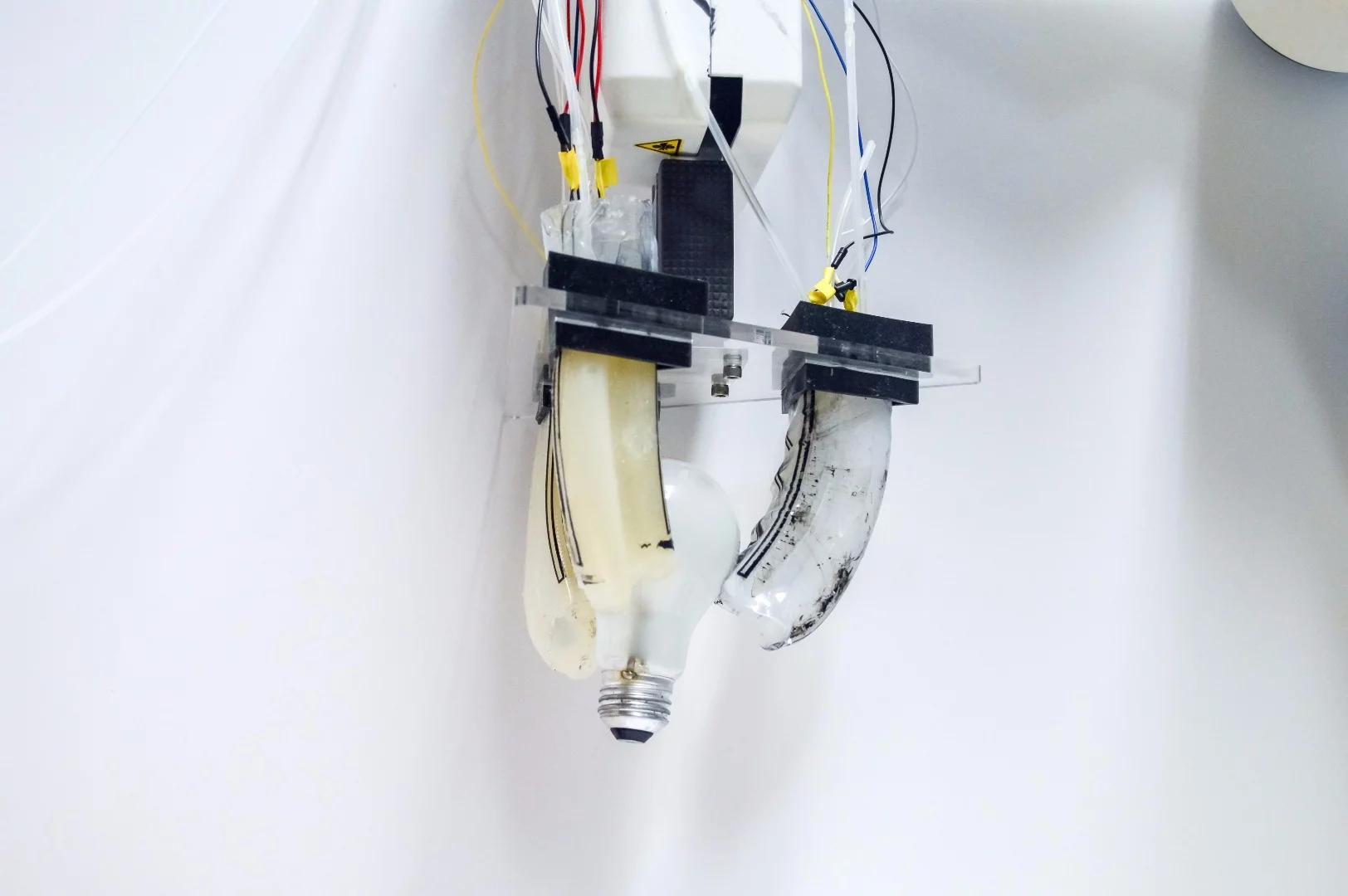

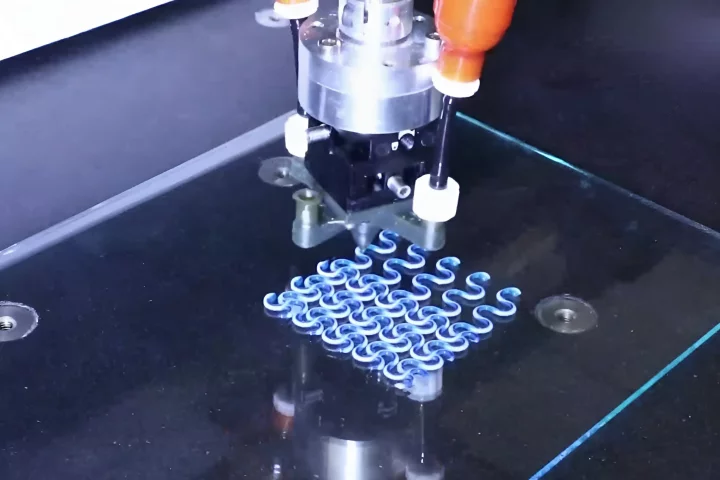

The new gripper is different, in that it can ascertain the three-dimensional shape of an unfamiliar object just by touching it. It does so using three pneumatic fingers, each one of which is covered in a sensing skin composed of flexible silicone with embedded carbon nanotubes.

When one of the soft fingers comes into contact with the unyielding surface of an object, the air pressure within the finger increases at that location. That increase in pressure causes the electrical conductivity of the nanotubes in that area to change. As the gripper feels its way around the object, a series of such electrical signals is transmitted from the fingers to a control board, where a virtual 3D model is created.
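For readers who like to see the idea in code, here is a minimal Python sketch of how conductivity changes might be turned into a rough shape estimate. It is not the team's software: the `SkinReading` layout, the 5 percent threshold, the baseline resistance, and the assumption that each sensing patch's position is already known are all hypothetical, chosen only to illustrate the general principle of collecting contact points.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float, float]


@dataclass
class SkinReading:
    """One sample from a patch of sensing skin (hypothetical data layout)."""
    position: Point     # assumed: estimated patch location, in metres
    resistance: float   # assumed: measured patch resistance, in ohms


def is_contact(reading: SkinReading, baseline: float,
               threshold: float = 0.05) -> bool:
    """Flag contact when resistance deviates from the baseline by more
    than `threshold`, expressed as a fraction of the baseline."""
    return abs(reading.resistance - baseline) / baseline > threshold


def build_point_cloud(readings: List[SkinReading], baseline: float,
                      threshold: float = 0.05) -> List[Point]:
    """Keep only the positions at which contact was detected."""
    return [r.position for r in readings if is_contact(r, baseline, threshold)]


def bounding_box(points: List[Point]) -> Tuple[Point, Point]:
    """Return the (min corner, max corner) enclosing the contact points."""
    if not points:
        raise ValueError("no contact points recorded")
    xs, ys, zs = zip(*points)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))
```

A bounding box is about the crudest "model" possible; a real system would presumably fit a much richer surface to the contact points.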

Once the gripper has established the overall shape of the item, it can then grasp it and manipulate it. By selectively inflating one or more of three air chambers within each finger, it can even make a twisting motion – this allows it to screw a lightbulb in, for example.
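The selective inflation described above can be illustrated with a short Python sketch. The article does not describe the actual control scheme, so everything here is an assumption: that the three chambers sit 120 degrees apart around the finger's axis, that inflating a chamber bends the finger away from it, and that pressure is expressed in kilopascals up to a hypothetical maximum. The function `chamber_pressures` is an illustrative name, not part of any real API.

```python
import math
from typing import List

# Assumed layout: three chambers spaced evenly around the finger's axis.
CHAMBER_ANGLES_DEG = (0.0, 120.0, 240.0)


def chamber_pressures(bend_direction_deg: float, magnitude: float,
                      max_pressure_kpa: float = 100.0) -> List[float]:
    """Return one pressure per chamber for a desired bend.

    bend_direction_deg: direction in which the fingertip should move.
    magnitude: how hard to bend, from 0 (straight) to 1 (full pressure).
    """
    if not 0.0 <= magnitude <= 1.0:
        raise ValueError("magnitude must be between 0 and 1")
    pressures = []
    for chamber_angle in CHAMBER_ANGLES_DEG:
        # Assumed: an inflated chamber pushes the finger away from itself,
        # so the chamber opposite the bend direction gets the most pressure.
        offset = math.radians(bend_direction_deg - (chamber_angle + 180.0))
        pressures.append(max_pressure_kpa * magnitude * max(0.0, math.cos(offset)))
    return pressures
```

Under these assumptions, commanding each of the three fingers to bend sideways around a held object, rather than toward it, is one plausible way a twisting motion could be produced.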

The researchers now hope that, with the addition of artificial intelligence, the system could be improved to identify what an item is, based simply on how it feels.

The technology was developed by a team led by Michael T. Tolley, and was recently presented at the International Conference on Intelligent Robots and Systems, in Vancouver.

Source: UC San Diego Jacobs School of Engineering