With the help of a new system from Scotland's University of St Andrews, a computer or smartphone may soon be able to tell the difference between an apple and an orange, or an empty glass and a full one, just by touching it. The system draws on a database of objects and materials it has been taught to recognize, and could be put to work sorting items in warehouses or recycling centers, identifying goods at self-serve checkouts in stores, or displaying the names of objects in another language.

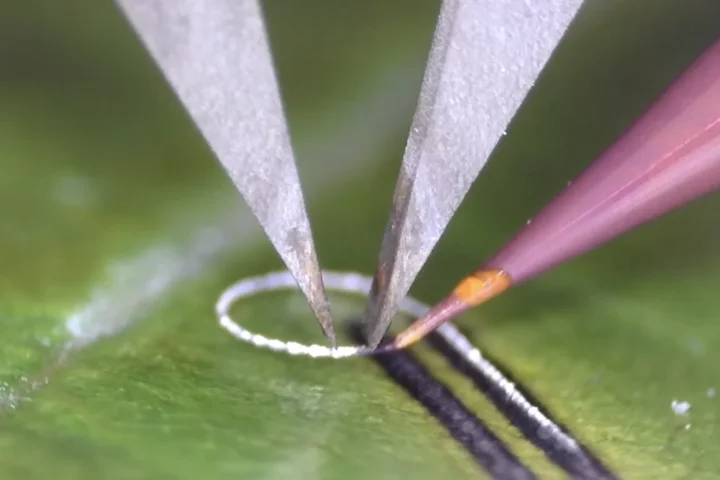

The system is built on the Soli chip from Google's Advanced Technology and Projects (ATAP) team, which uses radar to detect hand gestures for controlling a smartphone or a wearable. Armed with a Soli developer kit, the St Andrews team created a system called Radar Categorization for Input and Interaction, or RadarCat, which identifies objects, materials and even different body parts by analyzing how radar signals bounce off them and comparing those signals against a database built up through machine learning.
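For readers curious about the matching step, here's a minimal sketch of the general idea in Python: each known object is stored as an average "signature", and a new reading is labeled with whichever signature it sits closest to. It assumes each radar reading has already been boiled down to a short list of numbers. The feature values, the labels and the nearest-centroid approach are all illustrative assumptions, not RadarCat's actual features or classifier.

```python
from math import dist
from statistics import fmean

# Hypothetical training data: each label maps to a few feature vectors.
# These numbers are invented for illustration; they are not real Soli readings.
TRAINING = {
    "apple": [[0.82, 0.31, 0.12], [0.80, 0.29, 0.15]],
    "orange": [[0.61, 0.44, 0.20], [0.63, 0.47, 0.18]],
    "empty glass": [[0.20, 0.75, 0.05], [0.22, 0.73, 0.07]],
}


def centroid(vectors):
    """Average a list of equal-length feature vectors component by component."""
    return [fmean(column) for column in zip(*vectors)]


def build_database(training):
    """Reduce each label's training samples to a single mean signature."""
    return {label: centroid(samples) for label, samples in training.items()}


def classify(reading, database):
    """Return the label whose signature is closest (Euclidean distance) to the reading."""
    return min(database, key=lambda label: dist(reading, database[label]))


database = build_database(TRAINING)
print(classify([0.81, 0.30, 0.14], database))  # prints "apple"
```

Adding a new object to this toy version only means adding a few labeled samples to the training data; a real system would need far richer signal features to separate look-alike materials.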

"The Soli miniature radar opens up a wide range of new forms of touchless interaction," says Professor Aaron Quigley, Chair of Human Computer Interaction at the University of St Andrews. "Once Soli is deployed in products, our RadarCat solution can revolutionize how people interact with a computer, using everyday objects that can be found in the office or home, for new applications and novel types of interaction."

Once the system knows what object it's touching, it can use that information in a few ways. The team suggests an object dictionary that could bring up information on a scanned item, such as what it's called in another language, the specs of a phone, or the nutritional value of a food. Recycling centers could determine what individual items are made of and sort them accordingly, while stores might use the system in place of barcodes to identify items.

The researchers also suggest that a radar sensor held in the hand like a mouse could change settings in a program based on the material it's sliding over. To change the color of a line in image-editing software, for example, a user could hold the sensor over a red patch on the desk.

A Soli-equipped phone, meanwhile, could detect when it's being held in a gloved hand and make its icons bigger, or open certain apps when tapped against different body parts: a tap on the leg might open Maps, while holding it to your stomach could trigger a recipe app. Sure, it might be easier to do these things the way we already do, but the researchers are only brainstorming at this stage. There's no doubt the technology has plenty of potential applications, and the team plans to continue investigating them.

"Our future work will explore object and wearable interaction, new features and fewer sample points to explore the limits of object discrimination," says Quigley. "Beyond human computer interaction, we can also envisage a wide range of potential applications ranging from navigation and world knowledge to industrial or laboratory process control."

The research will be presented at the ACM User Interface Software and Technology Symposium next week.

The system can be seen in action in the video below.

Source: University of St Andrews