Fingerprinting is one of the mainstays of forensic science, but despite what we see on TV and in movies, analyzing and matching latent prints is a difficult business and still the province of experts. Now scientists from the National Institute of Standards and Technology (NIST) and Michigan State University are using algorithms and machine learning to automate part of the process and make fingerprint matching more efficient.

With fingerprint readers built into smartphones, it seems as if making an automated fingerprint analyzer for forensic scientists would be a doddle. True, if you can get nice, clean, whole prints, you can match two together with an overhead projector and a couple of slides. The trouble is that criminals are very inconsiderate, and latent prints from crime scenes are typically incomplete, distorted, smudged, or left on patterned surfaces like banknotes that make them hard to read, much less analyze and match.

Because of this, fingerprint analysis is a very human, subjective occupation. Experts examine latent prints not only to match them against those in police files, but also to decide whether a print holds enough information to be worth searching for a match in the first place.

Current computerized systems can handle fingerprints collected under controlled conditions from all 10 fingers, but don't do nearly as well with real-world crime scene prints. Latent prints can be searched using the Automated Fingerprint Identification System (AFIS), but if they aren't properly vetted for their potential to provide useful information, the chances of getting back erroneous matches increase greatly.

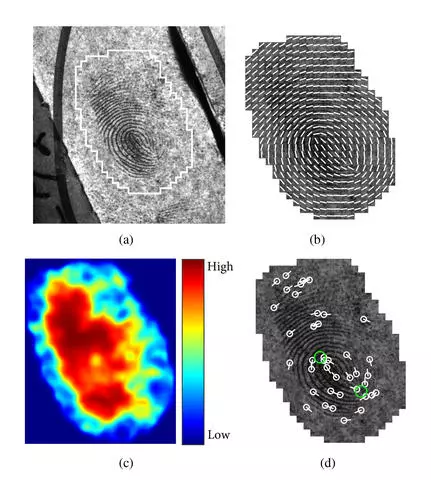

For the NIST/Michigan team, the step of deciding whether a print is worth matching looked like the most promising candidate for automation, in the hope of speeding up the process and cutting down on backlogs. To do this, the researchers turned to 31 fingerprint experts, who analyzed 100 latent prints each. The experts scored each print's quality on a scale of 1 to 5, and the scored prints were then used to train the algorithm using machine learning.

The system's performance was then tested on a new set of latent prints for which the researchers already knew the correct matches. The scored prints were submitted to AFIS, which was connected to a database of over 250,000 rolled prints that included the known matches for the latent prints. The search results were then compared with the algorithm's quality scores to see how often prints at each quality level produced a correct match. To allay privacy concerns, the prints from the Michigan State Police database were stripped of all personal information.
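To make that kind of comparison concrete, here is a minimal Python sketch of one simple way to tabulate it: group the searches by quality score and compute the fraction at each score where the top AFIS candidate was the known true match. This is an illustration, not the method from the NIST/MSU paper. The function name `hit_rate_by_quality`, the (score, top candidate, true match) record layout, and the toy IDs are all hypothetical, and the inputs are invented values rather than data from the study.

```python
from collections import defaultdict


def hit_rate_by_quality(results):
    """Hypothetical helper: per quality score, the fraction of searches
    whose top AFIS candidate was the known true match.

    `results` is an assumed list of (quality_score, top_candidate_id, true_match_id)
    tuples; the layout is illustrative, not taken from the study.
    """
    totals = defaultdict(int)  # number of searches seen at each quality score
    hits = defaultdict(int)    # number of those searches that returned the true match first
    for score, top_candidate, true_match in results:
        totals[score] += 1
        if top_candidate == true_match:
            hits[score] += 1
    # Report scores in ascending order so any trend with quality is easy to read.
    return {score: hits[score] / totals[score] for score in sorted(totals)}


# Invented toy searches for illustration only (not results from the research).
toy_results = [
    (1, "A17", "B02"),
    (1, "C40", "C40"),
    (3, "D11", "D11"),
    (3, "E05", "F09"),
    (5, "G21", "G21"),
    (5, "H33", "H33"),
]
print(hit_rate_by_quality(toy_results))  # {1: 0.5, 3: 0.5, 5: 1.0}
```

A real evaluation would use far more searches per score and would likely look beyond the single top candidate, but the basic idea of lining up quality scores against match success is the same.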

According to the team, there was a consistent correlation between quality and accuracy, with prints given low quality scores producing more erroneous results. In addition, the scoring algorithm did slightly better than the human experts. Next, the team will try to improve the algorithm and gauge its error rate more accurately by using a larger dataset.

"We've run our algorithm against a database of 250,000 prints, but we need to run it against millions," says Elham Tabassi, a computer engineer at NIST. "An algorithm like this has to be extremely reliable, because lives and liberty are at stake."

The research was published in the IEEE Transactions on Information Forensics and Security.

Source: NIST