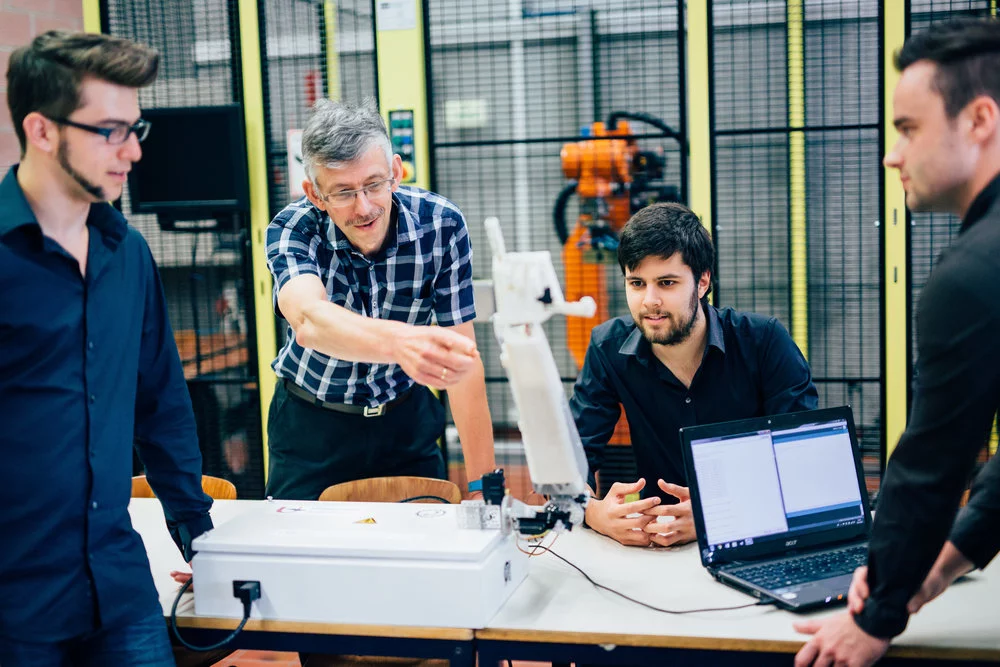

A team from the University of Antwerp is developing a robotic sign language interpreter. The first version of the robot hand, named Project Aslan, is mostly 3D-printed and can translate text into fingerspelling gestures, but the team's ultimate goal is to build a two-armed robot with an expressive face, to convey the full complexity of sign language.

There have been a number of technological attempts to bridge the gap between the hearing and deaf communities, including smart gloves and tablet-like devices that translate gestures into text or audio, and even a full-size signing robot from Toshiba.

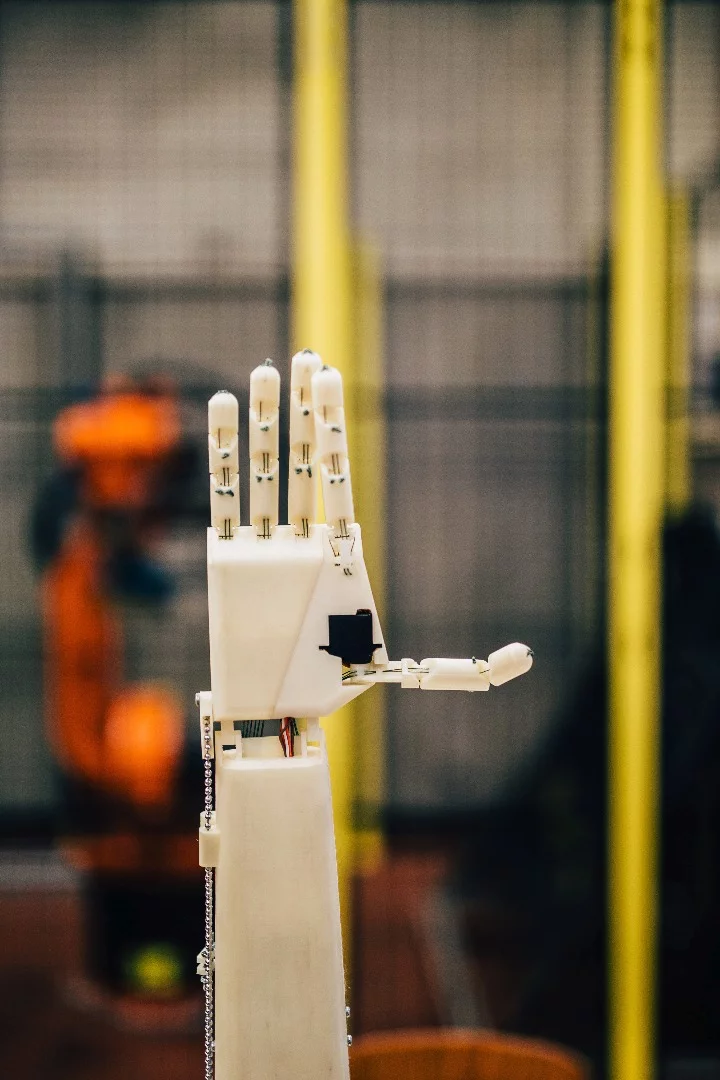

Project Aslan (which stands for "Antwerp's Sign Language Actuating Node") is designed to translate text or spoken words into sign language. In its current form, the Aslan arm is connected to a computer which is, in turn, connected to a network. Users can connect to the local network and send text messages to Aslan, and the hand will start frantically signing. Currently, it uses an alphabet system called fingerspelling, where each individual letter is communicated through a separate gesture.
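The team's software isn't described here, but the basic idea of fingerspelling, one hand pose per letter, can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the names `POSES`, `placeholder_pose` and `text_to_poses` are hypothetical, and the servo angles are made-up placeholders rather than real calibrated hand shapes.

```python
import string

# Assumption: one angle per servo motor; the article mentions 16 servos.
NUM_SERVOS = 16


def placeholder_pose(letter):
    """Return a made-up, deterministic pose (16 angles in degrees, 0-180).

    A real system would use calibrated hand shapes for each letter;
    these numbers are placeholders only.
    """
    offset = ord(letter) - ord("a")
    return tuple((offset * 7 + i * 11) % 181 for i in range(NUM_SERVOS))


# Hypothetical lookup table: letter -> servo angles for that letter's gesture.
POSES = {letter: placeholder_pose(letter) for letter in string.ascii_lowercase}


def text_to_poses(text):
    """Turn a text message into a list of (letter, pose) pairs.

    Characters without a fingerspelling pose (spaces, digits,
    punctuation) are skipped in this simplified sketch.
    """
    return [(ch, POSES[ch]) for ch in text.lower() if ch in POSES]


if __name__ == "__main__":
    for letter, pose in text_to_poses("Hi, Bob!"):
        print(letter, pose[:4], "...")
```

In a setup like the one described, each pose in the list would then be sent to the motor controllers in turn, with a short pause between letters so a viewer can read them.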

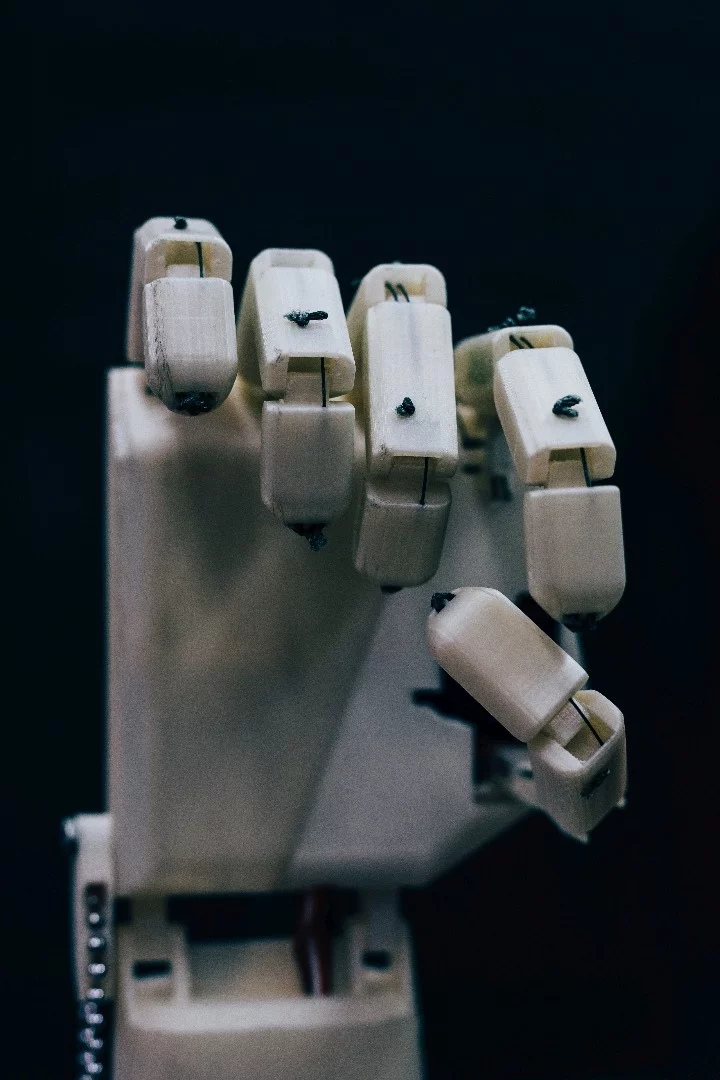

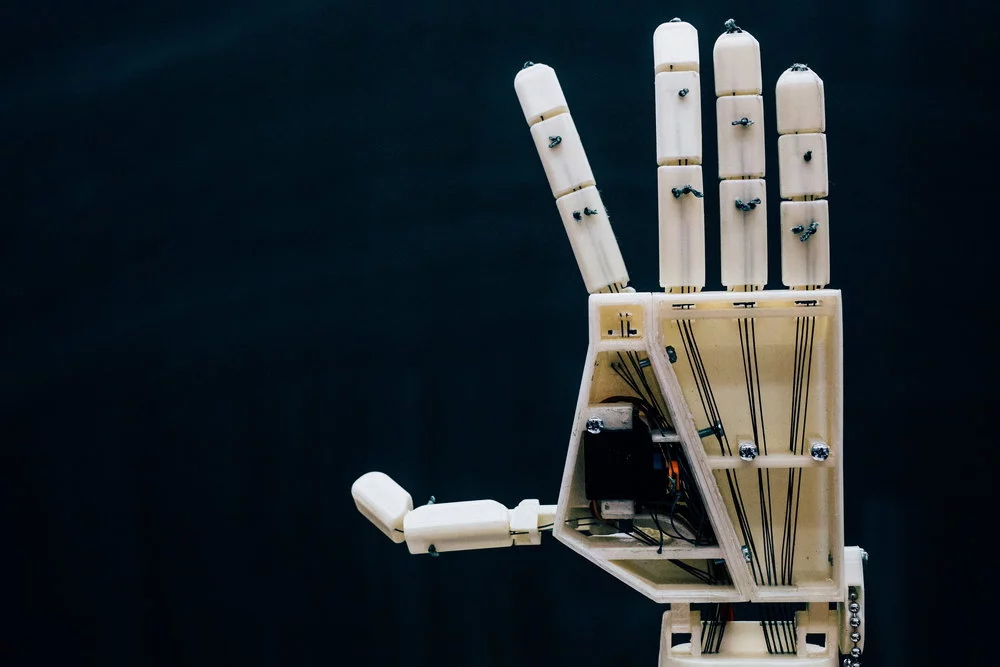

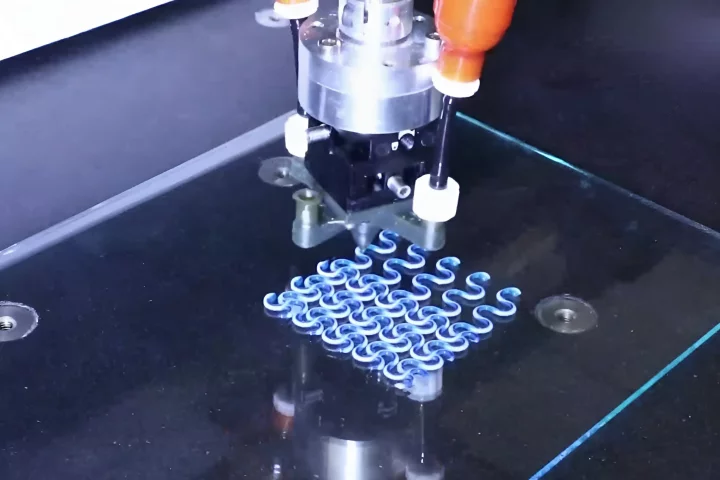

The robot hand is made up of 25 plastic parts 3D-printed on an entry-level desktop printer, plus 16 servo motors, three motor controllers, an Arduino Due microcontroller board and a few other electronic components. The plastic parts reportedly take about 139 hours to print, while final assembly of the robot takes another 10.

The manufacturing is being handled through a global 3D printing network called Hubs, which is designed to ensure the robot can be built anywhere. The DIY approach isn't meant to replace human interpreters, though. Instead, the team sees it as an opportunity to aid the hearing-impaired in situations where human help isn't available.

"A deaf person who needs to appear in court, a deaf person following a lesson in a classroom somewhere," says Erwin Smet, robotics teacher at the University of Antwerp. "These are all circumstances where a deaf person needs a sign language interpreter, but where often such an interpreter is not readily available. This is where a low-cost option like Aslan can offer a solution."

While the current version can only translate text into fingerspelling signs, the team is working on extending the system in future versions so that it can communicate more nuanced ideas. In sign language, meaning is conveyed through a combination of hand gestures, body posture and facial expressions, so the researchers are developing a setup with two arms, and there are plans to add an expressive face to the system.

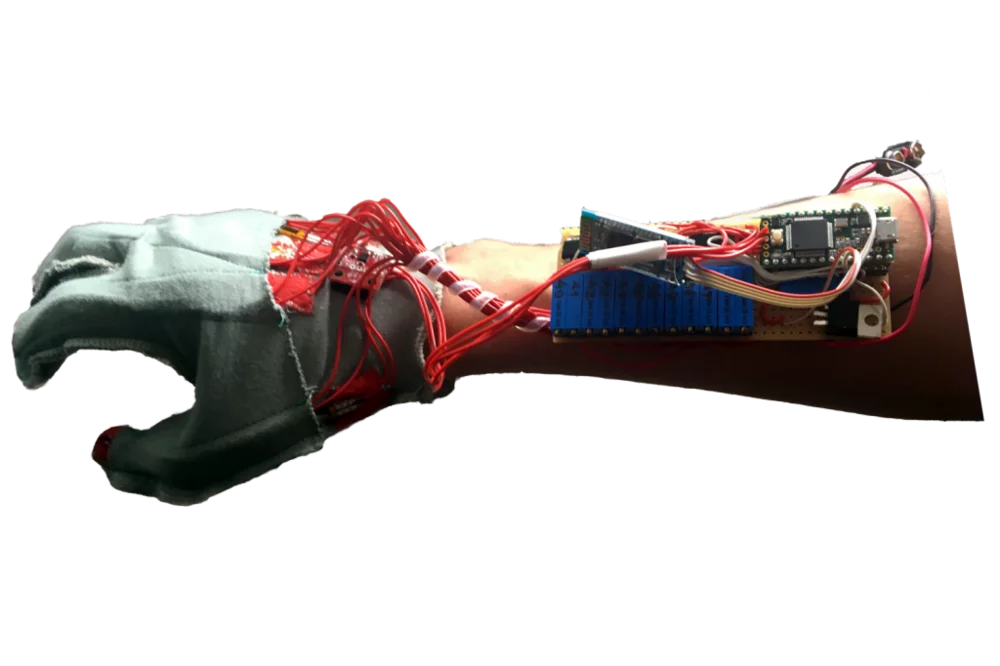

The team is also experimenting with translating spoken words into sign language, and with using a webcam to detect facial expressions and to teach the robot new gestures, a task currently done by way of an electronic glove.

Source: Project Aslan via Hubs