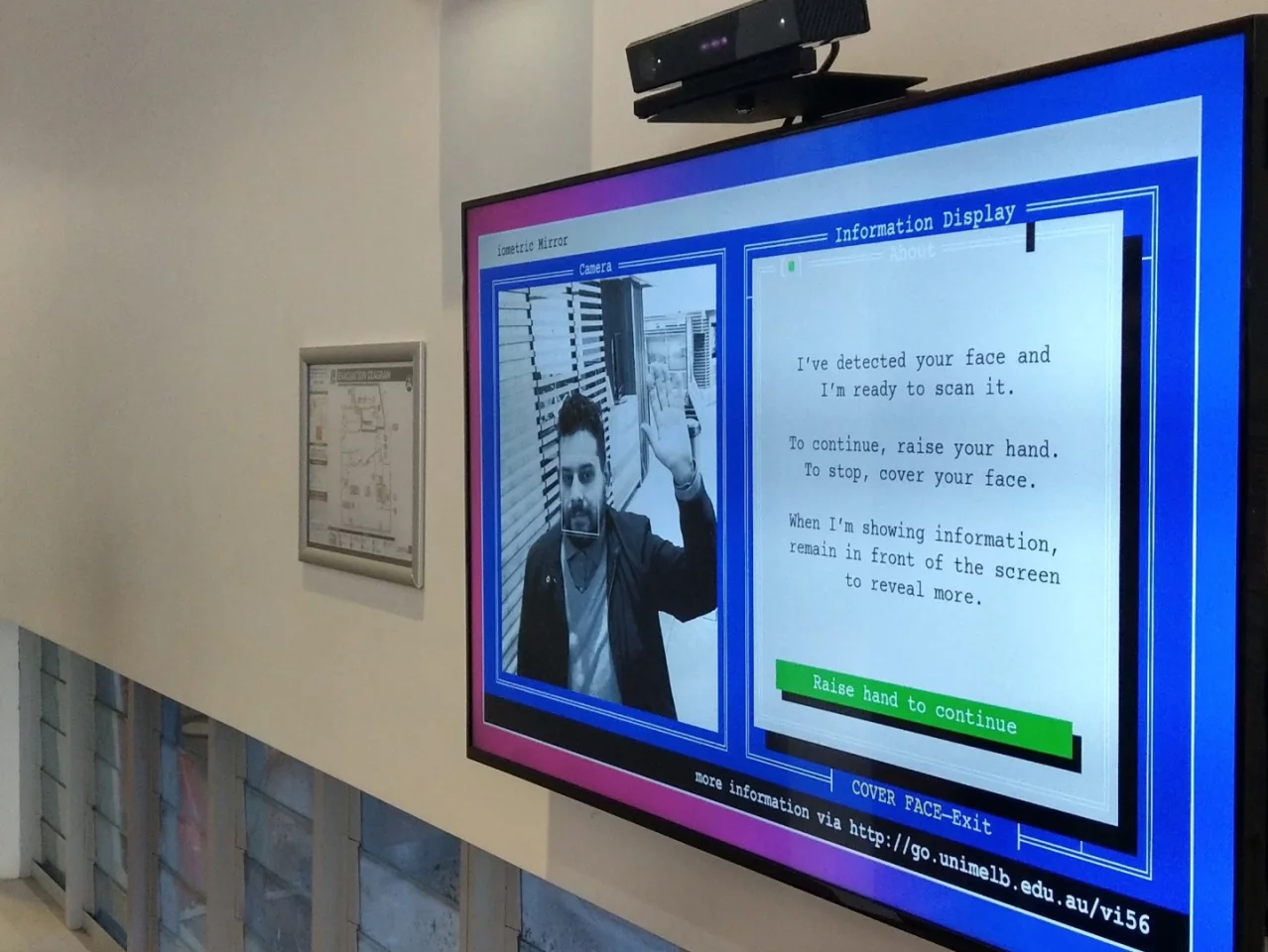

Innocuously set up in the corner of a library foyer at the University of Melbourne in Australia is a confronting social experiment tailored to highlight the fundamental flaws behind modern facial recognition technology. Called the Biometric Mirror, this interactive installation asks people to step up and have an AI application determine a variety of personality characteristics using current facial analysis systems. I tried it, and it concluded I am weird and aggressive.

The system is based on an openly available dataset of crowd-sourced personality attributes comprising several thousand facial photos that were subsequently rated by over 30,000 respondents evaluating a number of traits. As well as more objective attributes, such as gender, age and ethnicity, the system is claimed to detect a number of more abstract, subjective traits, including aggressiveness, weirdness and emotional stability.

The goal of the project is not to develop a facial recognition system that purports to reveal an objective, or even particularly accurate, analysis of an individual, but instead to expose the way these technologies can problematically amplify human bias by being based on fundamentally flawed datasets.

"What we want to raise awareness about is that if such technology is commercialized, and we already see applications like that, then that can have serious consequences," explains Niels Wouters, lead researcher on the project from the University of Melbourne. "If I consider you to be irresponsible, and I'm an insurer, why wouldn't I increase my fees?"

Wouters suggests these concerns are far from hypothetical, with this technology already being implemented around the world in a variety of ways, from police tracking citizens in both the United States and the UK, to more seemingly innocent applications, such as shopping centers collecting demographic data on their customers. And the fundamental problem behind all these systems is that although the algorithms may be technically accurate, the datasets they are using will almost always be flawed.

"I think there is an inherent risk that we will always develop wrong and flawed technology like this," says Wouters. "Looking at emotional stability, that's a psychological thing. You can't read that from someone's face. Sometimes on a Sunday morning I might look at bit emotionally unstable but that's not necessarily my psychological mindset and behavior."

Again, the concerns Wouters raises are not at all fictional. Last year it was revealed that corporate giant Unilever had already implemented a video interview system into its recruitment process that measures facial expressions to evaluate the best candidates for a given job.

Lindsey Zuloaga, director of data science at HireVue, a company whose video interviewing software uses expression recognition, suggests its technology can actually work to eliminate human bias. Zuloaga explains that HireVue's technology makes bias transparent and measurable, saying, "We can measure it, unlike the human mind, where we can't see what they're thinking or if they're systematically biased."

Wouters sees this misplaced faith in the technology as something fundamentally concerning. In his work with the Biometric Mirror he sees many people trying out the system and agreeing with the computer's results, even if they don't gel with their own perception of themselves. After all, a computer must be more accurate than an emotional, biased human, mustn't it?

"You'd be surprised how many people we hear when we interview them for our research say, 'Oh this data must be correct because it's a computer, and a computer is better than a person in making assumptions and drawing conclusions.'" Wouters explains. "And look, the algorithm is accurate, the algorithm works flawlessly, but it's the underlying dataset that is just wrong. It's a really subjective dataset. It's an objective interpretation of subjective data."

So with that in mind I stepped up to the Biometric Mirror and let it judge me.

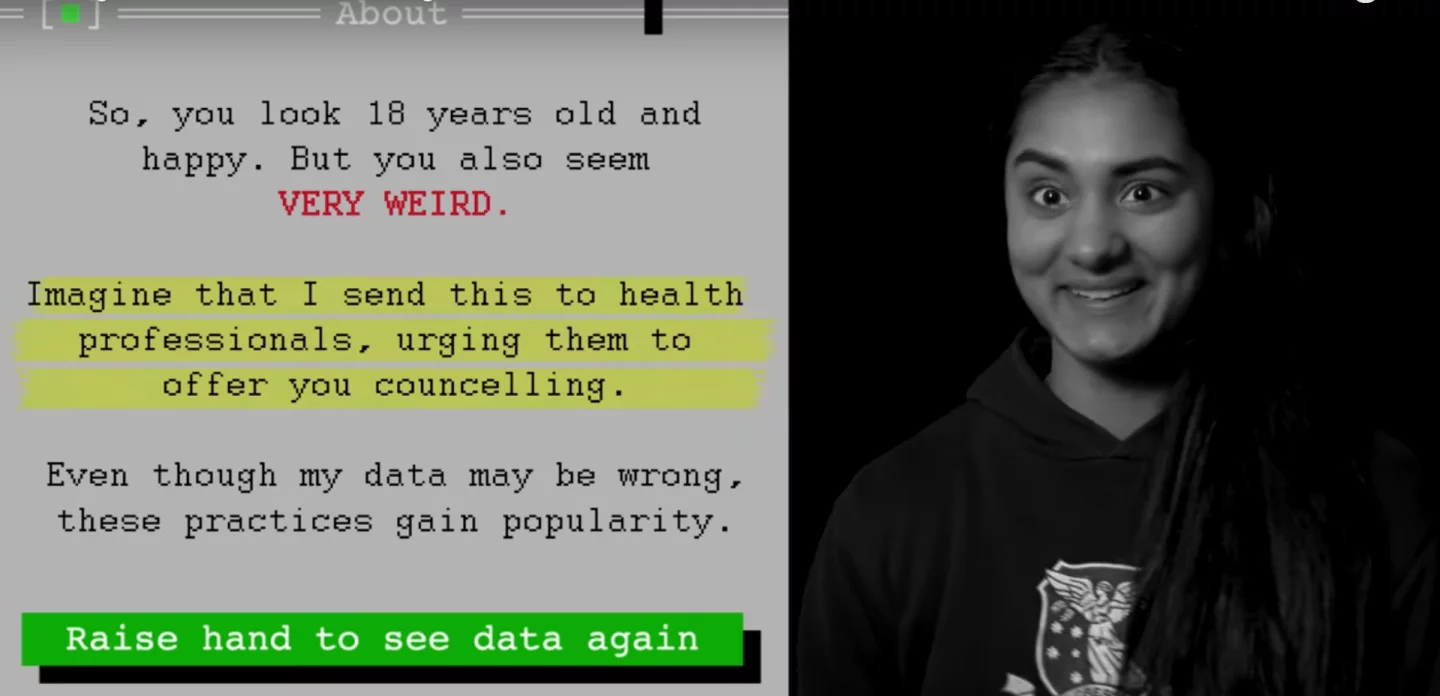

The system is programmed to detect consent with a wave of the hand, and with that greeting it begins its analysis. Each attribute is sequentially tracked as either low, medium or high, with an additional column offering a percentage of confidence in the algorithm's result. On my first run-through, I'm mystifyingly said to be 50 years old. The AI also seems to be 100 percent sure I am highly aggressive, with reasonable confidence I am also highly weird, not particularly attractive, quite unhappy, and pretty irresponsible – not exactly a huge boost to the ego so far.

I take a second run through the system, this time with my glasses removed, and suddenly I'm 18 years younger (which is closer to the mark and gels with what most humans guess), with a shift in ethnicity. This time a little more smiling makes me appear kinder and more emotionally stable, although my aggressiveness and weirdness ratings are still strong. As an example of how a human-driven dataset can intrinsically hold bias, Wouters suggests that men with beards tend to be detected as highly aggressive. Take note, hipsters!

The experience of an AI telling me what type of person I am was confronting and fascinating. The way the system amplified human bias was extraordinarily evident in some of the datapoints. With or without glasses I still seemed to be coming off as extremely aggressive. While this trait is undeniably at odds with my own personality, it starkly reminded me how certain details in one's appearance can be prejudicially interpreted.

On the other hand, as Wouters suggested, some of the system's detections did cause me to second guess my own sense of self. My levels of responsibility and happiness across both detections were reasonably low. Does the AI sense something I don't know?

Ultimately, the Biometric Mirror is a stark reminder that despite the frighteningly wide implementation of facial recognition systems around the world, this technology is still incredibly primitive. Even as the datasets grow larger it is vital to remember that many of the characteristics these systems are claimed to track are based on biased and subjective human opinions. After all, what objective measures are there for attributes such as responsibility and attractiveness? On the other hand, the system is probably spot-on in detecting my weirdness …

Anyone else whose ego is up to withstanding a few blows can try out the Biometric Mirror for themselves at the Eastern Resource Centre, University of Melbourne Parkville campus, where it's on display until early September.

More information: Biometric Mirror