As many of us know from personal experience, using a smartphone's touchscreen can be difficult when your hands are full. Smartwatches are often awkward to operate in the same situations, and voice commands may not work in noisy environments. That's why scientists from Germany's Fraunhofer Institute for Computer Graphics Research have developed a hands-free system that lets you control a phone with facial gestures, which are detected inside your ear.

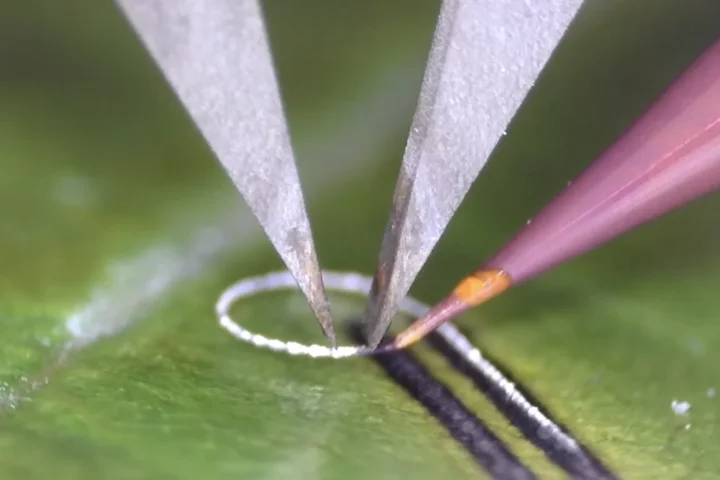

Known as EarFieldSensing (EarFS), the technology uses a sensor-equipped plug that sits in the ear canal. There, it detects tiny muscle movements and subtle changes in the canal's shape that are associated with specific facial gestures, such as smiling and winking. It can also identify whole-head gestures, like nodding.

Each gesture is assigned to a different task, such as accepting or rejecting calls, or skipping through songs in a playlist. The resulting commands are transmitted to a linked smartphone, which carries them out.

There is one challenge, however.

"The sensors cannot be interfered with by other movements of the body, such as vibrations during walking or external interferences," says Fraunhofer scientist Denys Matthies. "To solve this problem, an additional reference electrode was applied to the earlobe which records the signals coming from outside."

The researchers hope that EarFS could also be used for applications such as detecting drowsiness in drivers, or allowing patients with locked-in syndrome to communicate.

Source: Fraunhofer