Three years ago, we first heard about GelSight – an experimental new system for imaging microscopic objects. At the time, its suggested applications were in fields such as aerospace, forensics, dermatology and biometrics. Now, however, researchers at MIT and Northeastern University have found another use for it. They've incorporated it into an ultra-sensitive tactile sensor for robots.

First of all, here's how MIT's basic GelSight technology works, as described in our previous article ...

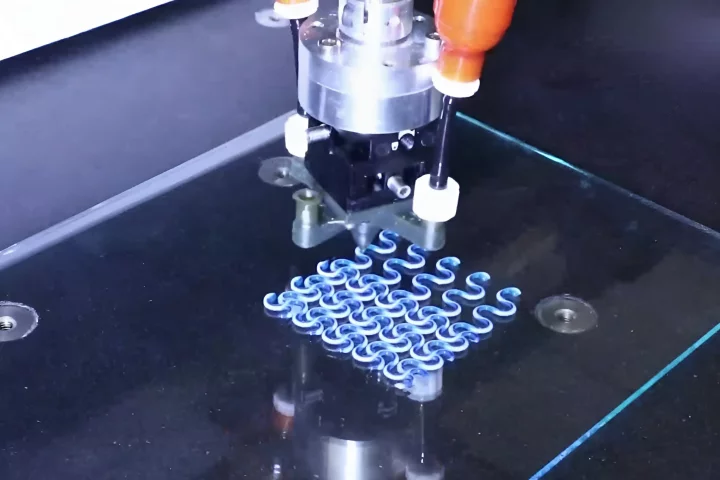

A slab of clear, synthetic rubber is initially coated on one side with a layer of metallic paint. When the painted side is then pressed against a surface, it deforms to the shape of that surface. Looking through the opposite, unpainted side of the rubber, one can see the minute contours of the surface, pressing up into the paint. Using cameras and computer algorithms, the system is able to turn those contours into 3D images that capture details less than one micrometer in depth and approximately two micrometers in width.

The paint is necessary in order to standardize the optical qualities of the surface, so that the system isn't confused by multiple colors or materials.

For the recent study, the team installed a GelSight sensor on one of the gripping surfaces of a Baxter industrial robot's two-pincher gripper. Using its own computer vision system, the robot subsequently identified a USB cable hanging loosely from a hook, and grabbed that cable's plug with its pinchers.

At that point, it used the GelSight sensor to image the USB symbol embossed on one side of the plug. This allowed it to determine the exact orientation of the plug within its gripper. To help it do so with more precision, the cube-shaped sensor used four differently colored LEDs, each one shining into the rubber from a different side. By analyzing the relative intensities of the different light colors on various parts of the USB symbol, the sensor was better able to ascertain the plug's three-dimensional positioning relative to the gripper.
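To give a rough idea of how relative light intensities can reveal 3D shape, here is a minimal Python sketch of photometric stereo, a standard technique for recovering surface orientation from images lit from several known directions. It is an illustration only, not MIT's actual algorithm. The light directions in `LIGHT_DIRS`, the function name `estimate_normals`, and the assumptions that each LED's contribution has already been separated into its own intensity image and that the painted surface reflects light evenly in all directions (a Lambertian surface) are all ours.

```python
import numpy as np

# Hypothetical unit vectors pointing toward each of the four LEDs.
# These values are assumptions for illustration, not the sensor's real geometry.
LIGHT_DIRS = np.array([
    [1.0, 0.0, 1.0],
    [-1.0, 0.0, 1.0],
    [0.0, 1.0, 1.0],
    [0.0, -1.0, 1.0],
])
LIGHT_DIRS /= np.linalg.norm(LIGHT_DIRS, axis=1, keepdims=True)


def estimate_normals(intensities):
    """Estimate per-pixel surface normals from four single-light images.

    intensities: array of shape (4, H, W), one image per LED.
    Returns an array of shape (H, W, 3) holding unit normals.
    """
    num_lights, height, width = intensities.shape
    flat = intensities.reshape(num_lights, -1)  # shape (4, H*W)

    # Lambertian model: intensity = light_direction . (albedo * normal).
    # Four lights give four equations for three unknowns per pixel,
    # so solve them all at once in the least-squares sense.
    scaled_normals, *_ = np.linalg.lstsq(LIGHT_DIRS, flat, rcond=None)

    # Dividing by the length removes the albedo and leaves a unit normal.
    lengths = np.linalg.norm(scaled_normals, axis=0)
    lengths[lengths == 0] = 1.0  # avoid dividing by zero on dark pixels
    return (scaled_normals / lengths).T.reshape(height, width, 3)
```

A surface that tilts toward one LED appears brighter in that LED's image and dimmer in the opposite one, and it is this imbalance that the least-squares step converts into a tilt direction. Integrating the resulting normals across the image would then give a height map of the embossed symbol.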

The robot then moved the plug end of the cable over to a USB socket, and successfully inserted it. When the sensor was turned off, however, the robot couldn't get the plug in, since it couldn't tell how the plug was oriented in its gripper. That's why robots typically need to have objects precisely set in place for them ahead of time, and why GelSight could allow robots to be much more adaptable when manipulating items in chaotic environments.

According to MIT, the relatively low-resolution GelSight sensor used in the experiments is still about 100 times more sensitive than a human finger. It can be seen in use in the following video.

Source: MIT