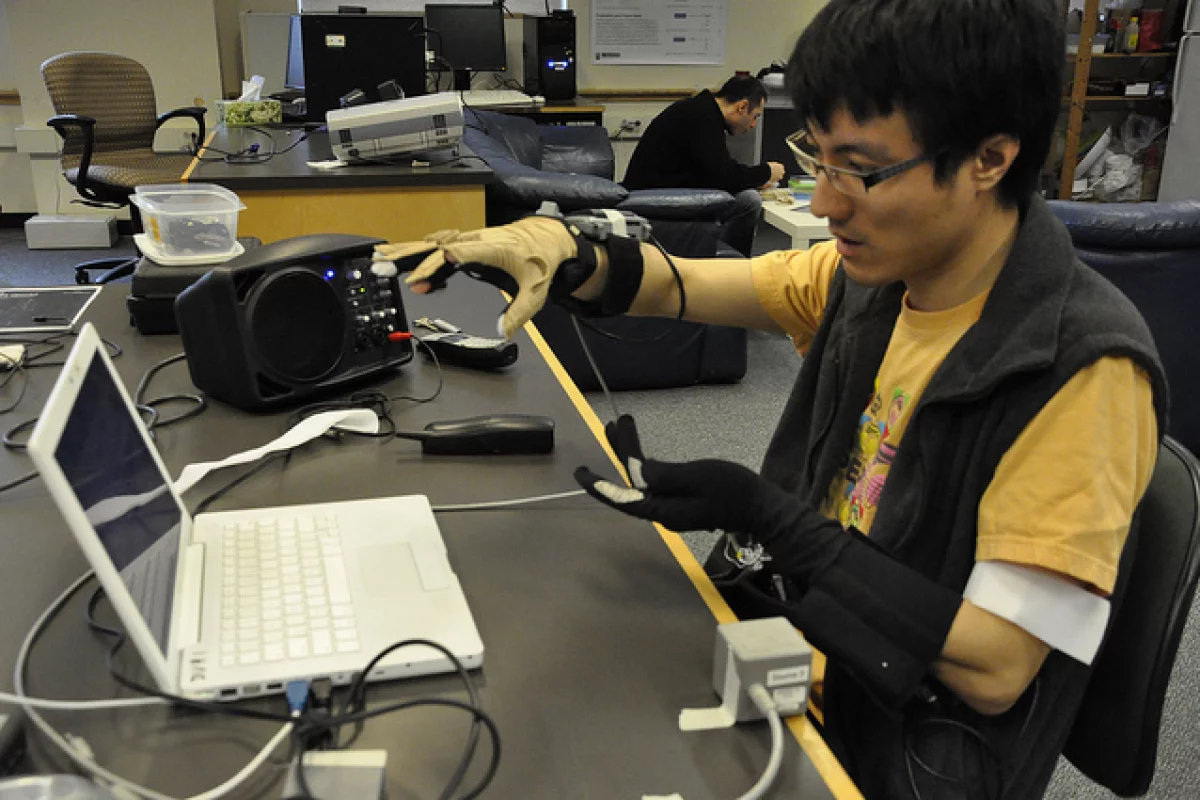

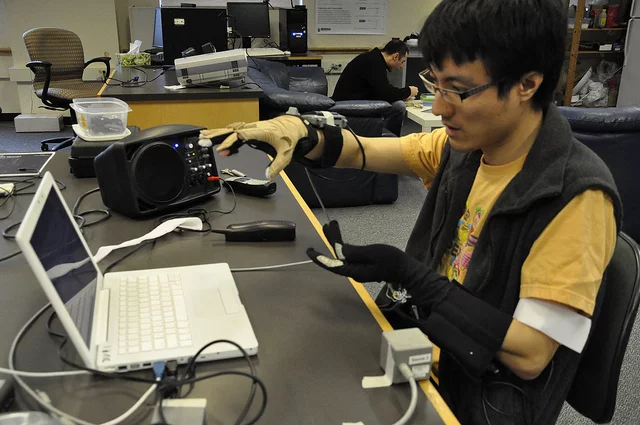

Whether it's people who can't speak, or musicians looking for a new way of expressing themselves, both may end up benefiting from an experimental new gesture-to-voice synthesizer. The system was created at the University of British Columbia, by a team led by professor of electrical and computer engineering Sidney Fels. Users just put on a pair of sensor-equipped gloves, then move their hands in the air - based on those hand movements, the synthesizer is able to create audible speech.

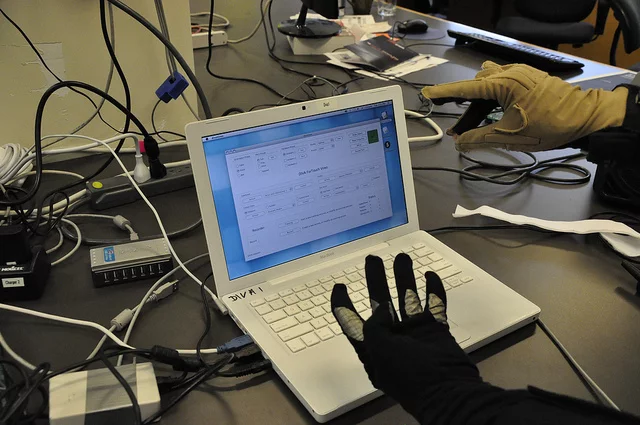

The gloves contain 3D position sensors, which are able to identify each hand's position in space, along with the gestures those hands are making. This information is transmitted to a computer, which has assigned different sounds to different glove postures.

The right-hand glove can detect bending motions, and is thus able to produce a variety of consonant sounds when the hand closes, and vowel sounds when it opens. Different consonants can be selected between by making different gestures with that hand, while vowel sounds can be controlled by its horizontal location. The glove's vertical location controls pitch.

Hard stop sounds, such as the consonants "B" and "D," are produced by gesturing with the left-hand glove.

Not only could the system allow people without the power of speech to form audible words, but it could also be used by musicians - in fact, it already has been. Seven different artists have utilized the device so far, doing things such as performing single-person duets, in which their vocal cords supply one voice while their hands (via the synthesizer) supply the other.

According to Fels, it takes about 100 hours for musicians to learn to use the system. Down the road, he believes that the hand-gesture technology could also be applied to other areas, such as the controlling of heavy machinery.

Prof. Fels presented the technology at the annual meeting of the American Association for the Advancement of Science in Vancouver, last Saturday. The video below shows the gesture-to-voice synthesizer in use.

Source: UBC