At last week's GTC developer conference, Nvidia revealed a nifty AI tool that takes a bunch of 2D photos of the same scene from different angles and almost instantly transforms them into a three-dimensional digital rendering.

The advance builds on neural radiance fields (NeRF), a technique developed by researchers at UC Berkeley, Google and UC San Diego that uses a neural network to render photorealistic 3D scenes from a small set of 2D stills taken at different viewing angles. A NeRF essentially estimates the color and light information missing from the input images and fills in the blanks between the captured viewpoints.
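To give a sense of how a NeRF turns its estimates into an image: the network is queried at points along each camera ray for a density and a color, and those samples are alpha-composited into a single pixel using the volume-rendering sum from the original NeRF paper. The Python below is a minimal sketch of only that compositing step. The function name `composite_ray` and the sample values are hypothetical, and the neural network itself is replaced by hand-picked numbers.

```python
import math


def composite_ray(sigmas, colors, deltas):
    """Alpha-composite samples along one camera ray, NeRF-style.

    sigmas: volume density at each sample (>= 0); in a real NeRF these come
            from the network, here they are supplied by hand (hypothetical).
    colors: (r, g, b) tuple predicted at each sample.
    deltas: distance between consecutive samples along the ray.
    Returns the rendered (r, g, b) color of the pixel.
    """
    pixel = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light that reaches this sample unblocked
    for sigma, rgb, delta in zip(sigmas, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        weight = transmittance * alpha          # this sample's contribution
        for c in range(3):
            pixel[c] += weight * rgb[c]
        transmittance *= 1.0 - alpha  # same as multiplying by exp(-sigma * delta)
    return tuple(pixel)


# A ray that crosses empty space, then hits a dense red surface in front of a green one.
print(composite_ray([0.0, 0.0, 50.0, 50.0],
                    [(0, 0, 1), (0, 0, 1), (1, 0, 0), (0, 1, 0)],
                    [0.1] * 4))
```

Because the empty samples have zero density, they contribute nothing, and the dense red sample absorbs almost all of the remaining light, so the pixel comes out nearly pure red. Training a NeRF amounts to adjusting the network until pixels rendered this way match the input photos.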

Though early NeRF models could render scenes in a matter of minutes, training the neural networks took considerably longer, often hours. Nvidia says its Instant NeRF cuts both training and rendering time "by several orders of magnitude." It can train the model on a few dozen still images (together with data on the camera angles they were taken from) in just a few seconds, then render the resulting 3D scene at 1,920 x 1,080 pixels within tens of milliseconds.

The speed-up comes from a new input encoding method called multi-resolution hash grid encoding, which stores learned features in small hash tables at several grid resolutions. The method is optimized for Nvidia GPUs and allows for "high-quality results using a tiny neural network that runs rapidly."
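The Instant NGP paper from Nvidia's researchers describes the idea roughly as follows: the scene is covered by several grids of increasing resolution, each grid corner is hashed into a small table of trainable feature vectors, and a 3D point's features are trilinearly interpolated from the eight surrounding corners at every level, then concatenated and fed to the tiny network. The Python below is a minimal, untrained sketch under those assumptions. The class name `HashGridEncoding` and its default parameters are illustrative only, every level is hashed (the paper indexes coarse levels directly when they fit in the table), and neither training nor GPU optimization is shown.

```python
import math
import random

# Spatial-hash primes as given in the Instant NGP paper (first dimension uses 1).
PRIMES = (1, 2654435761, 805459861)


class HashGridEncoding:
    """Toy multi-resolution hash encoding for a 3D point in [0, 1]^3 (illustrative)."""

    def __init__(self, levels=4, table_size=2**14, features=2,
                 min_res=16, max_res=256, seed=0):
        # Assumes levels >= 2 so the per-level growth factor is defined.
        rng = random.Random(seed)
        growth = math.exp((math.log(max_res) - math.log(min_res)) / (levels - 1))
        # Grid resolution grows geometrically from min_res toward max_res.
        self.resolutions = [math.floor(min_res * growth ** l) for l in range(levels)]
        self.table_size = table_size
        # One table of feature vectors per level; in a real system these are
        # trained by gradient descent, here they are only randomly initialized.
        self.tables = [[[rng.uniform(-1e-4, 1e-4) for _ in range(features)]
                        for _ in range(table_size)]
                       for _ in range(levels)]

    def _hash(self, corner):
        # XOR of coordinate * prime, reduced modulo the table size.
        h = 0
        for coord, prime in zip(corner, PRIMES):
            h ^= coord * prime
        return h % self.table_size

    def encode(self, point):
        """Return the concatenated per-level feature vector for a 3D point."""
        out = []
        for res, table in zip(self.resolutions, self.tables):
            scaled = [p * res for p in point]
            base = [math.floor(s) for s in scaled]
            frac = [s - b for s, b in zip(scaled, base)]
            feat = [0.0] * len(table[0])
            # Trilinearly interpolate the features stored at the 8 cell corners.
            for dx in (0, 1):
                for dy in (0, 1):
                    for dz in (0, 1):
                        offset = (dx, dy, dz)
                        weight = 1.0
                        for f, o in zip(frac, offset):
                            weight *= f if o else 1.0 - f
                        corner = [b + o for b, o in zip(base, offset)]
                        entry = table[self._hash(corner)]
                        for i, value in enumerate(entry):
                            feat[i] += weight * value
            out.extend(feat)
        return out


encoder = HashGridEncoding()
print(len(encoder.encode((0.3, 0.5, 0.7))))  # levels * features values
```

The appeal of this design is that most of the scene's detail lives in cheap table lookups rather than in network weights, which is why the network that reads these features can stay small and fast.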

"If traditional 3D representations like polygonal meshes are akin to vector images, NeRFs are like bitmap images: they densely capture the way light radiates from an object or within a scene," said Nvidia's VP of graphics research, David Luebke. "In that sense, Instant NeRF could be as important to 3D as digital cameras and JPEG compression have been to 2D photography – vastly increasing the speed, ease and reach of 3D capture and sharing."

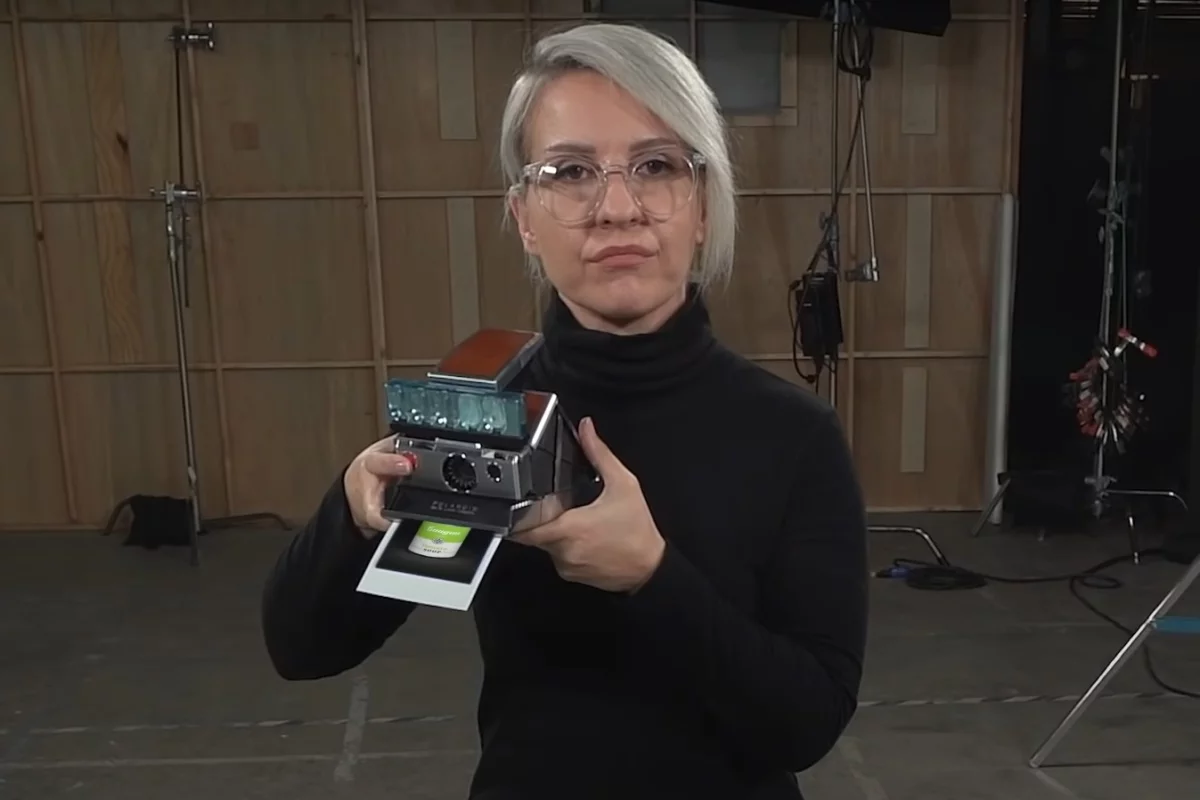

The company suggests that the technology could find use in training robots and self-driving cars to better understand objects in the real world, as well as for virtual reality content creation, videoconferencing, digital mapping, architecture and entertainment.

Source: Nvidia