To see and then grasp objects, robots typically use depth-sensing cameras like the Microsoft Kinect. While such cameras can be thwarted by transparent or shiny objects, scientists at Carnegie Mellon University have developed a workaround.

Depth-sensing cameras function by shining infrared laser beams onto an object, then measuring how long it takes for the light to reflect off the contours of that object and back to sensors on the camera.
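As a rough illustration of that timing principle, the sketch below converts a round-trip travel time into a distance. The function name and the example value are hypothetical, chosen only for illustration; real cameras handle this in hardware and must also deal with noise and calibration.

```python
# Minimal sketch of time-of-flight ranging. Not taken from any camera's API.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way distance in meters for a measured round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The light travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


if __name__ == "__main__":
    # Hypothetical reading: a 20-nanosecond round trip.
    print(f"{distance_from_round_trip(20e-9):.3f} m")
```

A round trip of 20 nanoseconds works out to roughly 3 meters, which shows how fine the timing has to be, and why light that passes through or scatters off an object leaves the sensor with little to measure.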

While this system works well enough on relatively dull opaque objects, it struggles with transparent items, which let much of the light pass through, and with shiny objects, which scatter the reflected light. That's where the Carnegie Mellon system comes in: it uses a color optical camera that also functions as a depth-sensing camera.

The setup uses a machine learning-based algorithm that was trained on both depth-sensing and color images of the same opaque objects. By comparing many pairs of the two image types, the algorithm learned to infer the three-dimensional shape of objects in color images, even if those objects are transparent or shiny.

Additionally, although only a small amount of depth data can be ascertained by directly laser-scanning such objects, the data that is gathered is used to boost the system's accuracy.
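One simple way to picture how sparse laser readings could complement depth inferred from color is sketched below. This is a deliberately simplified stand-in, not the Carnegie Mellon method: it assumes a hypothetical grid where missing laser readings are marked `None`, keeps any direct measurement, and falls back to the inferred value everywhere else.

```python
# Simplified illustration only: the names and data layout are assumptions.
from typing import List, Optional

MeasuredDepth = List[List[Optional[float]]]  # None marks a missing laser reading
PredictedDepth = List[List[float]]           # depth inferred from the color image


def fuse_depth(measured: MeasuredDepth, predicted: PredictedDepth) -> List[List[float]]:
    """Prefer direct measurements; fill gaps with the inferred depth."""
    if len(measured) != len(predicted):
        raise ValueError("depth maps must have the same number of rows")
    fused = []
    for measured_row, predicted_row in zip(measured, predicted):
        if len(measured_row) != len(predicted_row):
            raise ValueError("depth maps must have the same number of columns")
        fused.append([
            m if m is not None else p
            for m, p in zip(measured_row, predicted_row)
        ])
    return fused


if __name__ == "__main__":
    # Hypothetical 2x2 scene in meters: only one pixel returned a laser reading.
    measured = [[None, 0.5], [None, None]]
    predicted = [[0.6, 0.55], [0.7, 0.8]]
    print(fuse_depth(measured, predicted))
```

A learned system can use the sparse measurements in more sophisticated ways, for instance as an additional input to the model, but the sketch shows why even a handful of reliable readings is worth keeping.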

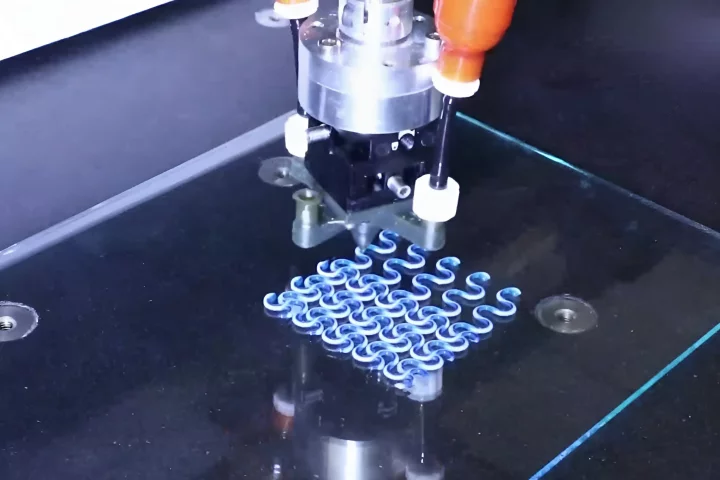

In tests conducted so far, a robot was far better at grasping transparent and shiny objects when using the new technology than when using only a standard depth-sensing camera.

"We do sometimes miss, but for the most part it did a pretty good job, much better than any previous system for grasping transparent or reflective objects," says Asst. Prof. David Held.

Source: Carnegie Mellon University