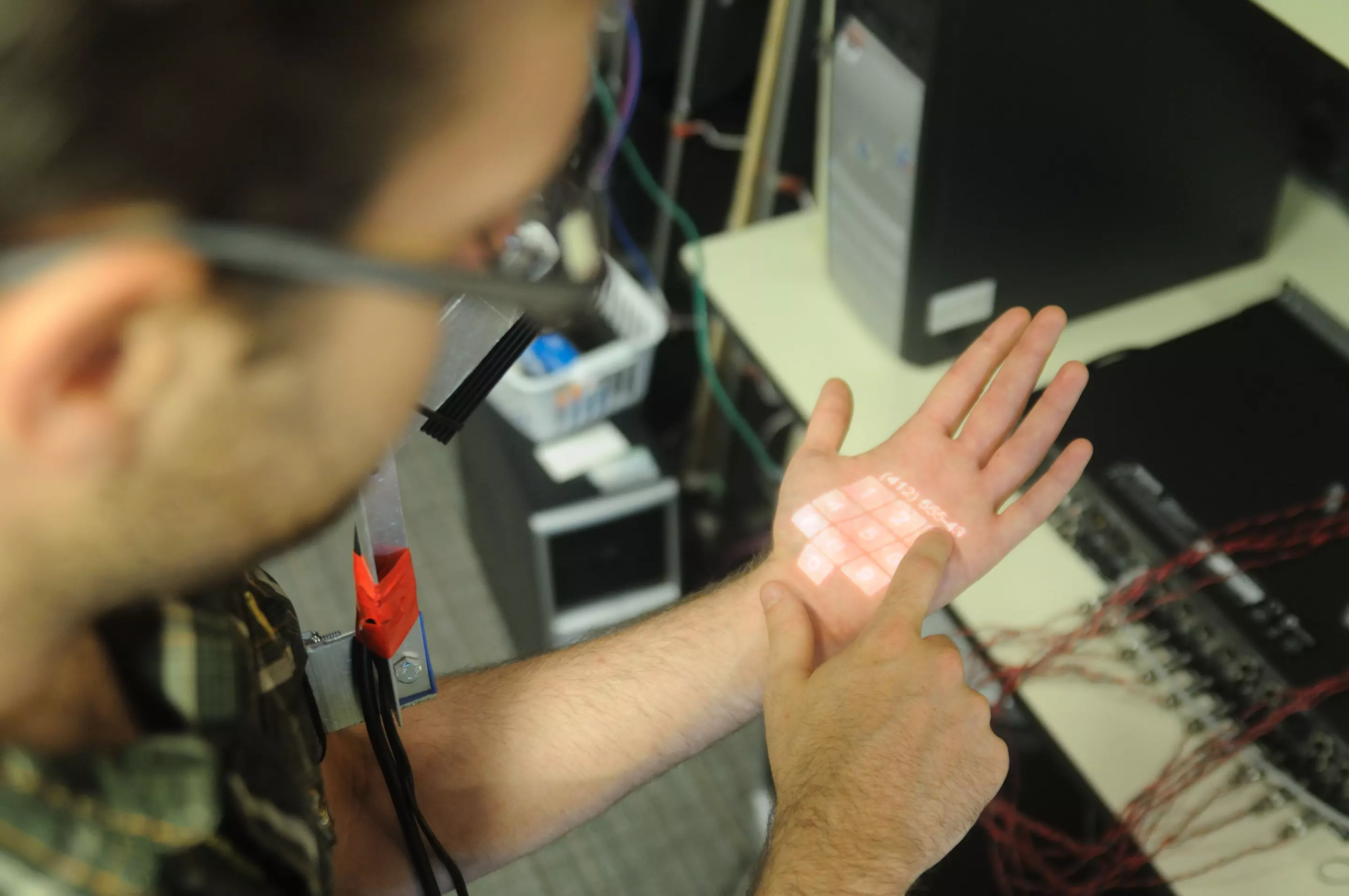

Always thought your skin was more than just a thing to stop your insides falling out? Well, you were right. Chris Harrison has developed Skinput, a way to turn your skin into a touch screen or your fingers into the buttons of an MP3 controller. Harrison says that as electronics get smaller they become easier to wear on our bodies, but the monitor and keypad or keyboard still have to be big enough for us to operate. That can defeat the purpose of a small device, but with clever acoustic and impact-sensing software, Harrison and his team can give your skin the same functionality as a keypad. Add a pico projector attached to an armband, and your wrist becomes a touch screen.

Harrison says he has previously used tables and walls as touch screens. He turned to the surface of the body because technology is now small enough to carry everywhere, and a suitable surface is not always at hand.

A third-year PhD student in the Human-Computer Interaction Institute at Carnegie Mellon University, Harrison says we have roughly two square meters of external surface area, and most of it is easily accessible by our hands (e.g., arms, upper legs, torso).

He has taken the myriad sounds our body makes when a finger taps different areas of, say, an arm, a hand or other fingers, and mapped each sound to a computer function.

One beauty of this type of functionality is that we can "operate" our body without using our eyes: we can snap our fingers, touch the tip of our nose or pull an ear without having to look. This sense is called proprioception.

Harrison says this ability is great for operating equipment on the move: changing tracks on an MP3 player while out jogging, answering a phone call or starting a stopwatch. Not to mention that using your hand as a calculator means you really can count on your fingers.

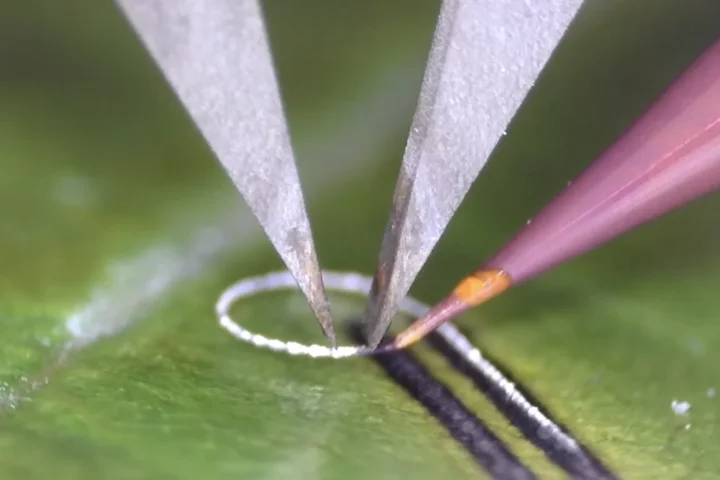

The team has created its own bio-acoustic sensing array that is worn on the arm, meaning that no electronics are attached to the skin. Harrison explains that when a finger taps the body, bone density, soft tissue, joint proximity and similar factors affect the sound the tap makes. The software he has created recognizes these different acoustic patterns and interprets them as function commands.
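To make the idea of recognizing acoustic patterns and turning them into commands more concrete, here is a rough sketch in Python. It is not the team's software: the article does not say which classifier Skinput uses, so this swaps in a simple nearest-centroid match, and the tap locations, feature values and command names are all invented for illustration.

```python
import math

# Hypothetical training data: for each tap location, a few example feature
# vectors (imagine energy in three frequency bands). All numbers are invented.
TRAINING = {
    "wrist": [[0.9, 0.2, 0.1], [0.8, 0.3, 0.1]],
    "forearm": [[0.3, 0.8, 0.2], [0.2, 0.9, 0.3]],
    "palm": [[0.1, 0.2, 0.9], [0.2, 0.1, 0.8]],
}

# Hypothetical mapping from tap location to a device command.
COMMANDS = {
    "wrist": "play_pause",
    "forearm": "next_track",
    "palm": "previous_track",
}


def centroid(vectors):
    """Average the example vectors for one location, component by component."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


# One averaged "signature" per tap location.
CENTROIDS = {loc: centroid(examples) for loc, examples in TRAINING.items()}


def classify_tap(features):
    """Return the location whose signature is closest to the new tap."""
    return min(CENTROIDS, key=lambda loc: math.dist(features, CENTROIDS[loc]))


def command_for_tap(features):
    """Translate a tap's feature vector into a command name."""
    return COMMANDS[classify_tap(features)]


if __name__ == "__main__":
    print(command_for_tap([0.85, 0.25, 0.1]))
```

A real system would extract its features from the armband's sensor signals and would need far more training examples per location; the sketch only shows the shape of the pipeline, from a tap's acoustic signature to a location to a command.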

He says he has achieved accuracies as high as 95.5 percent, with enough buttons to control many devices. His video even shows someone playing Tetris using only their fingertips as a controller.

Harrison's research paper, "Skinput: Appropriating the Body as an Input Surface," co-authored by Desney Tan and Dan Morris from Microsoft Research, will appear in Proceedings of the 28th Annual SIGCHI Conference on Human Factors in Computing Systems (Atlanta, Georgia) in April.

Via Popular Science