Skype has been around for ten years now. Once a science fiction dream, the video calling service has 300 million users making two billion minutes of video calls a day. The only problem is, most of them can't look each other in the eye. Claudia Kuster, a doctoral student at the Computer Graphics Laboratory at ETH Zurich, and her team are developing a way to bring eye contact to Skype and similar video services with software that alters the caller's on-screen image to give the illusion that they’re looking straight at the camera.

Making a video call from your computer may be incredibly convenient, but it still lacks that Gerry Anderson quality because it always seems as if the other person is staring at your chest or over your shoulder instead of making eye contact. That’s because the webcam is usually sitting over the screen, meaning it's transmitting an image of you looking at the screen, not the camera.

It is possible to restore eye contact, but currently this involves complex and expensive systems using mirrors or multiple cameras and special software. Kuster's aim is to develop a solution that uses standard, or at least simpler, equipment and operates in real time.

The new system, which is still under development, currently uses a Kinect to act as a depth sensor. Other than that, the computer is fairly standard. “The software can be user adjusted in just a few simple steps and is very robust,” says Kuster.

The clever bit isn’t in how the system takes an image, but in what it does with it. In current gaze-correction systems, the entire image is tilted. This requires a lot of computing power and is prone to generating large gaps in the image. If you’ve ever used one of those insert-a-background camera applications without a green screen, the patchy results will be familiar.
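To see why tilting a whole image tends to open up gaps, here is a toy sketch in Python. It is not taken from any real gaze-correction system: the function name `tilt_scanline`, the orthographic projection, and the scene (a person 50 units away in front of a wall 150 units away) are all assumptions chosen for illustration. It rotates a single row of pixels by a few degrees and records which output pixels never receive any data.

```python
import numpy as np

def tilt_scanline(depth, angle_deg, pivot_depth):
    """Forward-warp one row of pixels after rotating the scene.

    Returns a boolean array that is True where an output pixel received data.
    """
    width = depth.shape[0]
    x = np.arange(width, dtype=float)
    cx = (width - 1) / 2.0
    theta = np.radians(angle_deg)
    # Rotate each (x, depth) point about a pivot at the image centre and
    # pivot_depth, then project orthographically back onto the pixel row.
    new_x = cx + (x - cx) * np.cos(theta) + (depth - pivot_depth) * np.sin(theta)
    target = np.rint(new_x).astype(int)
    inside = (target >= 0) & (target < width)
    filled = np.zeros(width, dtype=bool)
    filled[target[inside]] = True
    return filled

# Hypothetical toy scene: a person in the middle, a wall behind them.
depth = np.full(64, 150.0)
depth[20:44] = 50.0

holes_untilted = int((~tilt_scanline(depth, 0.0, 50.0)).sum())
holes_tilted = int((~tilt_scanline(depth, 10.0, 50.0)).sum())
print(holes_untilted, holes_tilted)
```

With no rotation every pixel lands where it started, so nothing is missing. Once the scene is rotated, the wall shifts further than the person, and the strip of wall that was hidden behind them has no pixels to fill it.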

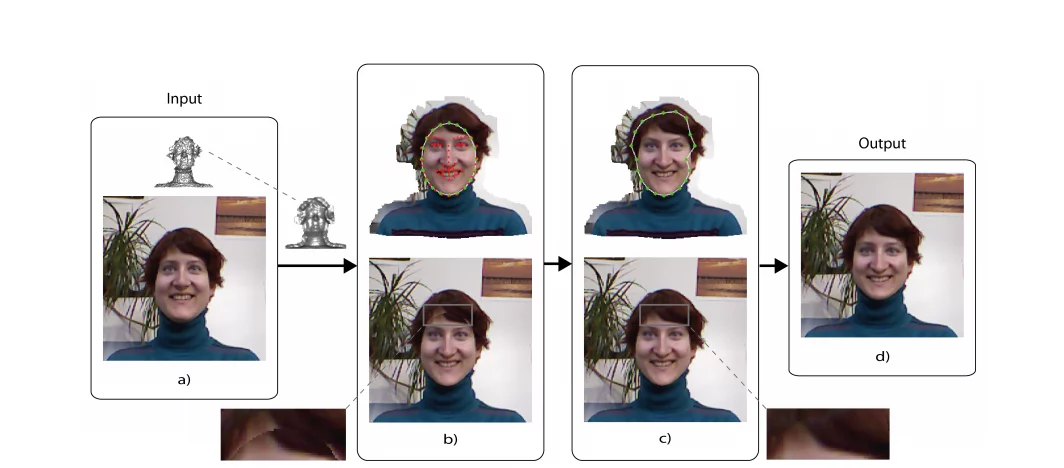

Instead of shifting the entire image, Kuster’s system only deals with the foreground. The Kinect builds up a depth map of the image and isolates the person in it with a border area around it that is used to blend the person into the background. The software then plots a coordinate mask onto the person’s features and calibrates it. The system can then tilt the face to the correct angle to make it look as if eye contact has been made.
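The idea of isolating the person by depth and keeping a border for blending can be sketched as follows. This is a minimal illustration under stated assumptions, not the ETH team's code: the names `foreground_alpha` and `composite`, the fixed depth cut-off `max_depth`, the convention that a depth of 0 means "no reading", and the box-blur softening of the edge are all hypothetical choices.

```python
import numpy as np

def foreground_alpha(depth, max_depth, border=2):
    """Return a soft matte in [0, 1]: 1 on the person, 0 on the background."""
    # Hard mask: valid pixels closer than max_depth count as foreground.
    # Assumption: a depth of 0 marks pixels with no sensor reading.
    alpha = ((depth > 0) & (depth < max_depth)).astype(float)
    rows, cols = alpha.shape
    # Soften the edge with repeated 3x3 box averaging; each pass widens
    # the transition band around the silhouette by about one pixel.
    for _ in range(border):
        padded = np.pad(alpha, 1, mode="edge")
        alpha = sum(
            padded[dy:dy + rows, dx:dx + cols]
            for dy in range(3)
            for dx in range(3)
        ) / 9.0
    return alpha

def composite(foreground, background, alpha):
    """Blend two colour images (H, W, 3) using a per-pixel matte (H, W)."""
    a = alpha[..., None]
    return a * foreground + (1.0 - a) * background

# Hypothetical 12x12 depth map: wall at 3.0 m, person at 1.0 m in the centre.
depth_map = np.full((12, 12), 3.0)
depth_map[3:9, 3:9] = 1.0
matte = foreground_alpha(depth_map, max_depth=2.0, border=2)
blended = composite(np.ones((12, 12, 3)), np.zeros((12, 12, 3)), matte)
```

The matte is fully opaque well inside the silhouette, fully transparent far from it, and fractional in the border band, which is what lets a modified foreground fade into the untouched background.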

The next step is to extract the face from the image using a state-of-the-art face tracker. This computes 66 feature points over the face and uses these to alter the tilted image so that it is consistent and synchronizes with the person’s movements. The image is then pasted back onto the person’s image and blended in, so that things like hairlines and shadows match.
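One way to picture how a set of tracked feature points could keep the corrected face lined up with the original frame is to fit a transform that maps one set of points onto the other. The sketch below uses a least-squares 2D affine fit, which is an assumption made for illustration and not necessarily what Kuster's system does. The function names `fit_affine` and `apply_affine` and the synthetic point data are hypothetical.

```python
import numpy as np

def fit_affine(src, dst):
    """Least-squares 2D affine map taking src points (N, 2) onto dst points (N, 2)."""
    # Append a column of ones so the translation is solved alongside
    # the 2x2 linear part; the result M has shape (3, 2).
    design = np.hstack([src, np.ones((src.shape[0], 1))])
    M, *_ = np.linalg.lstsq(design, dst, rcond=None)
    return M

def apply_affine(M, pts):
    """Apply a (3, 2) affine matrix to points of shape (N, 2)."""
    return np.hstack([pts, np.ones((pts.shape[0], 1))]) @ M

# Synthetic stand-in for 66 tracked feature points (not real tracker output).
rng = np.random.default_rng(0)
tilted_pts = rng.uniform(0.0, 100.0, size=(66, 2))

# Hypothetical ground-truth motion: small rotation, slight scale, a shift.
angle = np.radians(5.0)
linear = 1.02 * np.array([[np.cos(angle), -np.sin(angle)],
                          [np.sin(angle),  np.cos(angle)]])
shift = np.array([4.0, -2.5])
original_pts = tilted_pts @ linear.T + shift

M = fit_affine(tilted_pts, original_pts)
aligned = apply_affine(M, tilted_pts)
```

Re-fitting a transform like this on every frame would keep the pasted face following the person's head movements. A real system would also have to warp the pixels themselves and blend the seam.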

As it does so, the software adjusts for lighting, matches colors, handles two faces at once and deals with a variety of face types and hairstyles. It can even compensate for things like cups moving into the picture, though with less success. However, it can’t handle spectacles at this stage, which is a pity.

Kuster and her team hope to develop software that will work with standard cameras not only in computers, but also tablets and smartphones. The eventual goal is to turn it into a Skype plug-in.

Source: ETH