The concept cars of the 2017 Consumer Electronics Show are all about supporting their drivers and passengers with welcoming, cutting-edge technology. Following the Chrysler Portal, the new Toyota Concept-i is another "inside-out" concept that wraps driver and passengers in an intuitive interface. It's driven by artificial intelligence (AI) and brought to life with the dashboard-dwelling personal assistant "Yui" (yu-ee). Toyota believes the interface could lay the groundwork for a safer, friendlier connected car.

Designed by Toyota's CALTY Design Research center in California, the Concept-i appears to be written from the same "eye-catchingly futuristic" script as the FCV Plus, albeit with more curves and smoothed edges. It tries a bit too hard to stand out, in our opinion, but its intentions are good. It serves as a warm, welcoming presence that attends to a person's needs like no car before it. Toward this end, the powerful AI assists with vehicle operation, navigation, critical information delivery, and driver mood and alertness monitoring.

"At Toyota, we recognize that the important question isn't whether future vehicles will be equipped with automated or connected technologies," says Bob Carter, Toyota's senior vice president of automotive operations. "It is the experience of the people who engage with those vehicles. Thanks to Concept-i and the power of artificial intelligence, we think the future is a vehicle that can engage with people in return."

Instead of using technology as a starting point in and of itself, as is certainly tempting to do with a CES concept, Toyota starts with the user experience (UX) and looks at how technology can improve that experience and create a more meaningful bond between person and car. Like other in-vehicle personal-assistant concepts, Toyota's Yui learns the driver's preferences, moods and needs and reacts accordingly. It monitors the driver's alertness and emotions using biometric hardware, helping it identify when to switch between manual and automated driving modes.

This last factor will be crucial for Toyota moving forward. The company believes that a Yui-like interface could be the key to keeping drivers safely engaged during Level 2 partially automated driving, in which the car can handle acceleration and steering but the driver must keep monitoring the road and be prepared to take over full control at any time.

Automakers are concerned about how human error will affect such partial-automation systems. They worry that driver attentiveness will drop off precipitously, whether deliberately, as the driver turns to other tasks like reading or text messaging, or inadvertently. There's no simple answer to the question of whether a human driver will remain alert and ready to take over when a Level 2 system requires it.

Toyota hopes a Yui-like interface might be able to keep drivers engaged during Level 2 automated driving, serving as a secondary task that keeps them alert and focused on the car. Such an interface could talk naturally to the driver, provide audible or visual alerts regularly, and otherwise keep the driver's attention focused.

"Concept-i seamlessly monitors driver attention and road conditions, with the goal of increasing automated driving support as necessary to buttress driver engagement or to help navigate dangerous driving conditions," Toyota explains.

At CES, Toyota expressed uncertainty as to whether this strategy will work well enough to ensure the level of safety it demands, but it plans to develop, research and evaluate the technology over the coming years.

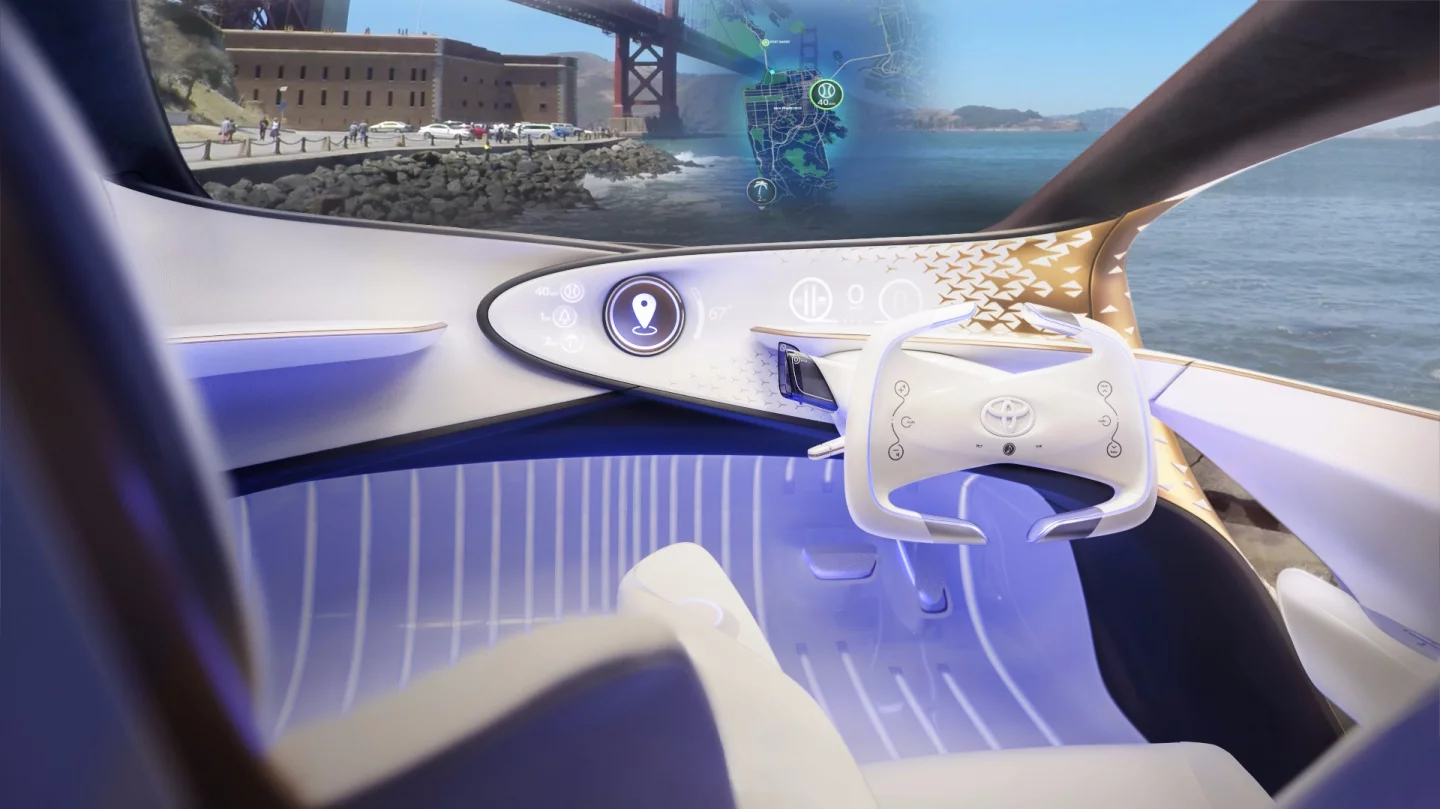

With this type of on-road safety and driver engagement in mind, the Concept-i interface dispenses with the convention of centrally gathered information displayed on the dashboard, letting the information flow to relevant areas of the vehicle. The graphic representation of Yui calls the center of the dashboard home, but light, sound, touch and text sweep through the interior and over the exterior to communicate with both occupants and the surrounding environment.

A large, windshield-spanning head-up display serves up key information within the driver's gaze. "Manual" and "automated" driving modes are distinguished by different-colored lights in the footwells, as well as by digital text on the hood. A rear projector system casts images onto the seat pillars to reduce blind spots, while the rear of the vehicle provides signals and warnings to trailing vehicles.

As is probably obvious, the Concept-i is more a jumping-off point for research into the future of AI-driven vehicle technologies than a preview of any upcoming vehicle.

Source: Toyota