We've seen various head-mounted wearables, such as the Motorola HC1, Golden-i and the AITT system, which are designed to give industrial workers or military personnel a helping hand in carrying out highly specialized tasks. But what about elderly or disabled people who struggle with everyday tasks? That's the niche a pair of smart glasses developed through the "Adaptive and Mobile Action Assistance in Daily Living Activities" (ADAMAAS) project is intended to fill.

The ADAMAAS glasses are designed to determine what the wearer is doing, such as cooking a meal, and not only provide context-appropriate assistance in the form of text, visuals or avatars on a virtual plane in the wearer's field of view, but also react when a mistake is made. The goal is to allow wearers to continue working on a task while receiving instructions on how to do it better, with mistakes corrected as they happen.

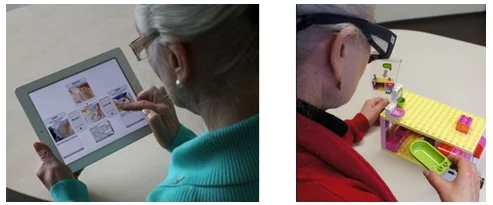

Unlike other smart glasses, such as Google Glass, the ADAMAAS glasses are particularly targeted at elderly and disabled people, and will ultimately provide directions for basic tasks, like how to bake a cake or brew a pot of coffee, as well as more complex tasks, such as fixing a bicycle or practicing yoga.

The ADAMAAS project is being conducted at the Cluster of Excellence Cognitive Interactive Technology (CITEC) at Bielefeld University in Germany. The group recently received €1.2 million (US$1.3 million) in funding from the German Federal Ministry for Education to further development of the glasses.

“In this project, different technologies are being combined, including memory research, eye tracking and vital parameter measurements (such as pulse or heart rate), object and action recognition (Computer Vision), as well as Augmented Reality (AR) with modern diagnostics and corrective intervention techniques,” explains CITEC researcher Thomas Schack.

It's a different approach to the wearable space, taking a diagnostic system that is usually stationary and making it mobile, while also integrating monitoring technology that can react to the user's actions and provide individualized support in real time. This could make a real difference for people who face challenges with everyday tasks but who want to remain independent.

Source: Bielefeld University