Over the past 12 months we've witnessed an explosion of AI-generated art, from machines composing pop music to machines writing film screenplays. Here is a rundown of some of the most interesting, and amusing, developments in computer-generated creativity.

The AI sitcom

2016 kicked off with a bang of surreal absurdity that rivaled the best of David Lynch in sheer weirdness. Software developer and cartoonist Andy Herd wondered what would happen if he tasked an AI with writing new episodes of the TV sitcom Friends. Using Google's open source machine-learning toolbox, TensorFlow, Herd fed the system every script from nine seasons of the show. The results mostly bordered on gibberish, but Herd managed to isolate several "scenes" that came close to a sublime form of inanity, placing Chandler "in a muffin" and having Monica randomly yell out "Chicken Bob!" for some unknown yet oddly perfect reason.

The AI movie trailer

What started as a promotional gimmick ultimately turned into a fascinating commentary on the generic nature of modern movie trailers when 20th Century Fox recruited IBM's Watson supercomputer to generate a trailer for its AI-influenced thriller Morgan. The IBM researchers trained Watson by feeding it over 1,000 trailers, helping it learn the general tone and pace of a successful trailer. Watson then processed the entire feature-length film and selected six minutes of footage that it determined were the prime moments a film trailer should focus on.
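To make the selection step concrete, here is a minimal sketch of how scored scenes might be whittled down to roughly six minutes of footage. It is not IBM's method: the `Scene` class, the `score` field and the greedy `pick_scenes` function are all hypothetical, and the sketch simply assumes some earlier model has already rated each scene for how trailer-worthy it is.

```python
from dataclasses import dataclass


@dataclass
class Scene:
    start: float     # seconds from the start of the film
    duration: float  # length of the scene in seconds
    score: float     # hypothetical "trailer-worthiness" rating, higher is better


def pick_scenes(scenes, budget_seconds=360.0):
    """Greedily keep the highest-scoring scenes that fit within the time budget.

    This is an illustrative heuristic, not a reconstruction of Watson.
    The chosen scenes are returned in the order they appear in the film.
    """
    chosen = []
    remaining = budget_seconds
    for scene in sorted(scenes, key=lambda s: s.score, reverse=True):
        if scene.duration <= remaining:
            chosen.append(scene)
            remaining -= scene.duration
    return sorted(chosen, key=lambda s: s.start)


if __name__ == "__main__":
    film = [
        Scene(start=0, duration=200, score=0.9),
        Scene(start=300, duration=200, score=0.8),
        Scene(start=600, duration=100, score=0.7),
        Scene(start=900, duration=60, score=0.6),
    ]
    for scene in pick_scenes(film):
        print(scene)
```

A greedy pass like this can skip a strong scene that is too long for the remaining budget, which is one reason a human editor would still want the final say.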

The final result was intriguing in comparison to the trailer originally produced, as the AI-generated piece seemed to concentrate on mood and atmosphere in a way that the commercially produced, and more conventionally plot-heavy, trailer did not. In our opinion, this was one of the most successful AI-generated projects of 2016, avoiding the frustrating modern trend of movie trailers giving away most of the plot of the film.

The AI horror film

In August, a Kickstarter campaign was launched to fund the production of the world's first feature film co-written by an AI. Impossible Things was created by a mathematician who built a neural network that pulled apart thousands of successful horror movies and weighted that data against each film's box office success. The system then generated a premise and plot synopsis, from which a human writer constructed a screenplay. The producers released a plot synopsis and an AI-generated trailer to show off their ideas.

The resulting trailer played to us like a compendium of every horror movie cliche you could think of, but the crowdfunding campaign was ultimately successful. The team has now partnered with two serious Hollywood production companies and the film is set to shoot in early 2017, so by the end of next year we should be able to take a look at the first-ever computer-conceived feature film.

The AI short film

2016 saw the emergence of an odd short film entitled Sunspring, which was quickly revealed to have been written entirely by an AI. Filmmaker Oscar Sharp and AI researcher Ross Goodwin appropriated a general text-recognition AI algorithm and then fed the system scores of science fiction screenplays from the 1980s and 1990s. Using films like Ghostbusters and Blade Runner, and every episode of TV's The X-Files, as inspiration, the machine learned how to communicate in screenplay format and composed the screenplay for the short film.

The filmmaker shot the film exactly as the AI had written it, resulting in a final product that was stiflingly incoherent, with no clear structure. The process did reveal several fascinating recurring cinematic tropes that the AI seemingly recognized across numerous screenplays. Characters are constantly exclaiming confusion, with "I don't know what you're talking about" becoming a chorus-like refrain, while the main character shouts "it's not a dream," recalling countless reality-bending sci-fi stories.

The AI pop song

Possibly the most eerily successful AI creation in 2016 was a pop song produced by the Sony CSL Research Laboratory. Sony's Flow Machines software was fed over 13,000 different popular songs and then the system was simply asked to compose a track in the style of The Beatles. As with most AI-generated work, a guiding human hand was required at points. In this instance, French composer Benoit Carre was recruited to arrange the AI-generated segments and write the lyrics (which, strangely enough, come across as the most mechanical aspect of the song).

The result is frighteningly catchy, and Sony plans to release a complete AI-penned album of tunes sometime in 2017.

Google also jumped into the machine-made art world in 2016, launching its Magenta project, an offshoot of its Google Brain team, with the express intention of "developing algorithms that can learn how to generate art and music," with the systems "potentially creating compelling and artistic content on their own."

The first thing to come out of the Magenta project was a short 90-second melody. The drums and arrangement were human-generated, but the entire piano melody and progression came from the project's algorithms. The result is a clever little earworm.

The AI Christmas carol

A team from the University of Toronto went all in on AI creation and produced an entirely AI-generated Christmas carol, a project dubbed "neural karaoke." After being trained on over 100 hours of Christmas music, the team's neural network devised a melody and then built up its own entire music track. The team then taught the system to rhythmically put words to music before training it to connect certain words to certain images. Finally, the system was fed numerous Christmassy images from which it generated its own festive lyrics, which are creepily sung by a computer-generated voice.

The result is probably more terrifying than celebratory, but more than any other AI production this year, it gives the sense that this is truly the beginning of something new. Fast forward through a bunch of iterations of this process and it isn't unimaginable that AI could be creating much of our music in the future.

The AI novel

Earlier in 2016, a novel written almost entirely by an AI system passed the first selection round in a Japanese national literary competition. Titled The Day A Computer Writes A Novel, this meta-tale had its human overseers direct the plot and characters while the AI generated the actual sentences. One judge said the novel's main shortcoming lay in its character descriptions, but the overall result suggested that a degree of human oversight or participation could make this kind of AI-written fiction actually work.

The final line of this AI-penned novel left us with the sense that the machines may have a healthy sense of self-awareness:

"The day a computer wrote a novel. The computer, placing priority on the pursuit of its own joy, stopped working for humans."

The AI art auction

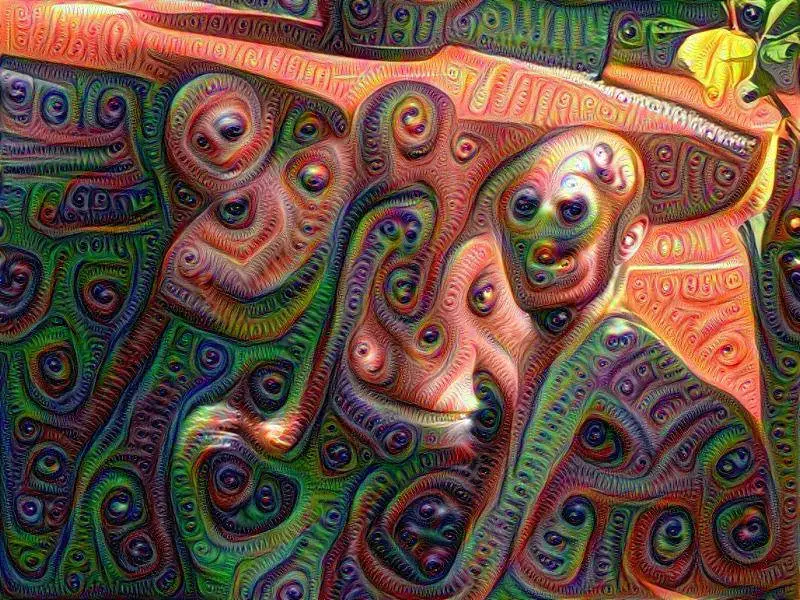

AI-generated visual art has been brewing nicely for several years now, and the release of Google's Deep Dream in 2015 pushed a psychedelic machine-made aesthetic well into the mainstream. Back in February of 2016, Google held an exhibition of machine-made art accompanied by an auction of 29 works of art generated by its Deep Dream algorithm.

The auction made $97,000, with the highest-priced single piece selling for $8,000. The money, of course, was for charity, but the potential for machine-made art to become something of real value in the art world could not be ignored.

The AI poet

In 2016, a poet and software engineer named Karmel Allison blended her two skills and launched CuratedAI, a website that collects submissions from people who have developed their own algorithms dedicated to creating AI-generated poetry and prose.

Allison started the site by publishing her own machine-made poetry, generated by a neural network she programmed with a vocabulary of 190,000 words. Named Deep Gimble I, her system can pen a poem in under one minute!

Here is one of Deep Gimble's most recent efforts, entitled Chaos Everywhere:

chaos everywhere

away he sees some long soul

no in air

out no or where

as yet or clear when time

seems back when time

it with sweet tears

his bosom through high waves

came near into fire

over like no word all

their hopes can fade together

Google also accidentally created a machine poet in 2016 when working with Stanford University and the University of Massachusetts. The team was working on a neural network algorithm intended to improve the ability of AI to communicate in full, grammatically correct sentences. The researchers fed their system thousands of steamy romance novels, thinking the content would be ideal for generating a more conversational style of AI response.

The initial results were some remarkably eerie and abstract poetic ramblings from a machine that has potentially been scarred by a lifetime of reading novels with titles such as Unconditional Love and Jacked Up. One example of the machine's creepy ramblings reads:

he was silent for a long moment.

he was silent for a moment.

it was quiet for a moment.

it was dark and cold.

there was a pause.

it was my turn.

The AI magazine editor

Is it possible to train an AI to have aesthetic taste? EyeEm magazine took on this bold question in 2016 by building an algorithm that can examine a photograph and evaluate its artistic worth. After training their algorithm to examine photographs and rate them out of 100 against a set of aesthetic criteria they established, the creators handed the editorial reins for the latest issue of their magazine over to the AI.
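For a rough feel of what rating photographs out of 100 against a set of criteria could look like, here is a small sketch. It is not EyeEm's algorithm, which was a trained system: the criteria names, the weights and the `aesthetic_score` and `shortlist` functions are all made up for illustration, and a hand-weighted average stands in for whatever the real model learned.

```python
def aesthetic_score(features, weights):
    """Combine per-criterion ratings (each between 0 and 1) into a score out of 100.

    The criteria and weights are hypothetical; a missing criterion counts as 0.
    """
    total_weight = sum(weights.values())
    raw = sum(weights[name] * features.get(name, 0.0) for name in weights)
    return round(100 * raw / total_weight, 1)


def shortlist(photos, weights, top_n=3):
    """Return the ids of the top_n photos, best score first."""
    ranked = sorted(
        photos,
        key=lambda photo: aesthetic_score(photo["features"], weights),
        reverse=True,
    )
    return [photo["id"] for photo in ranked[:top_n]]


if __name__ == "__main__":
    # Illustrative criteria only; composition counts double here.
    weights = {"composition": 2, "lighting": 1, "focus": 1}
    photos = [
        {"id": "a", "features": {"composition": 1.0, "lighting": 0.5, "focus": 0.5}},
        {"id": "b", "features": {"composition": 0.2, "lighting": 0.9, "focus": 0.9}},
        {"id": "c", "features": {"composition": 0.6, "lighting": 0.6, "focus": 0.6}},
    ]
    print(shortlist(photos, weights, top_n=2))
```

Ranking an entire community's uploads this way is what lets an algorithm surface work that a human editor, looking at only a fraction of the pool, might never see.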

The result was a magazine entirely curated by an AI, and its choices surprised some of the EyeEm team. The algorithm dug through the massive EyeEm online community and discovered a young London-based photographer that the human team hadn't even short-listed. Paul Aguirre-Livingston, EyeEm's Associate Creative Director, told The Creators Project of the AI's find, "To say [this photographer] was a hidden gem is a true understatement."