Introduced by Japanese roboticist Masahiro Mori, the “Uncanny Valley” hypothesis holds that the closer a humanoid robot comes to a human appearance without quite achieving it, the less appealing people find it. In yet another attempt to cross the valley, an interdisciplinary team of researchers at the University of Pisa, Italy, gave a female humanoid robot called FACE the ability to produce complex facial expressions. They did so in the hope of answering one fundamental question: can a robot express emotions?

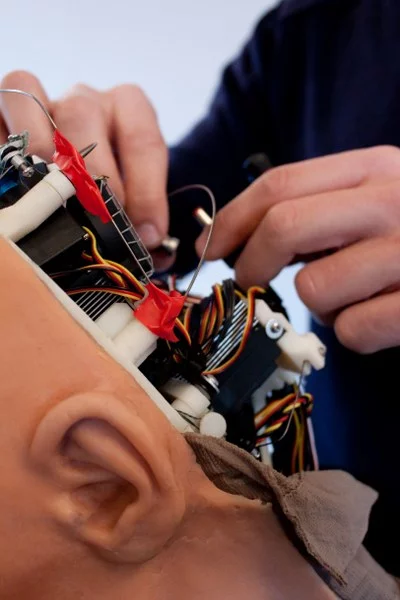

Nicole Lazzeri and her team placed 32 motors around FACE’s skull and torso so that she can mimic real muscle activity. There are over 100 muscles in the human face responsible for expressing emotions, and many of them differ in shape and function. To recreate as many facial expressions as possible with only 32 motors, the scientists wrote a program called HEFES (Hybrid Engine for Facial Expressions Synthesis). It controls the motors and computes exactly how each one must move to produce the desired emotion.

HEFES draws on the Facial Action Coding System, which catalogs how particular emotions are produced in terms of muscle movements. The system has been around since 1978 and is widely used by psychologists and animators. HEFES lets its operator give FACE not only easily classifiable basic emotions such as fear, anger, disgust, surprise, happiness or sadness, but also expressions that lie somewhere between them.
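The article doesn't say how HEFES computes an in-between expression, so the following is only a minimal Python sketch of one simple way it could work, assuming each basic emotion is stored as a vector of 32 normalized motor positions (one per motor) and a blended expression is a weighted average of those vectors. The pose values, motor indices, and the names `BASIC_POSES`, `make_pose` and `blend` are all hypothetical and are not taken from HEFES.

```python
from typing import Dict, List

NUM_MOTORS = 32  # FACE has 32 motors, per the article

# Hypothetical "resting" position for every motor, normalized to [0.0, 1.0].
NEUTRAL: List[float] = [0.5] * NUM_MOTORS


def make_pose(overrides: Dict[int, float]) -> List[float]:
    """Build a hypothetical pose: neutral everywhere except the listed motor indices."""
    pose = list(NEUTRAL)
    for idx, value in overrides.items():
        pose[idx] = value
    return pose


# Illustrative only: which motors move, and how far, is invented for this sketch.
BASIC_POSES: Dict[str, List[float]] = {
    "happiness": make_pose({0: 0.9, 1: 0.9, 10: 0.7}),
    "sadness": make_pose({0: 0.1, 1: 0.1, 12: 0.8}),
    "anger": make_pose({4: 0.95, 5: 0.95, 10: 0.2}),
}


def blend(weights: Dict[str, float]) -> List[float]:
    """Weighted average of basic poses; weights are normalized to sum to 1."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    result = [0.0] * NUM_MOTORS
    for name, w in weights.items():
        pose = BASIC_POSES[name]
        for i in range(NUM_MOTORS):
            result[i] += (w / total) * pose[i]
    # Defensive clamp to the normalized motor range.
    return [min(1.0, max(0.0, v)) for v in result]


# An expression halfway between happiness and sadness.
mixed = blend({"happiness": 0.5, "sadness": 0.5})
```

Averaging motor positions is the simplest possible choice; a real system would also have to respect mechanical limits and the fact that FACS action units don't combine linearly on a physical face.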

Twenty children, five of whom were autistic, were asked to identify the emotions normally associated with the facial expressions presented to them first by FACE and then by a psychologist. Both autistic and non-autistic children were able to identify sadness, happiness and anger without a problem. Identifying surprise, disgust and fear turned out to be more of a challenge. Take a look at the video below to see FACE present the basic emotions.

Source: University of Pisa via NewScientist