Back when DARPA first announced its Autonomous Robotic Manipulation (ARM) program in 2010, the average cost of a military-grade robot hand was around US$50,000. That's expensive even by the US military's standards – especially for something that is bound to be in close contact with explosives – which is why the hardware team of the ARM program tasked participants with developing a reliable low-cost hand. Now, thanks to work by iRobot (yes, the company that makes the Roomba robotic vacuum) and researchers at Harvard and Yale, the ARM program has a surprisingly effective new hand to play with that costs just $3,000 (in batches of 1,000 or more).

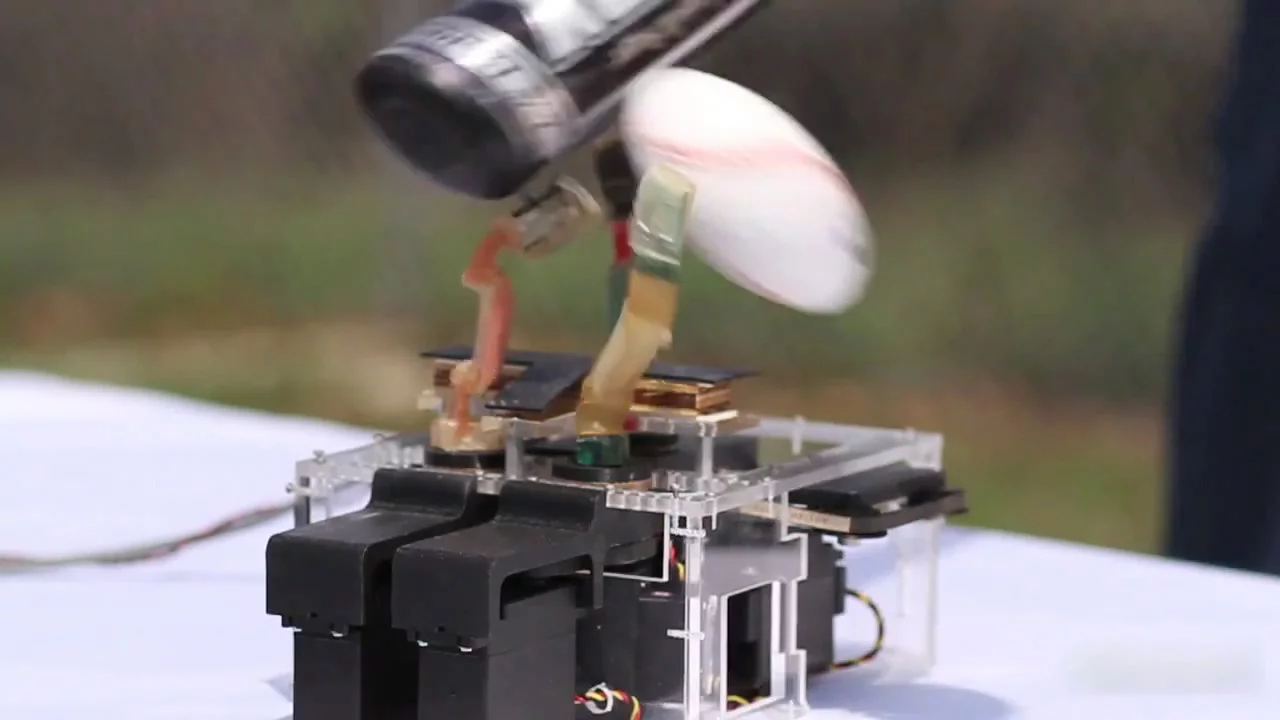

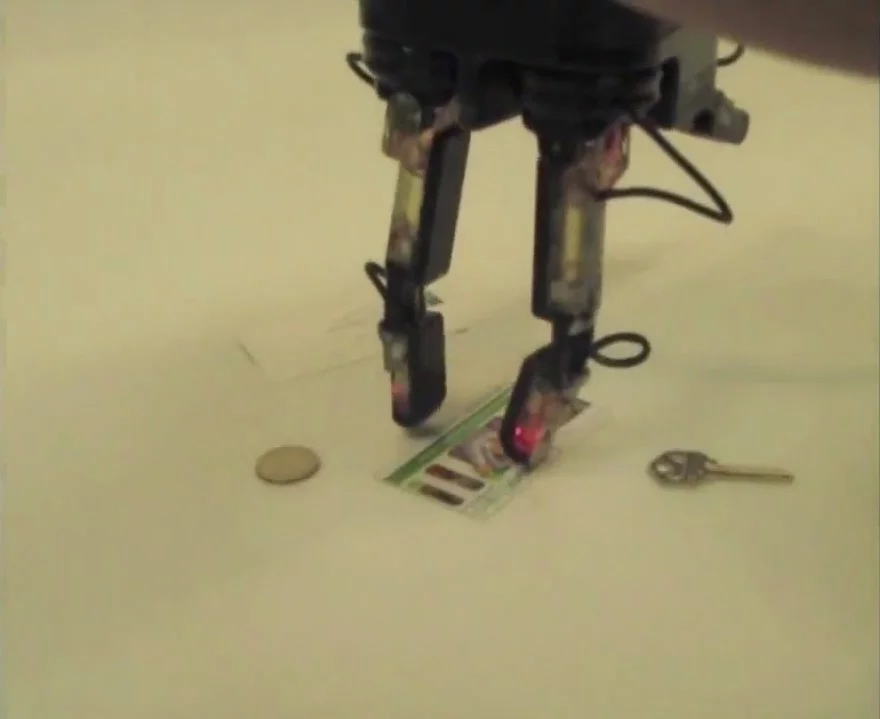

The hand has three fingers that are flexible enough to pick up a wide range of objects, from a basketball to a pin. They're strong, too: the hand can withstand blows from a baseball bat and lift 50 pounds (22.7 kg) – feats that would likely snap more delicate robotic fingers. In one demo, the hand picked up and used a power tool.

The goal of the ARM program (not to be confused with the DARPA Robotics Challenge) is to build a robot that can perform a variety of intricate manipulation tasks with minimal human input. A complete robot will be built by the hardware team, while teams for software and community outreach will develop the necessary artificial intelligence to handle dangerous situations. From the original call for participation:

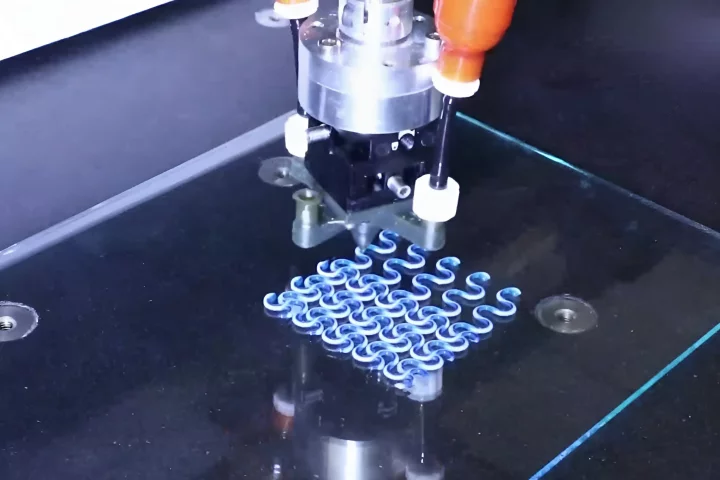

> The software system must enable the GFE (Government Furnished Equipment; i.e. the robot) to perform the Challenge Tasks following a high-level script with no operator intervention. For example, the operator would issue a command such as “Throw Ball.” That command would in turn decompose into a sequence of lower-level tasks, such as “find ball,” “grasp ball,” “re-grasp ball, cock arm, and throw.”
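
To make the idea of a high-level script concrete, here is a minimal Python sketch of how a command like "Throw Ball" might be broken into an ordered list of lower-level tasks. It is purely illustrative and not taken from the ARM program: the `SCRIPTS` table and the `decompose` and `run` functions are hypothetical names, and splitting the final step into "re-grasp ball", "cock arm" and "throw" is one reading of the quoted example.

```python
from typing import Callable, Dict, List

# Hypothetical script table (an assumption for illustration): each
# high-level command maps to an ordered list of lower-level task names.
SCRIPTS: Dict[str, List[str]] = {
    "throw ball": [
        "find ball",
        "grasp ball",
        "re-grasp ball",
        "cock arm",
        "throw",
    ],
}


def decompose(command: str) -> List[str]:
    """Return the ordered lower-level tasks for a high-level command."""
    key = command.strip().lower()
    if key not in SCRIPTS:
        raise KeyError(f"no script for command: {command!r}")
    # Return a copy so callers cannot modify the script table.
    return list(SCRIPTS[key])


def run(command: str, execute: Callable[[str], bool]) -> List[str]:
    """Execute each task in order and stop at the first failure.

    `execute` stands in for whatever actually drives the robot; it
    returns True when a task succeeds. The list of completed tasks
    is returned so the caller can see how far the script got.
    """
    completed: List[str] = []
    for task in decompose(command):
        if not execute(task):
            break
        completed.append(task)
    return completed


if __name__ == "__main__":
    # Pretend every task succeeds and print the tasks that were run.
    for step in run("Throw Ball", lambda task: True):
        print(step)
```

A real system would be far more involved, since each low-level task hides perception and motion planning of its own, but the sketch shows the basic shape: the operator names the goal and the software supplies the sequence.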

There is still more than a year and a half of R&D left before the ARM program is over. The end product will require some serious artificial intelligence software in order to be effective on the battlefield. Not only will the robot need to identify objects that pose a threat from just a few verbal descriptors, it will also need to know how to interact with them (grasping a ball is much easier than unzipping a cloth bag).

If the ARM program is successful, the completed robot will be yet another valuable tool created in part by iRobot, whose military robots include the PackBot and 710 Warrior.