Motion-tracking systems like Wii and Kinect have certainly changed the way we play video games – among other things – but some people still complain that there's too much of a lag between real-world player movements and the corresponding in-game movements of the characters. The creators of the experimental Lumitrack system, however, claim that it has much less lag time than existing systems. They also say that it's highly accurate, and that it should be cheap to commercialize.

Lumitrack was developed by researchers at Carnegie Mellon University and Disney Research Pittsburgh. It consists of projectors that emit barcode-like light patterns, and optical sensors that detect those patterns.

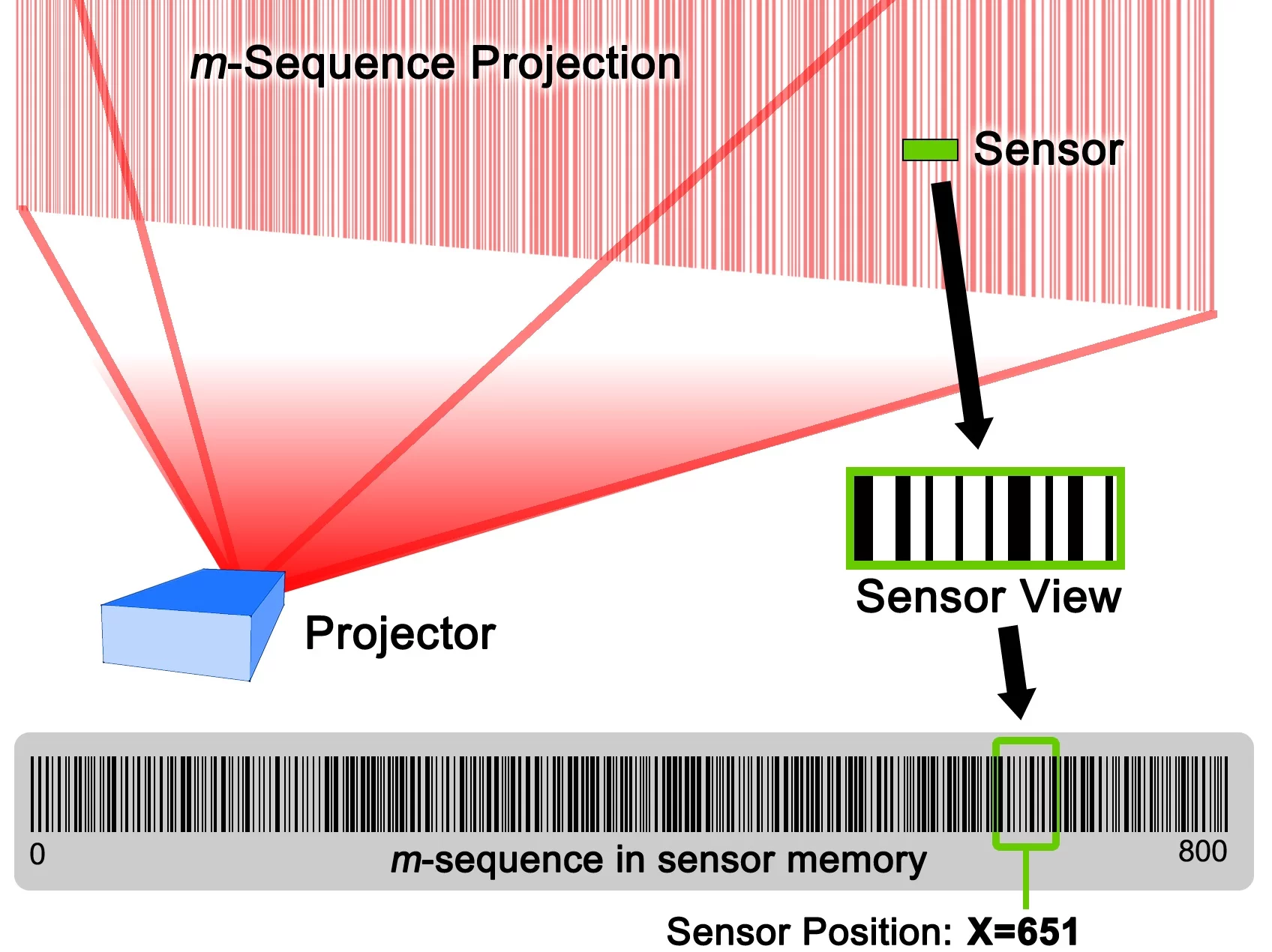

In a "typical" setup, a projector located above the computer screen shines a rectangular image known as an m-sequence onto the player and their surroundings. That m-sequence represents the computer screen, and consists of an assortment of vertical lines of varying thicknesses, with no combination of seven adjacent line types being repeated anywhere in the image.

The player's hand-held game controller, meanwhile, is equipped with the optical sensors. By detecting the specific combination of seven neighboring lines hitting them, those sensors are able to ascertain the corresponding location within the game screen – on the horizontal axis, at least.
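To illustrate how a seven-line window can pin down a position, here is a minimal Python sketch. It is not the researchers' code. It assumes the lines come in just two types (thin = 0, thick = 1) and builds a 127-element pattern from an assumed shift-register recurrence; the names `m_sequence`, `LOOKUP` and `locate` are hypothetical.

```python
from typing import List, Optional, Sequence

WINDOW = 7  # number of adjacent lines a sensor must read


def m_sequence() -> List[int]:
    """Build a 127-element pattern (assumed encoding: 0 = thin line, 1 = thick line)."""
    seq = [1, 0, 0, 0, 0, 0, 0]  # any seed other than all zeros works
    while len(seq) < 2 ** WINDOW - 1:
        # Assumed recurrence s[k+7] = s[k] XOR s[k+1] (polynomial x^7 + x + 1)
        seq.append(seq[-7] ^ seq[-6])
    return seq


PATTERN = m_sequence()

# Map every seven-line window to the index where it starts in the pattern.
LOOKUP = {
    tuple(PATTERN[i:i + WINDOW]): i
    for i in range(len(PATTERN) - WINDOW + 1)
}


def locate(window: Sequence[int]) -> Optional[int]:
    """Return the start index of a seven-line window, or None if it is not in the pattern."""
    return LOOKUP.get(tuple(window))


if __name__ == "__main__":
    print(locate(PATTERN[40:47]))  # prints 40
```

Because no window occurs twice, a single dictionary lookup is enough to recover the position, which is in keeping with the low lag the researchers report.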

To get the vertical axis, a second projector overlays an m-sequence of horizontal lines on top of the first one. Once again, no seven-line combination is repeated anywhere within that image. The sensors use that second sequence to establish the vertical in-game location.
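As a rough sketch of how the two readings might be combined, the Python below turns one window from each pattern into a screen coordinate. Several things here are assumptions rather than details from the researchers: the two-type line encoding, the reuse of the same pattern on both axes, the 1920 x 1080 screen size, and the hypothetical names `build_lookup` and `screen_position`.

```python
WINDOW = 7  # lines per reading


def build_lookup():
    """Return an assumed 127-element pattern and a window-to-index table."""
    seq = [1, 0, 0, 0, 0, 0, 0]
    while len(seq) < 127:
        seq.append(seq[-7] ^ seq[-6])  # assumed recurrence, polynomial x^7 + x + 1
    table = {tuple(seq[i:i + WINDOW]): i for i in range(len(seq) - WINDOW + 1)}
    return seq, table


PATTERN, LOOKUP = build_lookup()
LAST_INDEX = len(PATTERN) - WINDOW  # highest index a window can start at


def screen_position(vertical_lines, horizontal_lines, width=1920, height=1080):
    """Convert two seven-line readings into an (x, y) pixel position.

    The vertical-line pattern gives the horizontal axis, and the
    horizontal-line pattern gives the vertical axis.
    """
    col = LOOKUP.get(tuple(vertical_lines))
    row = LOOKUP.get(tuple(horizontal_lines))
    if col is None or row is None:
        return None  # one of the readings did not match the pattern
    x = round(col / LAST_INDEX * (width - 1))
    y = round(row / LAST_INDEX * (height - 1))
    return x, y


if __name__ == "__main__":
    print(screen_position(PATTERN[0:7], PATTERN[120:127]))  # prints (0, 1079)
```

The two axes are decoded independently, so an unreadable window on either one simply yields no position for that sample.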

As a result, using the information contained in the two m-sequences, a sensor located on the end of a gun-shaped controller could be used to precisely point a virtual gun within a game – just as one example.

In an alternate setup, the game controller could contain the projectors, and the sensors could be located on the screen. Either way, the system reportedly has sub-millimeter accuracy, and response times in the range of 2.5 milliseconds. Although it currently uses visible light, it could be adapted to use invisible infrared light.

According to the university, the sensors require little power and should be cheap to mass-produce. They could even be built into smartphones.

A paper on the research can be accessed via the project website.

Source: Carnegie Mellon University