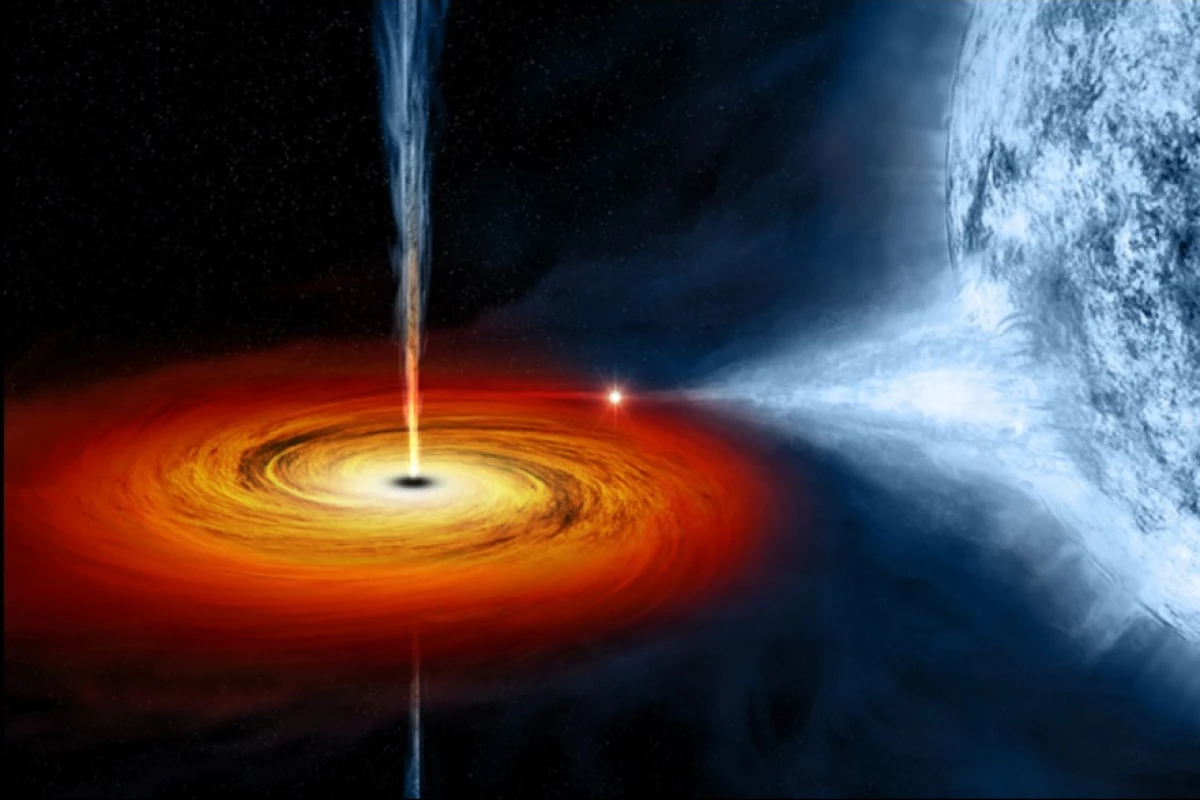

With a gravitational pull so great not even light can escape, it's impossible to directly observe a black hole. But scientists have created a new algorithm that may allow astronomers to generate the first full image of a black hole. Using data collected from a connected array of radio telescopes around the world, the algorithm effectively turns the Earth into a gigantic radio telescope with a resolution factor more than a thousand times greater than that of the Hubble Space Telescope.

Astronomers often use radio telescopes to image distant objects because radio wavelengths are much longer than the visible wavelengths used by optical telescopes, which makes them less susceptible to scattering by the Earth's atmosphere and by dust clouds between us and distant interstellar objects.

"Radio wavelengths come with a lot of advantages," said Katie Bouman, an MIT graduate student who led the development of the new algorithm. "Just like how radio frequencies will go through walls, they pierce through galactic dust. We would never be able to see into the center of our galaxy in visible wavelengths because there's too much stuff in between."

However, there is a trade-off: long radio wavelengths require large antenna dishes to collect the received signals, and for a dish of a given size, image resolution is poorer at longer wavelengths. Radio telescopes are therefore usually teamed up in multi-beam or multi-antenna arrays to help improve the clarity of the captured radio "pictures."

"A black hole is very, very far away and very compact," Bouman says. "[Taking a picture of the black hole in the center of the Milky Way galaxy is] equivalent to taking an image of a grapefruit on the moon, but with a radio telescope. To image something this small means that we would need a telescope with a 10,000-kilometer diameter, which is not practical, because the diameter of the Earth is not even 13,000 kilometers."

This is where an international collaboration known as the Event Horizon Telescope (EHT) comes in. It forms a connected array of radio telescopes criss-crossing the globe using what is known as very long baseline interferometry (VLBI). In essence, VLBI is a technique in which a radio signal from an astronomical object is received at multiple radio telescopes, and the distance between these telescopes is calculated from the time difference between the reception of the radio signal at the different telescopes.

This enables simultaneous observations of an object by many telescopes to be combined, thereby creating a virtual telescope with a diameter equal to the maximum distance between telescopes in the array. In this case, that array is almost the size of the Earth.
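As a rough illustration of why the size of the virtual telescope matters, the sketch below applies the standard diffraction rule of thumb (angular resolution is roughly wavelength divided by diameter). The 1.3 mm observing wavelength, the 100-meter single dish used for comparison, and the function name are assumptions made for this example and are not taken from the researchers' work.

```python
import math

# Conversion factor from radians to microarcseconds.
RAD_TO_MICROARCSEC = math.degrees(1) * 3600 * 1e6


def angular_resolution_rad(wavelength_m, diameter_m):
    """Rule-of-thumb diffraction limit: theta ~ wavelength / diameter."""
    return wavelength_m / diameter_m


# Assumed values for illustration only.
WAVELENGTH_M = 1.3e-3          # assumed observing wavelength (1.3 mm)
SINGLE_DISH_M = 100.0          # hypothetical large single dish
VIRTUAL_TELESCOPE_M = 1.0e7    # 10,000 km, the figure quoted in the article

single = angular_resolution_rad(WAVELENGTH_M, SINGLE_DISH_M)
virtual = angular_resolution_rad(WAVELENGTH_M, VIRTUAL_TELESCOPE_M)

print(f"100 m dish:       {single * RAD_TO_MICROARCSEC:,.0f} microarcseconds")
print(f"10,000 km array:  {virtual * RAD_TO_MICROARCSEC:,.1f} microarcseconds")
print(f"improvement:      {single / virtual:,.0f}x finer detail")
```

Under these assumptions, stretching the aperture from 100 meters to 10,000 kilometers sharpens the achievable detail by a factor of 100,000, which is why the array is spread across the globe rather than built as one dish.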

However, even with this vast network of radio telescopes there are still large gaps in the data that cannot be resolved. This loss of information occurs because, as a radio signal reaches two or more telescopes at slightly different times, the Earth's atmosphere also slows these radio waves down, exaggerating differences in arrival time and skewing the calculations on which the image created by the interferometry relies. The algorithm Bouman helped to develop, known as CHIRP (Continuous High-resolution Image Reconstruction using Patch priors), will help to rectify this problem.

By multiplying the measurements from just three telescopes, the extra delays created by the atmosphere cancel each other out. This increases the number of telescopes required from two to three, but the increase in precision more than makes up for this. Though filtering out atmospheric noise is a big step, the next part of the mathematical process is to put together an image that is precise enough to match the data and accurate enough to meet expectations of what the image should look like. This is because numerous possible images could fit the data due to the sparsity of the telescopes scattered across the globe.
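The cancellation described above can be sketched numerically. In this simplified model, which is an assumption for illustration rather than the researchers' code, each telescope adds its own unknown phase error to the signal, so a measurement on the pair of telescopes i and j is shifted by the difference of their errors. Multiplying the three pairwise measurements around a triangle of telescopes makes every error appear once with a plus sign and once with a minus sign. The visibility values and function names are hypothetical.

```python
import cmath
import math
import random


def corrupted_visibility(true_vis, phase_err_i, phase_err_j):
    """Measurement on the telescope pair i-j after each site adds a phase error."""
    return true_vis * cmath.exp(1j * (phase_err_i - phase_err_j))


def triple_product(v12, v23, v31):
    """Product of the three pairwise measurements around a closed triangle."""
    return v12 * v23 * v31


# Hypothetical "true" measurements (amplitude, phase in radians).
true_v12 = cmath.rect(1.0, 0.4)
true_v23 = cmath.rect(0.8, -1.1)
true_v31 = cmath.rect(0.5, 0.9)

# One random atmospheric phase error per telescope.
random.seed(0)
err = [random.uniform(-math.pi, math.pi) for _ in range(3)]

obs_v12 = corrupted_visibility(true_v12, err[0], err[1])
obs_v23 = corrupted_visibility(true_v23, err[1], err[2])
obs_v31 = corrupted_visibility(true_v31, err[2], err[0])

true_closure = cmath.phase(triple_product(true_v12, true_v23, true_v31))
obs_closure = cmath.phase(triple_product(obs_v12, obs_v23, obs_v31))

print(f"single-pair phase, true vs observed: 0.400 vs {cmath.phase(obs_v12):.3f}")
print(f"triangle phase,    true vs observed: {true_closure:.3f} vs {obs_closure:.3f}")
```

The phase of a single pair is scrambled by the atmosphere, while the phase of the triple product matches the uncorrupted value. The price is that three separate measurements collapse into one combined quantity, so less independent information is available for building the image.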

The traditional algorithm for making sense of astronomical interferometric data is built on the assumption that an image is made up of a collection of separate points of light. In this way, it tries to find the points with brightness and position that best match the known data, and then blurs together bright areas close to each other to create an image.

Bouman and her colleagues advanced this technique with CHIRP, which uses a slightly more complex model. Points of light are still used, but the image is treated as a flat plane of varying gradations of light, built from uniformly spaced cones of varying heights whose bases all have the same diameter.

To align this model to the incoming interferometry data, the team adjusts the heights of the cones, which may even be zero for large areas, corresponding to a flat sheet. Translating the resulting model into a visual image is, in the researchers' analogy, like draping plastic film over it: the film is pulled tight between adjacent peaks but slopes down the sides of the cones that border flat areas. The height of the film then corresponds to the brightness of the image and, because that height varies continuously, the model renders a more natural, continuous image than a simple group of bright points.
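A one-dimensional toy version of the cone model may make the idea more concrete. In the sketch below, each cone becomes a triangular pulse of adjustable height, and the image brightness at any position is the sum of the pulses that overlap it. The pulse half-width equal to the spacing, the sample heights, and the function names are assumptions chosen for illustration; this is not the CHIRP implementation.

```python
def triangle_pulse(x, center, half_width):
    """Cross-section of a cone: 1 at the center, falling linearly to 0."""
    return max(0.0, 1.0 - abs(x - center) / half_width)


def brightness(x, heights, spacing):
    """Continuous brightness from evenly spaced pulses with equal base widths."""
    return sum(
        height * triangle_pulse(x, index * spacing, spacing)
        for index, height in enumerate(heights)
    )


# Hypothetical pulse heights: zero in the flat regions, two bright peaks.
heights = [0.0, 0.0, 1.0, 3.0, 0.0, 0.0]
spacing = 1.0

# Sample the continuous profile at half-spacing steps.
for step in range(0, 11):
    x = step * 0.5
    value = brightness(x, heights, spacing)
    print(f"x = {x:3.1f}  brightness = {value:3.1f}  " + "#" * round(value * 4))
```

Between the two peaks the brightness ramps smoothly from 1 to 3, and on either side it slopes down into the flat regions, much like the film in the analogy. Fitting the model to data then amounts to choosing the list of heights.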

To further refine these images, the team applied a machine-learning algorithm that helped recognize recurring visual patterns in 64-pixel patches of actual astronomical images, which they then used to refine the algorithm's image reconstruction formulae. To check that the method was rendering images correctly, Bouman used synthetic astronomical images and the measurements they would produce at different telescopes, with random fluctuations in atmospheric noise, telescope thermal noise, and other types of interference applied.

According to the researchers, the algorithm often fared better than previous methods in reconstructing the original image from the measurements and tended to be better at filtering out noise.

"There is a large gap between the needed high recovery quality and the little data available (the Event Horizon Telescope project)," said Yoav Schechner, a professor of electrical engineering at Israel's Technion, who was not involved in the work. "This research aims to overcome this gap in several ways: careful modeling of the sensing process, cutting-edge derivation of a prior-image model, and a tool to help future researchers test new methods."

Bouman has made the test data available online for other researchers to use.

Source: MIT