MIT has developed an inexpensive sensor glove designed to enable artificial intelligence to figure out how humans identify objects by touch. Called the Scalable TActile Glove (STAG), it uses 550 tiny pressure sensors to generate patterns that could be used to create improved robotic manipulators and prosthetic hands.

If you've ever fumbled in the dark for your glasses or your phone, then you know that humans are very good at figuring out what an object is just by touch. It's an extremely valuable ability and one that roboticists and engineers would love to emulate. If that were possible, then robots could have much more dexterous manipulators, and prosthetic hands could be much more lifelike and useful.

One way of doing this is to gather as much information as possible about how humans actually identify objects by touch. The reasoning is that, with large enough datasets, machine learning could be used not only to deduce how a human hand identifies an object, but also to estimate the object's weight, something robots and prosthetic limbs have trouble doing.

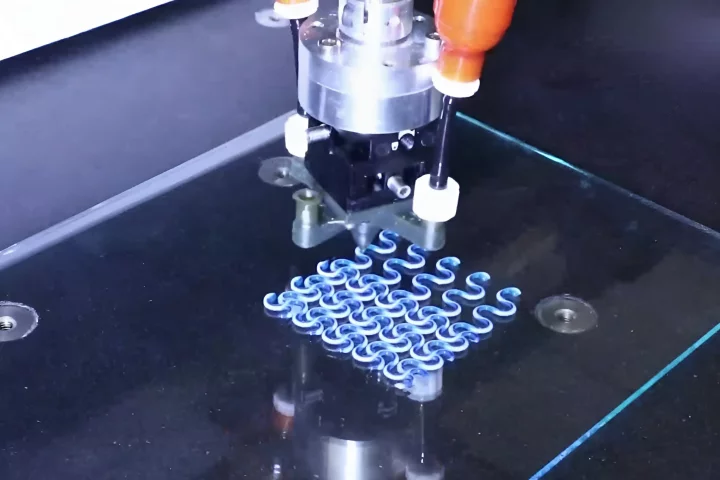

MIT is gathering this data by means of a low-cost knitted glove fitted with 550 pressure sensors. The glove is wired to a computer that collects the pressure measurements and turns them into a video "tactile map." The frames of that video are then fed to a Convolutional Neural Network (CNN), which classifies them by finding specific pressure patterns and matching them to specific objects.
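
To make the "tactile map" idea concrete, here is a minimal Python sketch of how one set of pressure readings might be arranged into a single image-like frame. It is an illustration only, not the researchers' code: the function name `to_tactile_map` and the 22 x 25 grid are assumptions, chosen simply because 22 times 25 equals 550. The article does not describe how the sensors are physically laid out.

```python
def to_tactile_map(readings, rows, cols):
    """Arrange a flat list of pressure readings into a rows x cols grid scaled to 0..1."""
    if len(readings) != rows * cols:
        raise ValueError("expected rows * cols readings")
    lo, hi = min(readings), max(readings)
    span = (hi - lo) or 1.0  # avoid division by zero when every sensor reads the same
    return [
        [(readings[r * cols + c] - lo) / span for c in range(cols)]
        for r in range(rows)
    ]


# Hypothetical layout and made-up readings: 22 * 25 = 550 sensors.
readings = [float(i) for i in range(550)]
frame = to_tactile_map(readings, rows=22, cols=25)
```

A sequence of such frames is what an image classifier like a CNN would consume, one frame per moment of the grasp.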

The team gathered 135,000 video frames from 26 common objects like soda cans, scissors, tennis balls, spoons, pens, and mugs. The neural network then matched semi-random frames to specific grips until a full picture of an object was built up – much in the way a person will identify an object by rolling it in their hand. By using semi-random images, the network can be given related clusters of images so it won't waste time on irrelevant data.
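
The cluster-based selection can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not the team's implementation: it assumes the frames have already been grouped into clusters of similar grasps, and the helper name `sample_across_clusters` and the toy frame IDs are hypothetical.

```python
import random


def sample_across_clusters(clusters, n_frames, seed=None):
    """Pick n_frames frames, each drawn from a different cluster."""
    if n_frames > len(clusters):
        raise ValueError("need at least n_frames clusters")
    rng = random.Random(seed)
    # Choose distinct clusters first, then one random frame inside each.
    chosen = rng.sample(sorted(clusters), n_frames)
    return [(cid, rng.choice(clusters[cid])) for cid in chosen]


# Hypothetical clusters: frame IDs grouped by similar grasp signature.
clusters = {0: [0, 1, 2], 1: [10, 11], 2: [20, 21, 22], 3: [30]}
picked = sample_across_clusters(clusters, n_frames=3, seed=0)
```

Because every selected frame comes from a different cluster, the inputs differ from one another as much as the grouping allows, rather than repeating near-identical grips.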

"We want to maximize the variation between the frames to give the best possible input to our network," says CSAIL postdoc Petr Kellnhofer. "All frames inside a single cluster should have a similar signature that represent the similar ways of grasping the object. Sampling from multiple clusters simulates a human interactively trying to find different grasps while exploring an object."

Not only could this system identify objects with an accuracy of 76 percent, but it also helped researchers to understand how the hand grasps and manipulates them. To estimate weight to within about 60 grams (2.1 oz), a separate dataset of 11,600 frames was compiled showing objects being picked up by finger and thumb before being dropped. By measuring the pressure around the hand while the object is held and comparing it with the pressure after the drop, the weight could be estimated.
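
The held-versus-dropped comparison can be illustrated with a deliberately simple Python sketch. The article does not say how the estimate is actually computed, so everything here is an assumption for illustration: the function `estimate_weight_grams`, the linear calibration factor `grams_per_unit`, and the toy pressure values are all hypothetical.

```python
def estimate_weight_grams(held_frames, released_frames, grams_per_unit):
    """Estimate weight from the drop in mean total pressure after release.

    Assumes, purely for illustration, that weight scales linearly with the
    pressure difference; grams_per_unit is a made-up calibration constant.
    """
    def mean_total(frames):
        return sum(sum(frame) for frame in frames) / len(frames)

    delta = mean_total(held_frames) - mean_total(released_frames)
    return max(delta, 0.0) * grams_per_unit  # clamp so noise cannot go negative


# Toy readings from three sensors, two frames per phase.
held = [[2.0, 3.0, 1.0], [2.0, 3.0, 1.0]]
released = [[0.5, 0.5, 0.0], [0.5, 0.5, 0.0]]
weight = estimate_weight_grams(held, released, grams_per_unit=50.0)
```

Subtracting the post-drop reading removes the pressure that comes from the glove and hand posture alone, leaving the part attributable to the object.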

Other advantages of the system are its cost and sensitivity. Similar sensor gloves cost thousands of dollars and have only 50 sensors, while the MIT glove uses off-the-shelf materials and costs only $10.

The research was published in Nature.

Source: MIT