In conversations about artificial intelligence and the time when machines will be able to function as well as, or better than, human beings, it's often said that one thing computers will never be able to do is create art and music the way we do. Well, that argument just lost a bit of steam thanks to a project carried out by Microsoft and ING. Developed with the Technical University of Delft and two museums in the Netherlands, the project, called "Next Rembrandt," used algorithms and a 3D printer to create a brand-new Rembrandt painting that looks like it could easily have been delivered by the Dutch Master's own hand about 350 years ago.

To create the new painting, the team of experts used computer software and a deep learning algorithm to analyze 346 of Rembrandt's paintings. In addition to studying components of his work, such as how he drew an eye or how faces were proportioned, the project also included an analysis of the height of the paint from the surface of the canvas.

Because Rembrandt produced more portraits than any other kind of painting, the group decided to focus its efforts on that type of artwork, particularly those created between 1632 and 1642, when he painted the largest number of them. After the software analyzed the portraits, it suggested that the perfect Rembrandt painting to produce would be a portrait of a white man aged between 30 and 40 with facial hair. He should also be wearing black clothes and have a collar and a hat, said the analysis.

The next step, the one that is really the crux of the project and of the idea that computers can produce art every bit as good as that of our revered masters, was developing software that basically decoded how Rembrandt did what he did.

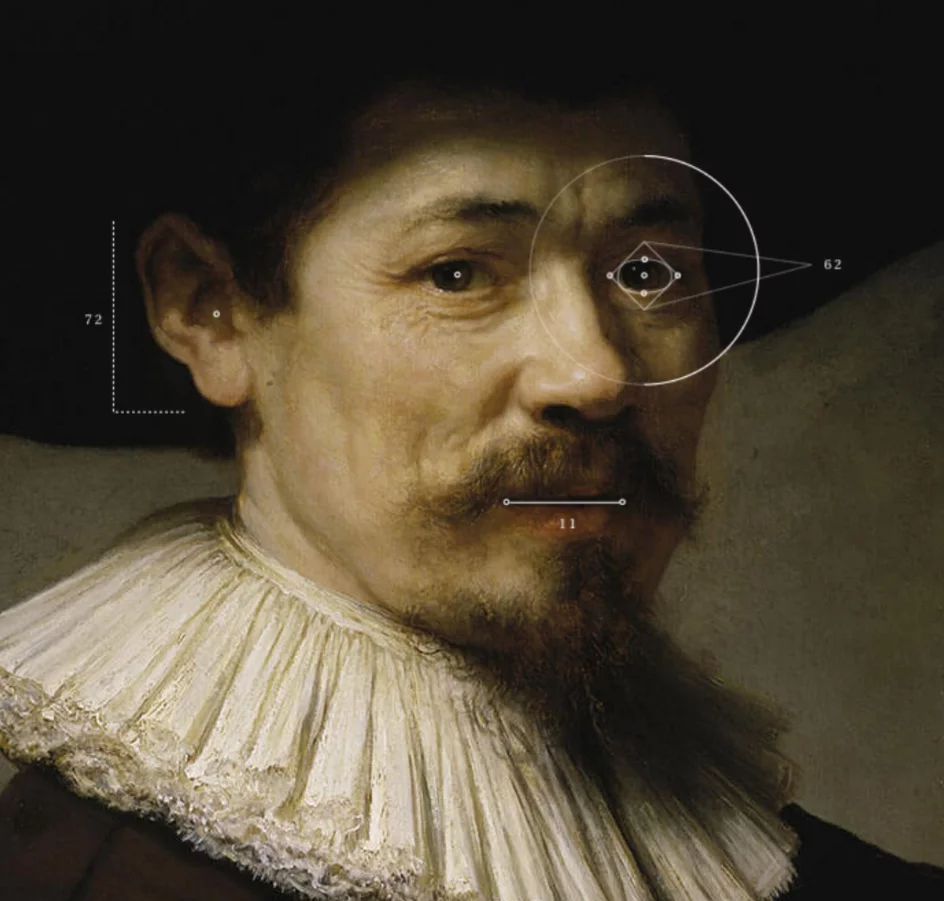

"To master his style, we designed a software system that could understand Rembrandt based on his use of geometry, composition, and painting materials," says the website on which the project is featured. "A facial recognition algorithm identified and classified the most typical geometric patterns used by Rembrandt to paint human features. It then used the learned principles to replicate the style and generate new facial features for our painting."

That means the computer was able to create eyes, a nose, and other facial features by mimicking Rembrandt's style. Then it was time to put those features together.

"An algorithm measured the distances between the facial features in Rembrandt's paintings and calculated them based on percentages," says the site. "Next, the features were transformed, rotated, and scaled, then accurately placed within the frame of the face. Finally, we rendered the light based on gathered data in order to cast authentic shadows on each feature." The rendering process took 500 hours.
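
The project has not published its code, so the following is only a rough sketch of what "distances calculated as percentages" could look like in practice. It assumes each face is described by a handful of named landmark points and a face width in pixels; the landmark names, the sample coordinates, and the functions `proportions` and `average_proportions` are all made up for illustration and are not taken from the Next Rembrandt software.

```python
from math import dist
from statistics import mean

def proportions(landmarks, face_width):
    """Return every pairwise landmark distance as a percentage of face width."""
    names = sorted(landmarks)
    return {
        (a, b): 100.0 * dist(landmarks[a], landmarks[b]) / face_width
        for i, a in enumerate(names)
        for b in names[i + 1:]
    }

def average_proportions(faces):
    """Average each percentage over several (landmarks, face_width) pairs."""
    per_face = [proportions(lm, width) for lm, width in faces]
    return {pair: mean(p[pair] for p in per_face) for pair in per_face[0]}

# Hypothetical data: the same face layout at two different scales.
faces = [
    ({"left_eye": (30, 40), "right_eye": (70, 40), "nose": (50, 70)}, 100),
    ({"left_eye": (60, 80), "right_eye": (140, 80), "nose": (100, 140)}, 200),
]
avg = average_proportions(faces)
```

Expressing distances as percentages rather than pixels is what lets portraits of different sizes be compared: in the made-up data above, both faces give the same eye-to-eye proportion even though one is twice as large as the other.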

Next came the moment for math and machine to do what only skin and bone had once done. Using the paint height map that had been created, a 3D printer laid down 13 layers of ink to create a textured work of art that may as well have been set to canvas by Rembrandt himself.
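
How the height map was turned into printer passes is not described by the project, but one simple way to picture it is as thresholding: each of the 13 layers gets ink only where the desired paint height is still above that layer's level. The sketch below assumes exactly that; `slice_into_layers` and the tiny sample height map are hypothetical and are not the team's actual method.

```python
def slice_into_layers(height_map, n_layers=13):
    """Return one mask per layer; masks[k][r][c] is True where layer k gets ink."""
    peak = max(max(row) for row in height_map)
    if peak <= 0:
        # A completely flat map needs no ink on any layer.
        return [[[False] * len(row) for row in height_map] for _ in range(n_layers)]
    masks = []
    for k in range(n_layers):
        threshold = peak * k / n_layers  # height already built up by layers 0..k-1
        masks.append([[h > threshold for h in row] for row in height_map])
    return masks

# Hypothetical one-row height map: bare canvas, a mid-height ridge, the highest peak.
height_map = [[0.0, 0.5, 1.0]]
masks = slice_into_layers(height_map)
layers_per_pixel = [sum(mask[0][c] for mask in masks) for c in range(3)]
```

In this toy example the bare-canvas pixel receives no ink, the mid-height pixel receives about half of the layers, and the highest point receives all 13, which is how stacked flat layers can approximate the relief of brushstrokes.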

In the end, the painting took 18 months to create and consists of 148 billion pixels. It is on temporary exhibit at the Looiersgracht 60 gallery in Amsterdam. A permanent home has yet to be announced.

You can gain deeper insight into the artist, the process by which his style was recreated, and the new painting itself on The Next Rembrandt website or through the accompanying video.

Sources: ING, The Next Rembrandt