If robots are ever going to earn their keep, they'll need to learn on the job. Factory supervisors have better things to do than program complex instructions, so what if they could just show robots what to do, the same way they would a new human worker? AI researchers from Nvidia have now demonstrated a system that lets robots do just that, learning how to perform a specific task by watching someone do it just once.

If past research is anything to go by, robots could one day learn through verbal instructions, and literally read our minds to correct themselves when they're making a mistake. After that, we could have robots teaching each other these skills, even sharing knowledge with each other through the cloud.

"For robots to perform useful tasks in real-world settings, it must be easy to communicate the task to the robot; this includes both the desired result and any hints as to the best means to achieve that result," the Nvidia researchers explain. "With demonstrations, a user can communicate a task to the robot and provide clues as to how to best perform the task."

For the new experiments, the researchers trained a series of neural networks to work together to complete a task demonstrated for them by a human. The networks run on Nvidia's Titan X GPUs and are hooked up to a camera that watches the human's actions and a robot claw that mimics them.

The researchers trained the system to recognize a set of four colored blocks and a toy car. The human instructor would arrange these in a specific way, then scatter them. Then it was the robot's job to reassemble the scene as it was shown.

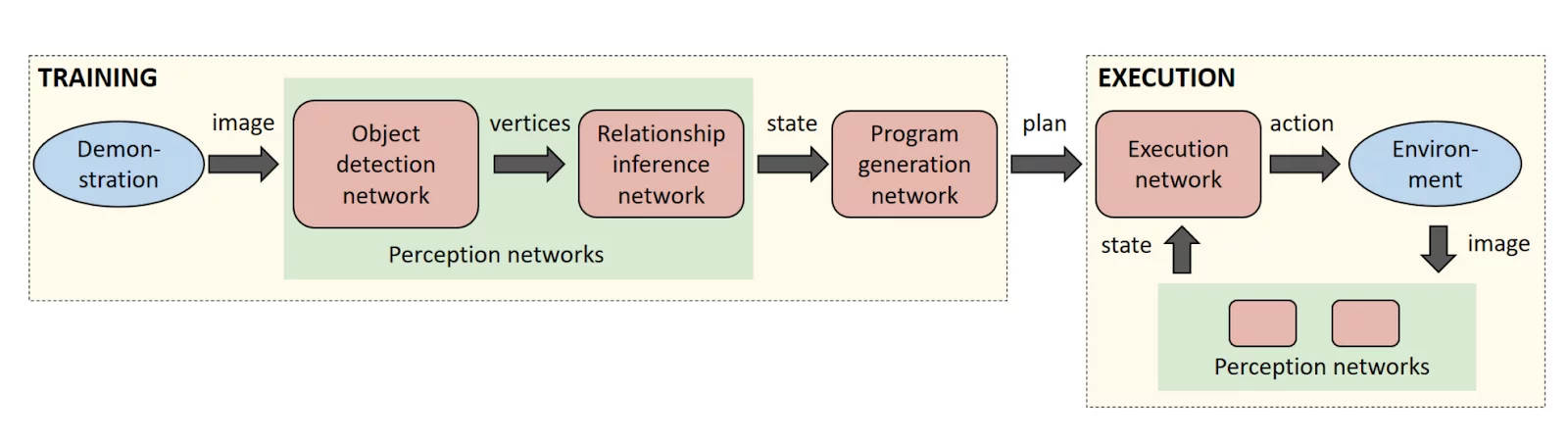

To do so, each of the neural networks has its own job. First, an object detection network figures out what the camera is looking at. Next, a relationship inference network works out how those pieces fit together: the blue block is on top of the red block, for example. A program generation network then determines what needs to be done to achieve the goal, and finally the plan is passed to an execution network that guides the claw to pull it off.
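To make that hand-off between stages concrete, here is a rough Python sketch of the four-step chain. It is not Nvidia's code: the real system uses trained neural networks at each stage, whereas every function here is a hand-written stand-in, and all names (`Detection`, `detect_objects`, `infer_relationships`, `generate_program`, `execute`, `run_pipeline`) and the idea of feeding in ready-made coordinates instead of a camera image are assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    name: str
    x: float
    y: float
    z: float  # height of the object's base above the table (hypothetical units)


def detect_objects(frame):
    """Stand-in for the object detection network.

    The 'frame' here is already a list of (name, x, y, z) tuples.
    """
    return [Detection(*item) for item in frame]


def infer_relationships(detections, xy_tol=0.02):
    """Stand-in for relationship inference: return (top, bottom) pairs."""
    relations = []
    for top in detections:
        # Anything lower down at roughly the same x/y position is underneath.
        below = [
            d for d in detections
            if d.z < top.z
            and abs(d.x - top.x) <= xy_tol
            and abs(d.y - top.y) <= xy_tol
        ]
        if below:
            support = max(below, key=lambda d: d.z)  # the object directly beneath
            relations.append((top.name, support.name))
    return relations


def generate_program(relations):
    """Stand-in for program generation: order steps so supports come first."""
    pending = dict(relations)                        # top -> bottom
    placed = set(pending.values()) - set(pending)    # objects resting on the table
    steps = []
    while pending:
        ready = sorted(top for top, bottom in pending.items() if bottom in placed)
        if not ready:
            raise ValueError("circular stacking relations")
        for top in ready:
            steps.append(("place", top, pending.pop(top)))
            placed.add(top)
    return steps


def execute(steps):
    """Stand-in for the execution network: emit commands instead of moving a claw."""
    return [f"pick up {top}, put it on {bottom}" for _, top, bottom in steps]


def run_pipeline(frame):
    return execute(generate_program(infer_relationships(detect_objects(frame))))
```

Given a made-up scene with a red, a blue and a green block stacked at the same spot and a toy car off to one side, `run_pipeline` would return one command per stacking relation, ordered from the bottom of the tower up. The point of the sketch is only the structure: each stage consumes the previous stage's output, so a mistake early on can be traced to a specific step.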

The robot automatically picks up on its own mistakes, too. If it messes up at any stage of the process, it realizes it hasn't achieved the goal yet and tries again. But before it does anything, the robot also produces a description of its plan designed to be readable by humans, so its supervisors can check that it hasn't misinterpreted the task, and reteach it if necessary.
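The check-and-retry behavior can be pictured as a simple loop: print the plan in plain language, act, look again, and redo whatever is still missing. The Python sketch below illustrates that idea only; it is not the researchers' implementation. The names `describe_plan`, `run_until_goal` and `FlakyWorld`, the `max_rounds` limit, and the simulated gripper that drops a block once are all hypothetical.

```python
def describe_plan(goal):
    """Render the plan as sentences a human supervisor can check."""
    return [f"Place {top} on {bottom}" for top, bottom in goal]


def run_until_goal(goal, observe, execute_step, max_rounds=5):
    """Repeat missing steps until the observed scene matches the goal.

    goal is an ordered list of (top, bottom) pairs; observe() returns the
    set of pairs currently true in the scene. Returns the number of rounds
    of action that were needed.
    """
    for round_no in range(max_rounds + 1):
        current = observe()
        missing = [step for step in goal if step not in current]
        if not missing:
            return round_no          # goal reached
        if round_no == max_rounds:
            break                    # out of attempts
        for step in missing:
            execute_step(step)       # may silently fail; checked next round
    raise RuntimeError("goal not reached within max_rounds")


class FlakyWorld:
    """Simulated scene whose gripper fails once on selected steps."""

    def __init__(self, fail_once=()):
        self.state = set()
        self.fail_once = set(fail_once)

    def observe(self):
        return set(self.state)

    def execute_step(self, step):
        if step in self.fail_once:
            self.fail_once.discard(step)  # the block is dropped this time
            return
        self.state.add(step)
```

In this toy setup, a supervisor would read the output of `describe_plan` before anything moves, and `run_until_goal` would only stop once the observed scene matches the goal or the attempt limit is hit.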

The researchers say the new learning technique should speed up the process of teaching robots, because it doesn't need to be trained on a huge amount of labeled data.

The research paper has been published online, and the team will present it at the International Conference on Robotics and Automation (ICRA) in Brisbane, Australia this week. The robot can be seen in action in the video below.

Source: Nvidia