There’s a lot we can learn from the way different animals see the world, whether it's a mantis shrimp inspiring a new cancer-detecting camera, electric fish that could help us see through murky waters, or bug eyes leading to new kinds of wide-angle cameras. The ability of jumping spiders to correctly judge the distance of their leaps is another example, with scientists at Harvard tapping into these skills to develop a new kind of depth-perception sensor for cameras in small devices.

Depth sensors like the type used in the Xbox’s Kinect system or newer smartphones with features like Face ID use an array of cameras and light sources to gauge their surroundings. Their physical size allows for relatively powerful processors and batteries to make sense of all the data, but scaling this type of tech down for smaller devices such as wearables, tiny robots and augmented reality headsets is another matter.

The answer, it turns out, may lie with the jumping spider, which has incredible depth-sensing abilities despite its tiny brain. These creatures have evolved a highly efficient system for gauging the distance to objects around them, which enables them to pounce on their prey from several body lengths away.

This is thanks to the way the animal processes visual information. Each of a jumping spider's larger principal eyes features several semi-transparent retinal layers, which feed its brain multiple images with different amounts of blurring. The spider uses these differences in blurring to figure out how far away its prey is, which enables it to judge its leap accurately.

Scientists have been able to recreate this process in the lab, but not without involving big cameras and motorized equipment to generate images with different amounts of blurring. But an engineering team at Harvard now claims to have come up with a way of scaling things down in a big way.

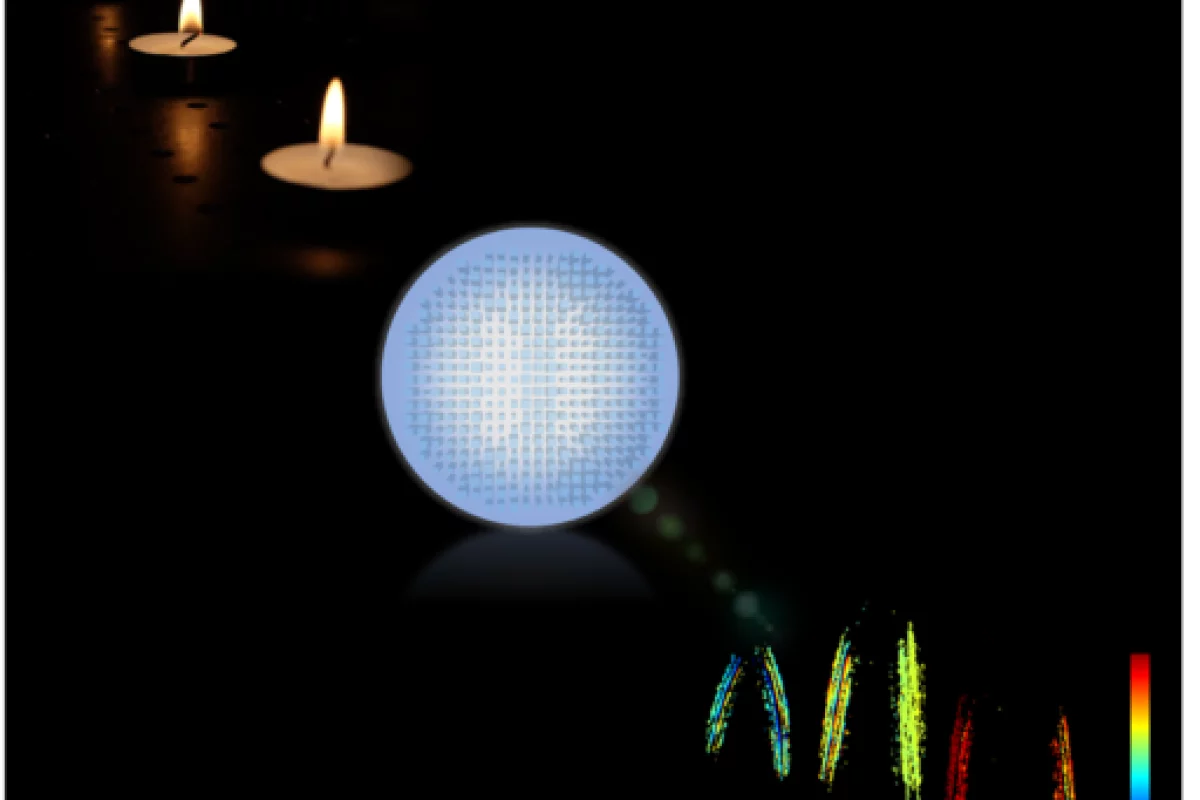

The scientists developed a new type of metalens, which is an ultra-thin lens featuring nanoscale structures on the surface that shape the way the light is focused as it passes through. The team designed a metalens to capture two images at the same time, but with different amounts of blurring. A computer vision algorithm then processes the two images and spits out a depth map that indicates the distance to an object.
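To make the idea of reading depth from two differently blurred images concrete, here is a minimal one-dimensional sketch in Python. It is not the Harvard team's algorithm: it assumes Gaussian blur whose width grows with an object's distance (in inverse-depth terms) from each image's plane of best focus, and every name and parameter in it (`FOCUS_A`, `FOCUS_B`, `GAIN`, `estimate_depth` and so on) is hypothetical. It returns a single distance for a flat textured patch; a depth map would repeat the estimate patch by patch.

```python
import math

# Hypothetical toy optics: two images focused at different distances (metres),
# with blur width (pixels) = GAIN * |1/depth - 1/focus_distance|.
FOCUS_A, FOCUS_B, GAIN = 0.2, 0.5, 1.5


def gaussian_blur(signal, sigma):
    """Circularly convolve a 1D signal with a normalized Gaussian kernel."""
    radius = int(4 * sigma) + 1
    offsets = range(-radius, radius + 1)
    kernel = [math.exp(-0.5 * (k / sigma) ** 2) for k in offsets]
    total = sum(kernel)
    n = len(signal)
    return [
        sum(w * signal[(i + k) % n] for k, w in zip(offsets, kernel)) / total
        for i in range(n)
    ]


def laplacian(signal):
    """Discrete second derivative with wrap-around boundaries."""
    n = len(signal)
    return [signal[(i - 1) % n] - 2 * signal[i] + signal[(i + 1) % n]
            for i in range(n)]


def simulate_pair(depth, n=256):
    """Render the same texture at the given depth with two defocus levels."""
    texture = [sum(math.sin(2 * math.pi * h * i / n) for h in (3, 7, 11))
               for i in range(n)]
    sigma_a = GAIN * abs(1 / depth - 1 / FOCUS_A)
    sigma_b = GAIN * abs(1 / depth - 1 / FOCUS_B)
    return gaussian_blur(texture, sigma_a), gaussian_blur(texture, sigma_b)


def estimate_depth(img_a, img_b):
    """Recover depth from the difference in blur between two images."""
    diff = [b - a for a, b in zip(img_a, img_b)]
    lap = laplacian([(a + b) / 2 for a, b in zip(img_a, img_b)])
    # Gaussian blur obeys the heat equation, so
    # img_b - img_a ~ 0.5 * (sigma_b^2 - sigma_a^2) * laplacian(image).
    # Solve for the variance difference by least squares over the patch.
    delta_var = 2 * sum(d * l for d, l in zip(diff, lap)) / sum(l * l for l in lap)
    # Under the toy blur model, the variance difference is linear in 1/depth.
    rho_a, rho_b = 1 / FOCUS_A, 1 / FOCUS_B
    inv_depth = (rho_a + rho_b) / 2 - delta_var / (2 * GAIN ** 2 * (rho_b - rho_a))
    return 1 / inv_depth


if __name__ == "__main__":
    image_a, image_b = simulate_pair(depth=0.3)
    print(f"estimated depth: {estimate_depth(image_a, image_b):.3f} m")
```

The estimate needs only one image difference, one Laplacian and a division, which hints at why this style of depth sensing can be computationally light compared with approaches that search across many focus settings.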

“Instead of using layered retina to capture multiple simultaneous images, as jumping spiders do, the metalens splits the light and forms two differently-defocused images side-by-side on a photosensor,” says Zhujun Shi, co-author of the paper.

With further development, the team imagines all kinds of applications for its compact new depth sensor. It could be built into tiny robots, for example, or wearables such as smartwatches or augmented reality headsets.

“Metalenses are a game changing technology because of their ability to implement existing and new optical functions much more efficiently, faster and with much less bulk and complexity than existing lenses,” says Federico Capasso, co-senior author of the paper. “Fusing breakthroughs in optical design and computational imaging has led us to this new depth camera that will open up a broad range of opportunities in science and technology.”

The team has published its research in the Proceedings of the National Academy of Sciences.

Source: Harvard University