In a fight against the type of "offensive or clearly misleading" results that make up about 0.25 percent of daily search traffic, Google has outlined new efforts to stymie the spread of fake news and other low-quality content, such as unexpected offensive material, hoaxes and baseless conspiracy theories.

The search giant's first line of defense against this uniquely 21st-century spread of misinformation is actually an old-fashioned one: human intelligence. You might think search runs entirely on algorithms and AI these days, but Google actually has human evaluators who perform searches, assess the results and provide feedback to the company. Google has updated the guidelines for this team of Search Quality Raters.

Now, raters have more detailed instructions for identifying low-quality search results. The improved feedback from these human testers will then inform changes to Google's search algorithms, so false and misleading content appears lower in the results.

Google has also done some fine-tuning under the hood, adjusting its ranking signals specifically to demote problematic content. As is typical of the more behind-the-scenes aspects of its ranking system, Google does not detail the specifics of these changes, but it did cite Holocaust denial results that appeared in December 2016 as an example of the type of problem it hopes to resolve.

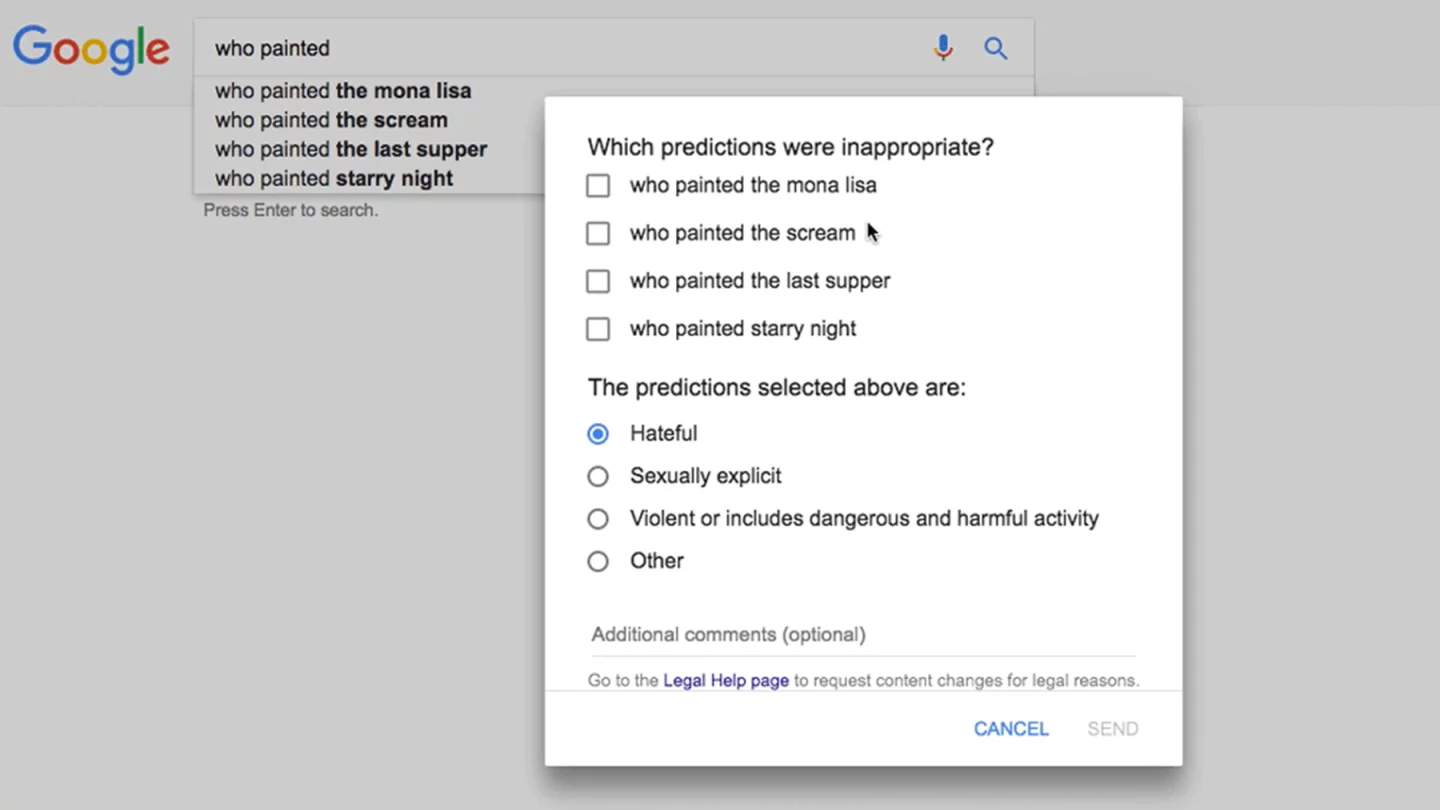

Lastly, there are new direct feedback tools through which users can flag problem content that appears in Google's Autocomplete and Featured Snippets features. These features help you arrive more quickly at the content you're looking for, but since their content is generated automatically, it is sometimes problematic. A "Report inappropriate predictions" link now appears under the Google search box, and a "Feedback" option now appears below the Featured Snippet.

Google isn't the only major platform battling fake news – social media giant Facebook and other major tech companies are also funding solutions to curb the problem.

Source: Google