Apple's decision to nix the headphone jack on this year's iPhones has certainly caused a stir. Whether you're a staunch Apple fan or you'd rather use Windows XP than send your cash to Cupertino, let's take a look at all the hardware that Apple's already killed. Then you can decide whether or not removal of the headphone jack is following a tradition of innovation – or something else altogether.

Apple puts down roots

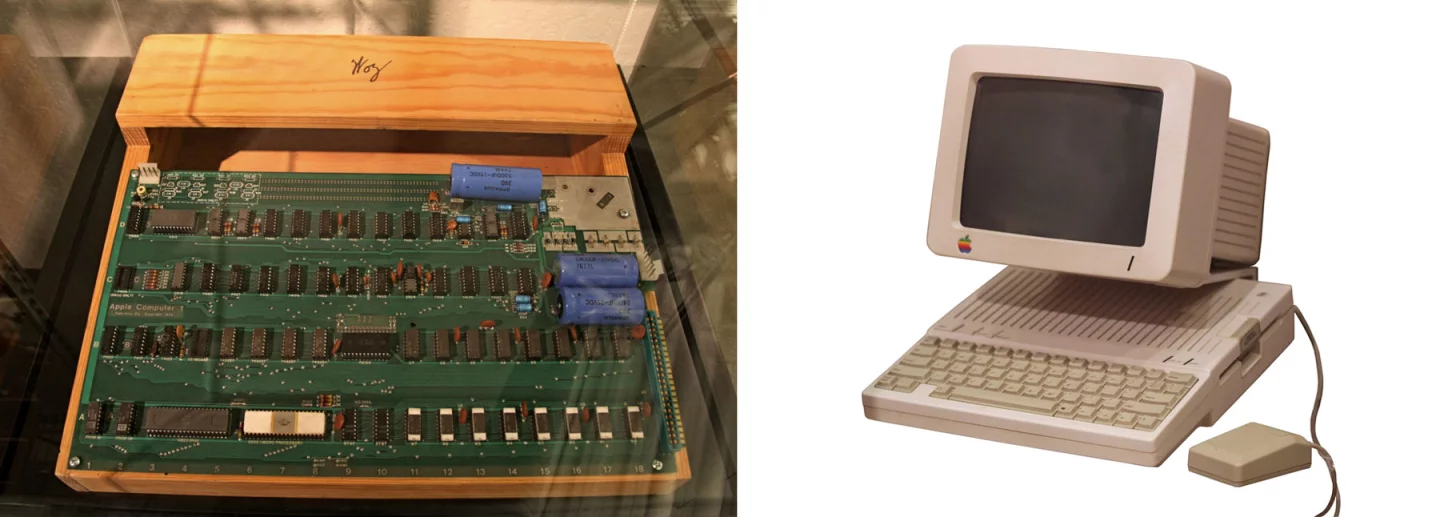

You may already know about the cash-strapped, homespun roots of Apple Computer – the genesis story has worked itself into public knowledge. Its first product, the Apple I computer, was sold from the back pages of a magazine. It was only a caseless circuit board, and strictly a hobbyist's machine. Buyers had to connect it to a TV set and keyboard themselves.

Small-time sales were enough to drive the development of the Apple II, a plastic-cased computer closer to the PCs of today. The first Apple II had a built-in keyboard and in some cases connected to a TV display. The original retail price of the computer – in 1977 – was US$1,298. Adjusted for inflation, that's about $5,158 today. Yes, it had a staggering amount of capability for its era, but it was still an expensive device that, on its own, solved exactly zero problems.

How on earth did it sell? Two main factors were 1) its ability to run VisiCalc, the first-of-its-kind spreadsheet software that revolutionized the business world, via floppy disk; and 2) it looked good. Individuals with reasonable technical aptitude could set it up and use it without being intimidated by wires, chips and overwhelming cords. The molded plastic case took care of all that.

In a sense, the first thing that Apple introduced – then took away – was a DIY mentality. To this day, the closed-off quality of Apple machines evokes both hostility and admiration. The closing-off process had roots in the Apple II's plastic case, and the first Macintosh took it even further: Apple permitted only special service centers to open the computer's case, and none of the parts or slots were upgradeable, an unheard-of move at the time.

What followed was the birth of modern personal computing, a period of growth and additions. But during this time, Apple underwent a tumultuous period that included failed projects, a temporary ousting of Steve Jobs, and near-decimation at the hands of its Windows-based competitors.

1998: iMac flips off the floppy disk

Fast forward to the late 1990s, with Steve Jobs back at the helm. In 1998, Apple released the first iMac, a candy-colored desktop that helped revive Apple and catapult it into the internet age. It sold well amidst acclaim and controversy, setting the tone for Apple product launches to follow.

What was missing? The floppy disk drive – the exact technology that allowed the Apple II to bridge the gap between a hobbyist's gadget and a daily-use tool – along with mouse, keyboard and printer-specific ports. Instead, there was a CD drive and new USB technology for connecting peripherals.

An outcry erupted. Consumers were not happy about the idea of buying new USB-ready external hardware, while Apple argued that the internet and rewritable CDs were replacing floppy drives as the primary way to move local files around.

But there was another point of contention as well. The first iMac was announced with a 33.6k modem, a lagging speed even in 1998. Many were incredulous – the new iMac was being billed as a screamer, where "i" stood for "internet," yet it was only being offered with subpar connectivity. In a rare quick response to consumer demand, Apple boosted the modem to 56k before most of the devices started shipping. It stood firm on the existing ports and drives.

Original iMac sales were through the roof. For several months, it was the top-selling computer in the United States. It was so well-received that a barrage of third-party USB-ready peripherals hit the market, and everything from external hardware to kitchen accessories was copying its colorful, translucent design.

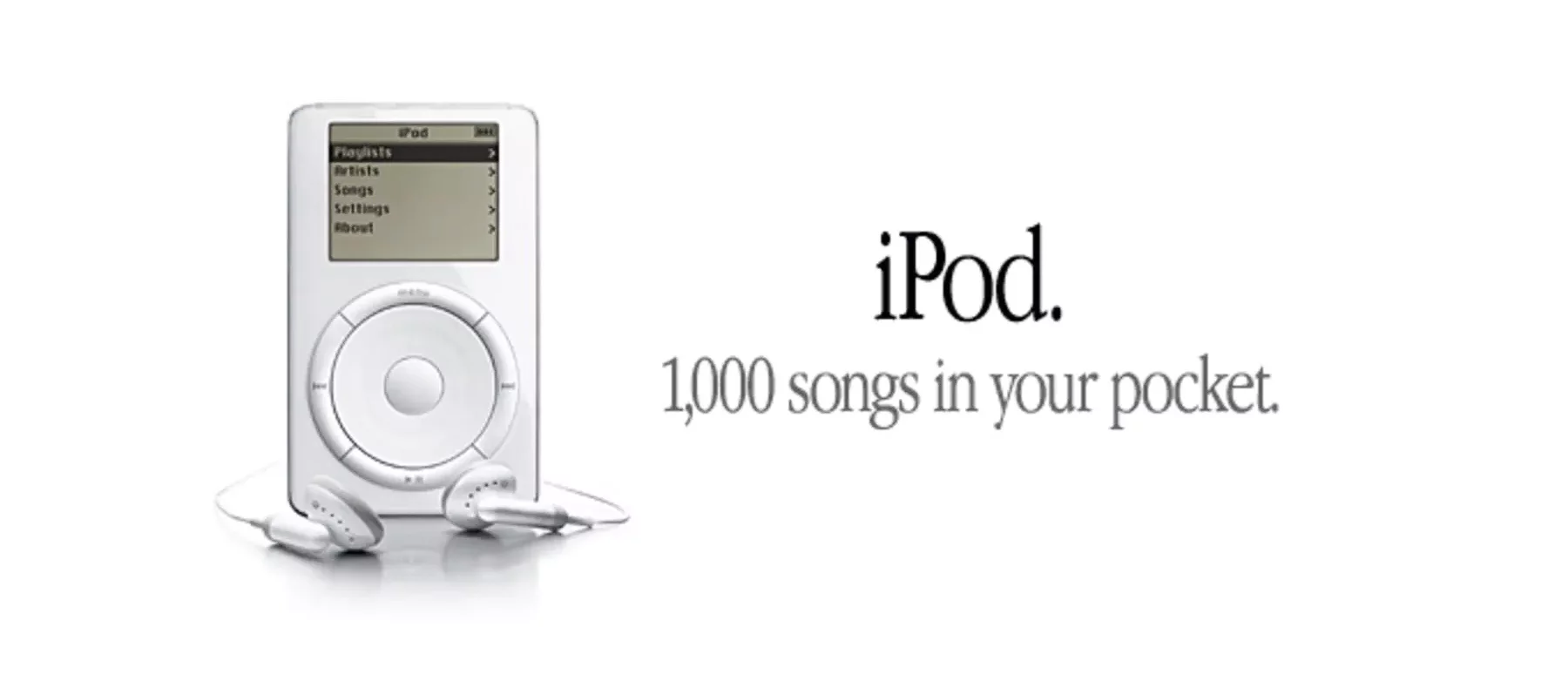

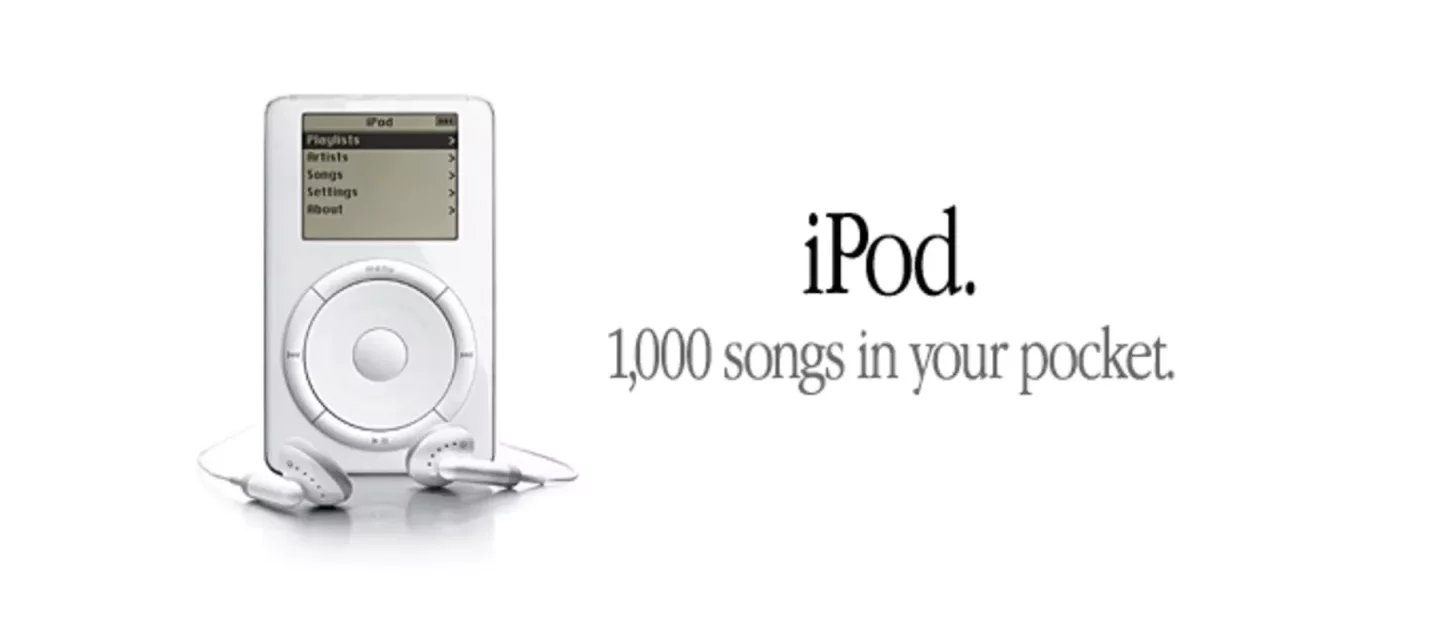

2001: iPod, a case study of simplicity

The iMac's success established the groundwork for much of Apple's modern identity, and by extension, the birth of the iPod in 2001. Several developments differentiated the iPod from its competitors, but for the sake of this article, let's note what the iPod was missing: visible construction elements like screws, a removable battery and an on/off switch.

These components, which would have seemed like necessary ingredients in any piece of consumer electronics, were hardly missed. Arguably, their removal bolstered the device's slick appearance, keeping the internal mechanisms out of sight and out of mind.

2007: iPhone bypasses a physical keyboard

If you were a cell phone power user in the pre-iPhone era, you were likely sporting either a snappy flip phone or a PDA, such as the stylus-navigated PalmPilot or the BlackBerry with its pocket-sized keyboard.

Of these, the first generation iPhone most closely resembled a PDA, yet it was without stylus or keyboard. Instead, it presented a dynamic onscreen keyboard for touch typing. Many consumers found it hard to imagine a pleasant touch typing experience (a notion that is echoed in some responses to the Lenovo Yoga launched last month), but as we now know, this became a mainstay in mobile technology.

The first-generation iPhone also lacked a removable battery and an SD card slot, omissions that persist in today's iPhones.

2008: MacBook Air nixes the CD/DVD drive

When it was originally launched, the MacBook Air was positioned to be Apple's premium ultrathin laptop. But that super-slim sexiness came at a price: MacBook Air was the first Apple laptop to ditch the optical CD/DVD drive. It had one USB 2.0 port for connecting peripherals plus a headphone jack and micro-DVI port.

Critics acknowledged its good looks but also questioned the utility of that attractiveness. Despite its expensive top-of-the-line placement, it seemed less than fully featured, being the first major laptop to pare down so much hardware. Many Apple products have been criticized as status symbols first, computing devices second, and the first-generation MacBook Air received much of this ire.

It's interesting to note that since then, MacBook Air has evolved into one of Apple's entry-level laptops. It's still super svelte, but its price and performance are on the low end of Apple's notebook offerings. Furthermore, Apple has not included a built-in optical drive on any of its new products for a couple of years now.

2012: Lightning strikes the 30-pin charging dock

Few events are as fraught with superlative language as mobile technology launch events. Of these, Apple keynotes seem to have the most sycophants in attendance. But when Apple's marketing VP Phil Schiller announced that the iPhone 5 would use a Lightning charger that made the legacy 30-pin charging dock obsolete, there was a distinctly lukewarm reaction.

As expected, VP Phil lived up to his last name and shilled the phone within an inch of its life. The new Lightning connector, he said, took up less room and allowed the new flagship to offer more features at a smaller size: Weighing only 112 grams and measuring 7.6 millimeters thick, the iPhone 5 was light and thin even by today's standards.

A 30-pin-to-Lightning adapter sold separately for about $29, but it was not compatible with all accessories. Despite the benefits attributed to the switch to Lightning, few were excited about it – except perhaps companies that manufacture iPhone accessories. The original Lightning port controversy has since died down, but the 86ing of the headphone jack has brought this still-recent memory back to mind.

2015: 12-inch MacBook gets rid of all ports except one USB

The 12-inch MacBook is Apple's newest entry-level everyday laptop, but it has only one lonely USB-C port for all your peripherals and charging. For almost everyone, that demands the occasional use of an additional third-party USB hub.

If a single port is intended to create a streamlined experience, it's not doing its job if a bulky hub is a constant necessity. The single port is likely a show of Apple's belief in a fully wireless future. Even if the company is right, we're still waiting for the industry to catch up.

2016: iPhone 7 is sans-headphone jack

That brings us to the current controversy: Has Apple gone too far in its removal of the 3.5-mm headphone port from the iPhone 7 and 7 Plus? Should owners of wired headphones consider making the jump to Android? Will the omission be applauded in hindsight, like iMac skipping the floppy disk, or will public demand be quietly accommodated, like the relegation of the MacBook Air into a lower-end model?

Wherever your opinion falls, it does seem that Apple fares better when it's liberal with the axe: In the past, hardware removal has spurred industry innovation and generated massive amounts of publicity. What remains to be seen is whether Apple can be "innovative" to a fault – and whether the current round of hardware cuts is a hollowed-out iteration of a formula or is setting the stage for something much bigger.