Engineers at Cornell University have developed a new wearable device that can monitor a person’s facial expressions through sonar and recreate them on a digital avatar. Removing cameras from the equation could alleviate privacy concerns.

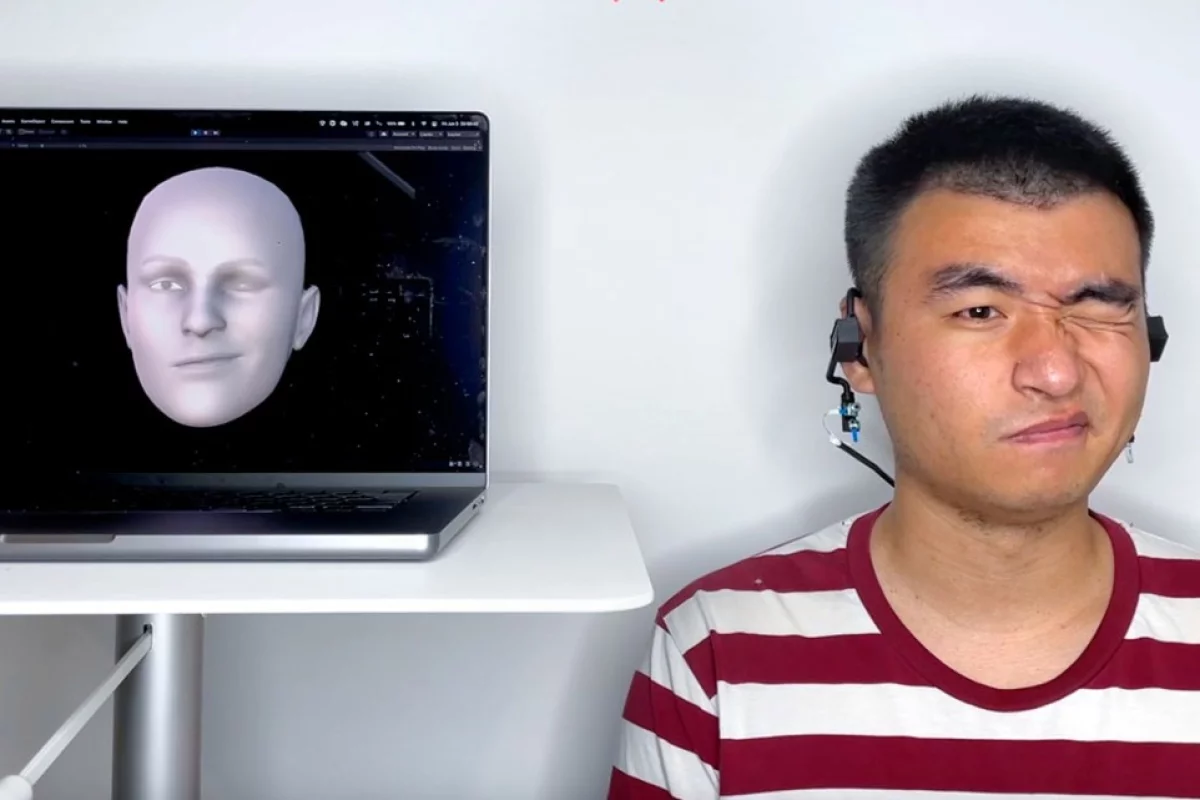

EarIO, as the team calls the device, places a microphone and a speaker on each side of a pair of earphones, and the hardware can attach to any regular headset. Each speaker fires off pulses of sound, outside the range of human hearing, towards the wearer’s face, and the microphones pick up the echoes.

The echo profiles change subtly as the user’s skin moves, stretches and wrinkles, whether they are pulling different facial expressions or talking. Specially trained algorithms can recognize these echo profiles and rapidly reconstruct the expression on the user’s face, displaying it on a digital avatar.
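To make the idea of an “echo profile” more concrete, here is a minimal Python sketch of one common way acoustic-sensing systems compute one: transmit a frequency sweep (a chirp), then cross-correlate the recorded audio with it, so that each lag shows how strongly sound bounced back after that delay. This is an illustration under stated assumptions, not EarIO’s published pipeline. The 48 kHz sample rate, the 18–21 kHz band, the 10 ms chirp length and every function name are hypothetical, and the “face” is a single simulated reflection rather than a real recording. Subtracting consecutive profiles gives a differential profile that highlights movement, since static reflections cancel out.

```python
import math

SAMPLE_RATE = 48_000    # Hz; hypothetical, a common audio sample rate
SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 °C


def make_chirp(f0: float, f1: float, n_samples: int, fs: int = SAMPLE_RATE) -> list[float]:
    """Linear frequency sweep from f0 Hz to f1 Hz over n_samples samples."""
    duration = n_samples / fs
    k = (f1 - f0) / duration  # sweep rate in Hz per second
    return [
        math.sin(2 * math.pi * (f0 * t + 0.5 * k * t * t))
        for t in (n / fs for n in range(n_samples))
    ]


def simulate_echo(tx: list[float], delays_and_gains: list[tuple[int, float]],
                  total_len: int) -> list[float]:
    """Hypothetical stand-in for a microphone: sum of delayed, scaled copies of tx."""
    rx = [0.0] * total_len
    for delay, gain in delays_and_gains:
        for n, s in enumerate(tx):
            if n + delay < total_len:
                rx[n + delay] += gain * s
    return rx


def echo_profile(tx: list[float], rx: list[float], max_lag: int) -> list[float]:
    """Cross-correlate the received audio with the transmitted chirp.

    Entry `lag` measures how strongly an echo arrives `lag` samples late.
    """
    profile = []
    for lag in range(max_lag):
        acc = 0.0
        for n, s in enumerate(tx):
            if n + lag < len(rx):
                acc += s * rx[n + lag]
        profile.append(acc)
    return profile


def lag_to_distance(lag: int, fs: int = SAMPLE_RATE) -> float:
    """Convert a round-trip delay in samples to a one-way distance in metres."""
    return SPEED_OF_SOUND * (lag / fs) / 2


def differential_profile(prev: list[float], curr: list[float]) -> list[float]:
    """Frame-to-frame change; reflections that did not move cancel to zero."""
    return [c - p for p, c in zip(prev, curr)]


if __name__ == "__main__":
    tx = make_chirp(18_000, 21_000, 480)               # 10 ms sweep
    rx = simulate_echo(tx, [(20, 0.8)], len(tx) + 64)  # one reflector, 20 samples late
    profile = echo_profile(tx, rx, max_lag=64)
    peak = max(range(len(profile)), key=lambda i: abs(profile[i]))
    print(f"strongest echo at lag {peak} (~{lag_to_distance(peak) * 100:.1f} cm)")
    # prints: strongest echo at lag 20 (~7.1 cm)
```

In a real system, a trained model would then map sequences of such profiles to facial expressions; that learning step is deliberately left out of this sketch.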

“Through the power of AI, the algorithm finds complex connections between muscle movement and facial expressions that human eyes cannot identify,” said Ke Li, co-author of the study. “We can use that to infer complex information that is harder to capture – the whole front of the face.”

The team tested the EarIO system on 16 participants, running the algorithm on a regular smartphone. And sure enough, the device was able to reconstruct facial expressions about as well as a regular camera could. Background sounds such as wind, conversation or street noise didn’t interfere with its ability to register faces.

Sonar has a few advantages over using a camera, the team says. Acoustic data requires far less energy and processing power, which also means the device can be smaller and lighter. Cameras can also capture a great deal of other personal information a user might not intend to share, so sonar could be more private.

As for what you might use this technology for, it could be a handy way to replicate your physical facial expressions on a digital avatar for games, VR or the metaverse.

The team says further work is still needed to tune out other interference, such as when the user turns their head, and to streamline the training system for the AI algorithm.

The research was published in the journal Proceedings of the Association for Computing Machinery on Interactive, Mobile, Wearable and Ubiquitous Technologies.

Source: Cornell University