Driven by advances in artificial intelligence, doctored video content known as deepfakes presents a serious and growing danger when it comes to the spread of misinformation. As these altered clips become more and more convincing, there is a pressing need for tools that can help distinguish them from real ones. Computer scientists at the University at Buffalo have just offered a compelling example of what this could look like, developing a technology they say can spot deepfakes in portrait-like photos with 94 percent effectiveness by analyzing tiny reflections in the eyes.

Deepfakes are artificial media created by training deep learning algorithms on real video footage of a person and mixing that with computer imagery to create fictional video footage of them instead. These have become increasingly realistic, but they are not all as benign as this doctored video of Game of Thrones character Jon Snow apologizing for the show's ending.

Experts are growing more fearful of the impact deepfake technology could have on democracy through doctored videos of politicians appearing to say things that they never in fact said. In Speaker Nancy Pelosi's case, a video was altered to make her appear to stumble over her words and was subsequently tweeted by former President Donald Trump to his millions of followers.

The research by the University at Buffalo team was spearheaded by computer scientist Siwei Lyu, who sought to develop a new deepfake detection tool by leveraging tiny deviations in the reflections in the eyes. When we look at something in real life, its reflection appears in both of our eyes with the same shape and color.

“The cornea is almost like a perfect semisphere and is very reflective,” says Lyu. “So, anything that is coming to the eye with a light emitting from those sources will have an image on the cornea. The two eyes should have very similar reflective patterns because they’re seeing the same thing. It’s something that we typically don’t typically notice when we look at a face.”

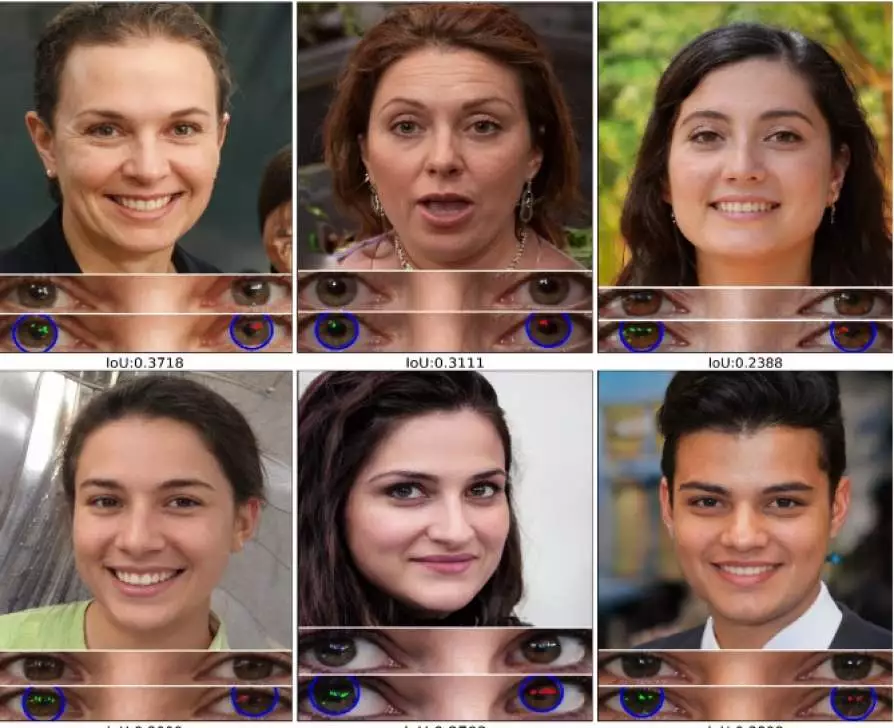

But this isn't the case with deepfakes, possibly due to the many different images used to create them. Lyu and his team built a computer tool that takes aim at this flaw by first mapping out the face, then examining the eyes, then the eyeballs, and finally the light reflected in each eyeball. In very fine detail, the tool then compares the two reflections for differences in shape and light intensity.
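To make the comparison step concrete, here is a minimal Python sketch of the general idea, not the team's actual tool. It assumes the face and eyes have already been located, and that each eye is supplied as a small grayscale patch (a list of rows of 0-255 brightness values) of the same size and alignment. The function names, the brightness threshold of 200 and the two reported scores are illustrative assumptions rather than details from the research.

```python
def highlight_mask(patch, threshold=200):
    """Mark pixels bright enough to count as reflected light (threshold is an assumption)."""
    return [[value >= threshold for value in row] for row in patch]


def mask_overlap(mask_a, mask_b):
    """Shape similarity: shared highlight pixels divided by all highlight pixels."""
    shared = total = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            shared += a and b
            total += a or b
    # With no highlight in either eye there is nothing to compare.
    return shared / total if total else None


def mean_highlight_intensity(patch, mask):
    """Average brightness of the pixels flagged as reflected light."""
    values = [v for row, flags in zip(patch, mask) for v, f in zip(row, flags) if f]
    return sum(values) / len(values) if values else 0.0


def reflection_consistency(left_patch, right_patch, threshold=200):
    """Compare the reflections in two aligned, equally sized eye patches."""
    left_mask = highlight_mask(left_patch, threshold)
    right_mask = highlight_mask(right_patch, threshold)
    return {
        "shape_overlap": mask_overlap(left_mask, right_mask),
        "intensity_gap": abs(
            mean_highlight_intensity(left_patch, left_mask)
            - mean_highlight_intensity(right_patch, right_mask)
        ),
    }


# Toy 4x4 patches: the second right eye has a smaller, dimmer, shifted highlight.
left_eye = [
    [10, 10, 10, 10],
    [10, 250, 250, 10],
    [10, 250, 250, 10],
    [10, 10, 10, 10],
]
mismatched_right_eye = [
    [10, 10, 10, 10],
    [10, 10, 10, 10],
    [10, 10, 220, 220],
    [10, 10, 10, 10],
]

print(reflection_consistency(left_eye, left_eye))
print(reflection_consistency(left_eye, mismatched_right_eye))
```

In this toy setup, a high shape overlap and a small intensity gap suggest that both eyes are reflecting the same scene, while a low overlap or a large gap is the kind of mismatch the article describes. A real detector would also need to handle eye localization, head pose and the choice of decision thresholds, none of which this sketch attempts.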

Experimenting with their new tool on a combination of real portraits and deepfakes, the team found it was able to distinguish the latter with 94 percent effectiveness. Despite this promising figure, the team notes there are still several limitations to the approach. Among them is the fact that these deviations could be fixed with editing software and that the image must present a clear view of the eye for the technique to work.

A paper describing the research is available online.

Source: University at Buffalo