One thing robots are pretty good at doing is, well, one thing over and over again. Confront them with objects of different shapes and sizes, or with different actions to perform, and that's another story entirely. Looking to expand these possibilities, scientists at MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL) have developed versatile new robot control software they say can learn to pick up and put down all kinds of things, even ones it has never seen before.

"Whenever you see a robot video on YouTube, you should watch carefully for what the robot is not doing," says MIT professor Russ Tedrake, senior author on a new paper about the project. "Robots can pick almost anything up, but if it's an object they haven't seen before, they can't actually put it down in any meaningful way."

The work echoes similar research projects from CSAIL and elsewhere that seek to build robots that can delicately handle a range of objects. These include conventionally shaped soft robotic grippers along with more unorthodox designs resembling green blobs, fish and, just this week, a Venus flytrap.

But the CSAIL researchers say typical robotic grippers rely mostly on estimating an object's position and orientation, using algorithms based heavily on geometry to grab hold of it. In their view, this has some limitations, particularly when grabbing objects of vastly different shapes and trying to put them down again with any sort of delicacy.

Their new approach instead relies on a set of keypoints on an object, which the system interprets as coordinates. The researchers offer a mug as an example: the system needs only three keypoints to handle it, with one each at the center of the mug's side, bottom and handle enough to do the job.
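To make the idea of keypoints as coordinates concrete, here is a minimal Python sketch. It is not the CSAIL implementation: the `Keypoint` class, the helper functions and all coordinate values are hypothetical, chosen only for illustration. It also handles translation only, whereas a real system would need to account for orientation as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Keypoint:
    """A single 3D point on an object, in meters (hypothetical representation)."""
    x: float
    y: float
    z: float


def offset_to_target(source: Keypoint, target: Keypoint) -> tuple:
    """Return the (dx, dy, dz) shift that moves `source` onto `target`."""
    return (target.x - source.x, target.y - source.y, target.z - source.z)


def translate(kp: Keypoint, offset: tuple) -> Keypoint:
    """Shift a keypoint by the given offset."""
    dx, dy, dz = offset
    return Keypoint(kp.x + dx, kp.y + dy, kp.z + dz)


# Three made-up keypoints for a mug: side, bottom and handle.
mug = {
    "side": Keypoint(0.40, 0.10, 0.05),
    "bottom": Keypoint(0.40, 0.14, 0.00),
    "handle": Keypoint(0.46, 0.14, 0.05),
}

# Made-up goal: the mug's bottom keypoint should end up on a shelf.
shelf_spot = Keypoint(0.00, 0.50, 0.25)

# One shift, computed from the bottom keypoint, is applied to every keypoint,
# so the mug moves as a whole and keeps its shape.
shift = offset_to_target(mug["bottom"], shelf_spot)
placed_mug = {name: translate(kp, shift) for name, kp in mug.items()}
```

The point of the sketch is that the goal is stated in terms of a keypoint ("put the bottom here") rather than the full geometry of the mug, which is why the same goal could in principle apply to mugs of different shapes.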

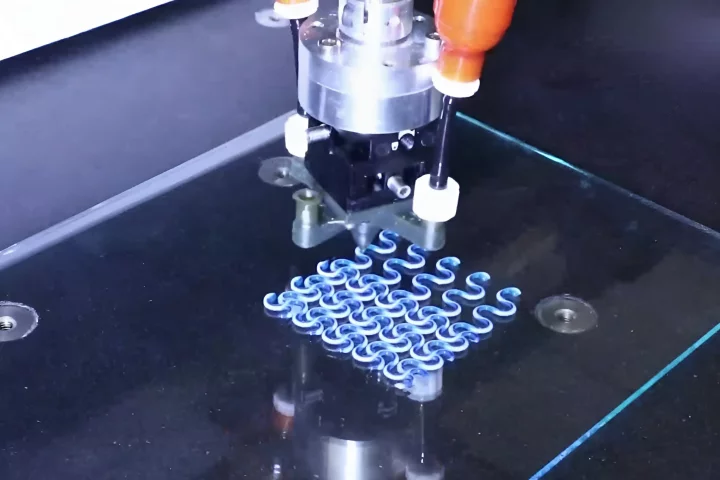

Called Keypoint Affordance Manipulation (KPAM), this control software is designed to afford robots far greater flexibility. By feeding the system six keypoints, the scientists were able to have a robot arm running KPAM pick up more than 20 different pairs of shoes including everything from slippers to boots. And though it ran into some trouble trying to pick up a pair of high heels, adding a few pairs to its neural network training data quickly saw it complete the task.

"Understanding just a little bit more about the object – the location of a few key points – is enough to enable a wide range of useful manipulation tasks," says Tedrake. "And this particular representation works magically well with today's state-of-the-art machine learning perception and planning algorithms."

With further work, the team hopes to improve the KPAM technology so that it can do even more generalized tasks, such as unloading a dishwasher or cleaning kitchen counters. What's more, they say its way of learning on the job means it could easily form part of larger manipulation systems in factories and the like.

A paper describing the research is available online, and you can see KPAM go to work in the video below.

Source: CSAIL