Electronic systems don't work well in heat – which is a problem, because apart from a few exceptions, heat is a normal byproduct of electricity. Researchers have now developed a thermal diode: a computer component that runs on heat instead of electricity. This could be the first step towards making heat-resistant computers that can function in extremely hot places, like on Venus or deep inside the Earth, without breaking a sweat.

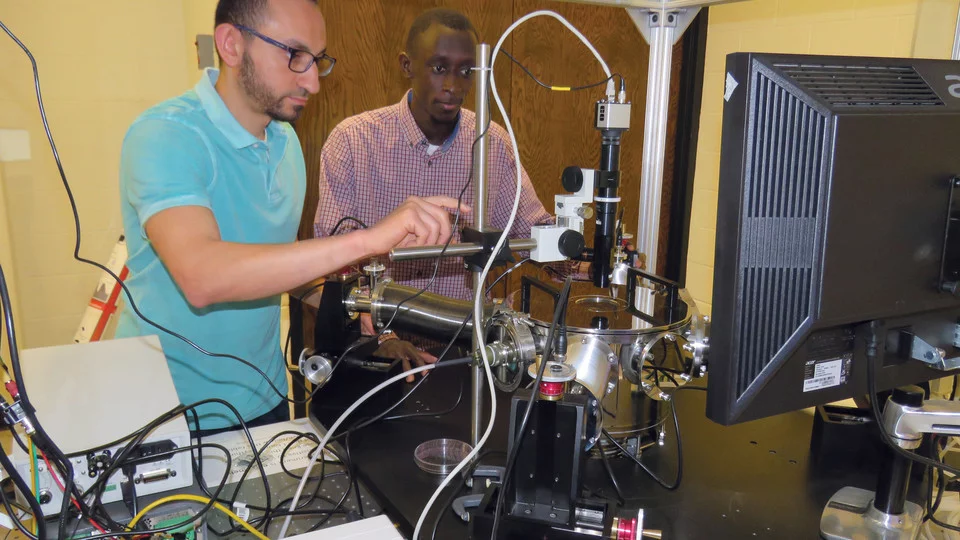

A regular diode is a key logic component in electronic circuits that allows electricity to flow freely in one direction but blocks it from moving back the other way. These crucial components often fail under high temperatures or when exposed to ionizing radiation, so to help make hardier computer systems, a team at the University of Nebraska-Lincoln has developed thermal diodes, powered by heat instead of electricity.

"If you think about it, whatever you do with electricity you should (also) be able to do with heat, because they are similar in many ways," says Sidy Ndao, co-author of the study. "In principle, they are both energy carriers. If you could control heat, you could use it to do computing and avoid the problem of overheating."

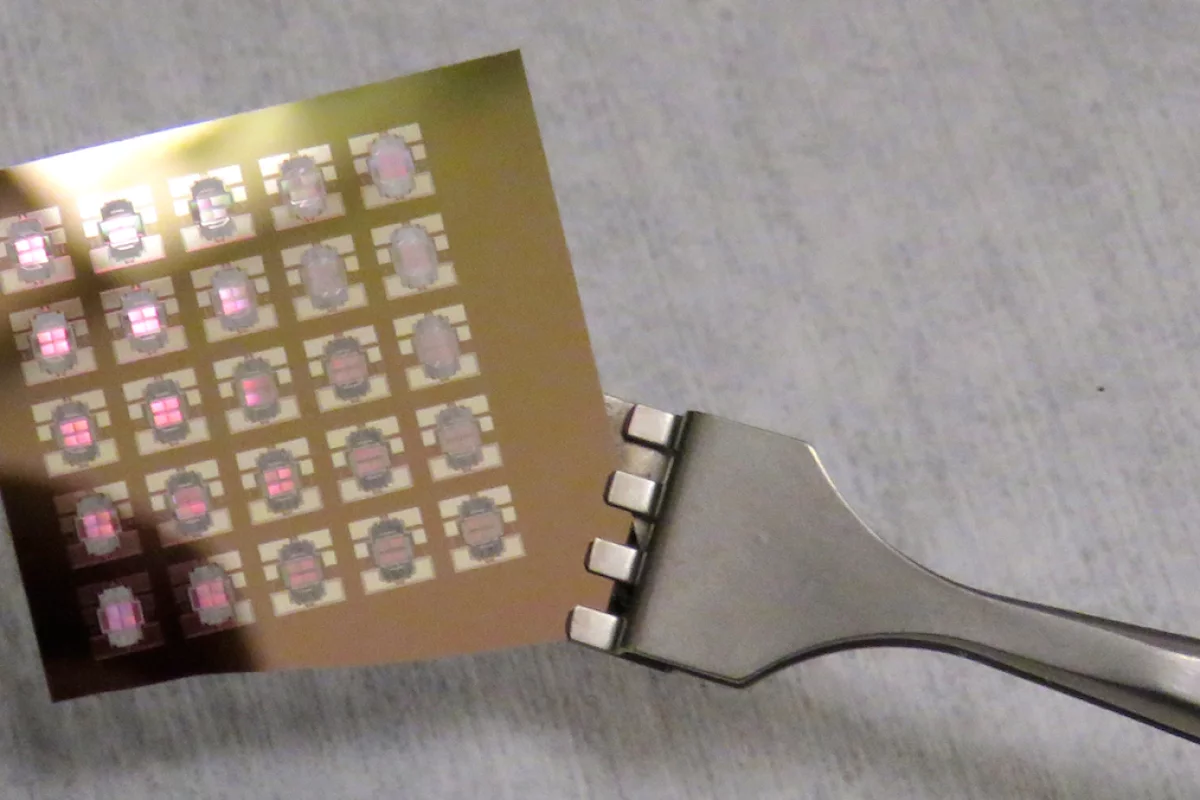

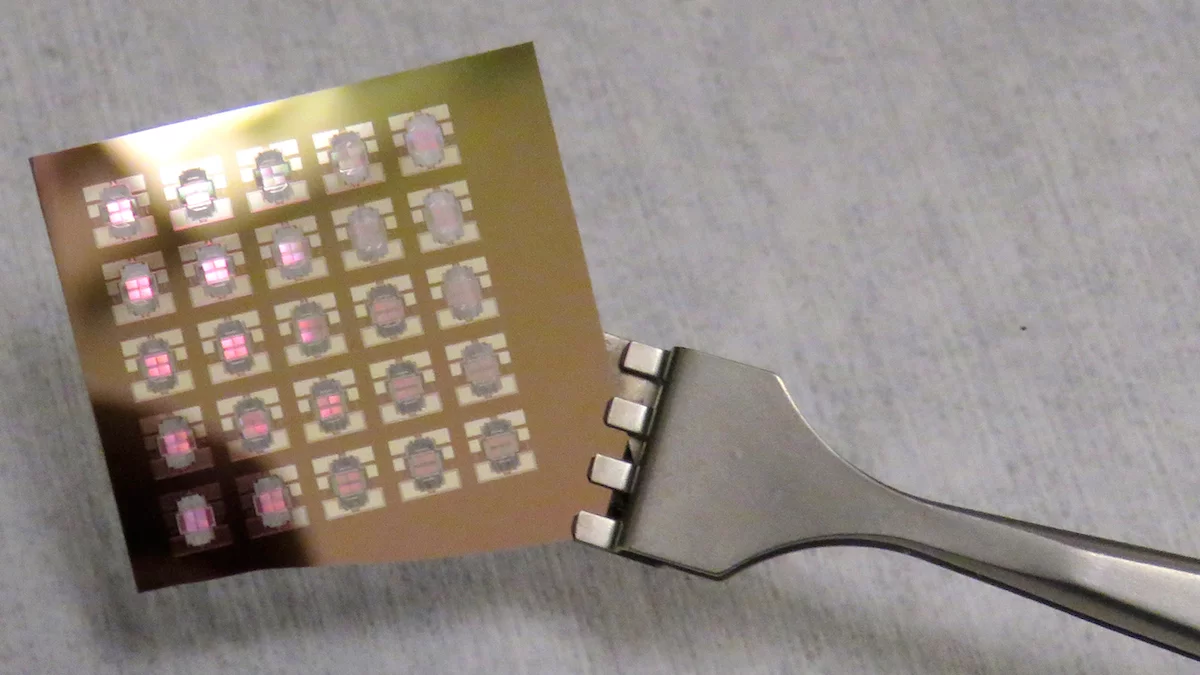

The team's thermal diode is made up of pairs of surfaces, where one is fixed and the other can move towards or away from its stationary partner. That movement is handled automatically by the system to maximize the transfer of heat: when the moving surface is hotter than the still one, it actuates inwards, increasing the rate at which heat moves to the cooler surface.
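To make that one-way behavior concrete, here is a minimal toy sketch in Python. It is not the researchers' model: the gap widths, the coupling constant and the assumption that heat flow is inversely proportional to the gap are all hypothetical, chosen only to show how a gap that closes in one direction produces lopsided heat flow.

```python
# Toy illustration only: every number and the 1/gap relationship are
# assumptions made for this sketch, not values from the study.

def gap_width(t_moving, t_fixed, wide_gap=1.0, narrow_gap=0.5):
    """Gap in arbitrary units: narrow when the moving surface is the hotter one."""
    return narrow_gap if t_moving > t_fixed else wide_gap


def heat_flow(t_moving, t_fixed, coupling=1.0):
    """Toy heat flow between the surfaces, assumed inversely proportional to the gap."""
    gap = gap_width(t_moving, t_fixed)
    return coupling * abs(t_moving - t_fixed) / gap


# Same temperature difference, applied in opposite directions.
forward = heat_flow(t_moving=500.0, t_fixed=400.0)  # moving surface hotter, gap closes
reverse = heat_flow(t_moving=400.0, t_fixed=500.0)  # fixed surface hotter, gap stays wide

print(f"forward: {forward}, reverse: {reverse}")
```

With these made-up numbers, the same temperature difference moves more heat when the moving surface is the hot one, which is the diode-like asymmetry described above.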

In tests at temperatures between 215° and 494° F (102° and 257° C), the thermal diode hit a peak heat transfer rate of about 11 percent, and the team reported that the device was able to function at temperatures as high as 620° F (327° C). Ndao believes that future versions could even operate at up to 1,300° F (704° C), potentially leading to computers that can work under extreme heat conditions.
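For readers who want to check the temperature figures, the short Python sketch below applies the standard Fahrenheit-to-Celsius formula to the four values quoted above. The function name is just an illustrative choice.

```python
def fahrenheit_to_celsius(deg_f):
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (deg_f - 32) * 5 / 9


# Temperatures quoted in the article, in degrees Fahrenheit.
for deg_f in (215, 494, 620, 1300):
    deg_c = round(fahrenheit_to_celsius(deg_f))
    print(f"{deg_f} F is about {deg_c} C")
```

Rounded to the nearest degree, the results match the Celsius figures given in parentheses.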

"We are basically creating a thermal computer," says Ndao. "It could be used in space exploration, for exploring the core of the Earth, for oil drilling, (for) many applications. It could allow us to do calculations and process data in real time in places where we haven't been able to do so before."

Even when they're not running in the molten core of the planet, electronics can overheat and damage themselves if they aren't properly cooled by fans or water circulation systems. As heftier tasks are handed off to computers, more elaborate cooling tactics are needed. To that end, Lockheed Martin has tinkered with embedding microscopic water droplets inside chips, IBM has developed the counter-intuitive technique of cooling with warm water, and Microsoft has turned to the power of the ocean itself to cool a large data center.

Using components like thermal diodes, the researchers say some of that wasted heat could instead be fed back into the system as an alternative energy source, improving its energy efficiency.

"It is said now that nearly 60 percent of the energy produced for consumption in the United States is wasted in heat," says Ndao. "If you could harness this heat and use it for energy in these devices, you could obviously cut down on waste and the cost of energy."

The researchers are now working on improving their thermal diode's efficiency. But since diodes aren't the only component in electronics, a true thermal computer would need the rest of its system to be able to withstand those temperatures as well.

"If we can achieve high efficiency, show that we can do computations and run a logic system experimentally, then we can have a proof-of-concept," says Mahmoud Elzouka, co-author of the study. "(That) is when we can think about the future."

The research was published in the journal Scientific Reports.

Source: University of Nebraska-Lincoln