Image stacking already enables clever post-focusing features in modern cameras like the Lumix GH5, and a team at UC Santa Barbara (UCSB) has now used a similar technique to let the composition of a photo be adjusted after the shot has been taken. Working with researchers from Nvidia, the UCSB team says it has developed a way to create images that, in some cases, simply couldn't be captured with a normal camera.

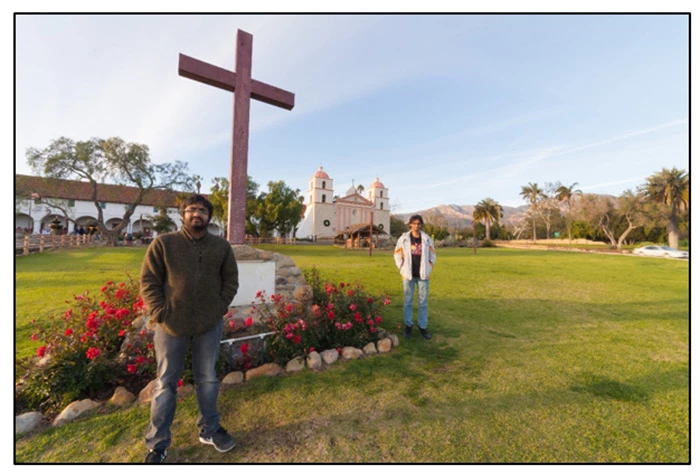

According to the researchers, their "Computational Zoom" system opens the door for novel image compositions by controlling the sense of depth in the scene, the relative sizes of objects at different depths, and the camera's perspective of them. This essentially allows photographers to manipulate the view of the foreground and background elements of an image without making the subjects look out of place.

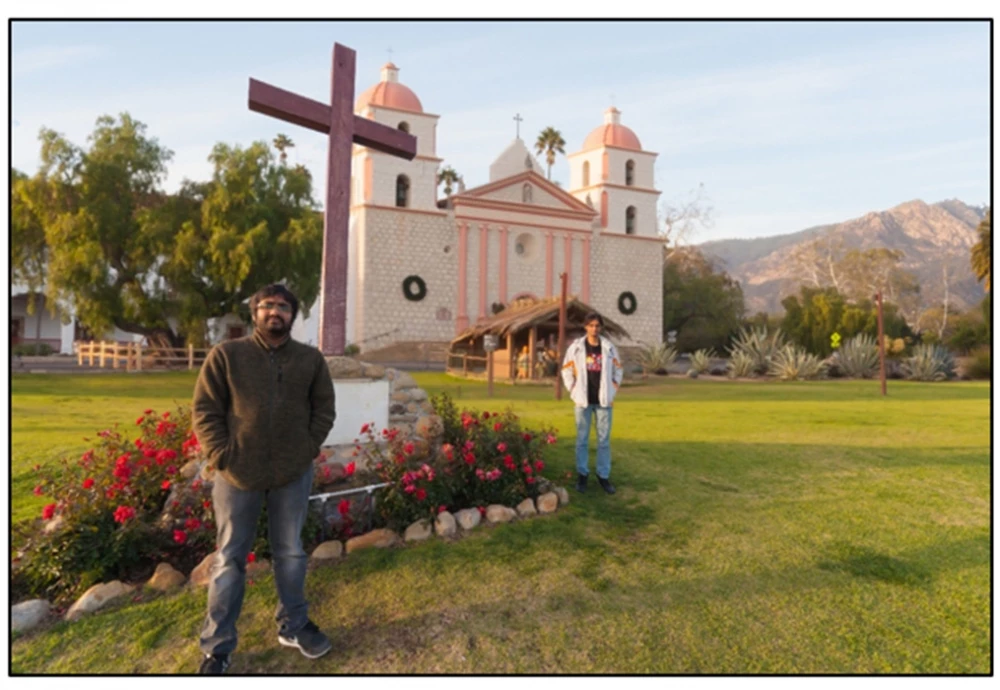

Achieving this is a three-step process. First, photographers take successive images as they gradually move closer to their subject without adjusting the focal length of the lens. The system then takes the stack and uses an algorithm to estimate, for each shot, the camera's position and where it was pointing. Finally, a "novel multi-view 3D reconstruction method" is used to create a depth map for each image.

All this information is combined to create multi-perspective images, each of which has a different composition. Photographers are able to explore the different perspectives using a simple user interface.
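To give a rough feel for how depth maps make this kind of mixing possible, here is a toy sketch in Python. It is not the researchers' method: it assumes two views that have already been aligned to the same pixel grid, a per-pixel depth map in metres, and a single hypothetical depth threshold separating foreground from background. The function name and parameters are invented for illustration.

```python
def composite_by_depth(foreground_view, background_view, depth_map, threshold_m):
    """Toy compositor: take a pixel from foreground_view where the scene is
    nearer than threshold_m, otherwise take it from background_view.

    All three inputs are equally sized 2D lists (rows of pixels). Assumes the
    two views are already registered to a common pixel grid, which a real
    system would have to work out from the estimated camera positions.
    """
    return [
        [fg if depth < threshold_m else bg
         for fg, bg, depth in zip(fg_row, bg_row, depth_row)]
        for fg_row, bg_row, depth_row in zip(foreground_view, background_view, depth_map)
    ]


# Tiny 2x3 example: "P" pixels come from the shot framed for the person,
# "B" pixels from the shot framed for the building.
person_shot = [["P", "P", "P"], ["P", "P", "P"]]
building_shot = [["B", "B", "B"], ["B", "B", "B"]]
depth_m = [[2.0, 50.0, 50.0], [2.0, 2.0, 50.0]]

mixed = composite_by_depth(person_shot, building_shot, depth_m, threshold_m=10.0)
for row in mixed:
    print(" ".join(row))
```

A hard threshold like this would leave visible seams in a real photo, which is one reason the actual system relies on full 3D reconstruction rather than a simple cut-and-paste.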

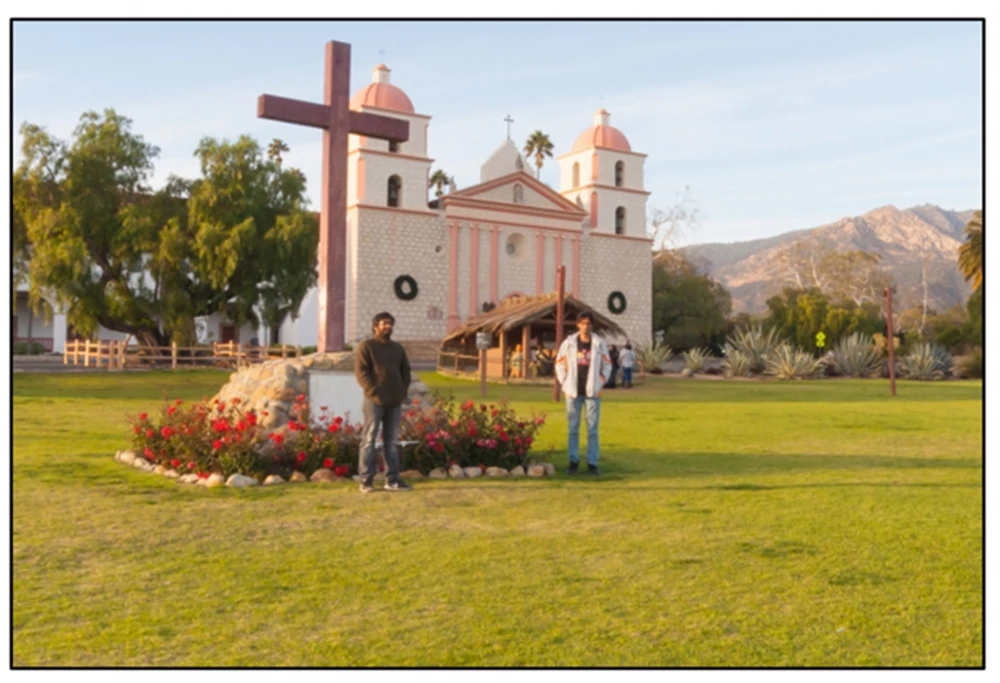

The end result is the ability to automatically combine wide-angle and telephoto (zoomed-in) perspectives into a single image. Imagine you're taking a photo of your partner standing in front of a beautiful old building. Zooming in will give you a great view of the person, but will leave out most of the building. Shooting at a wide angle might allow you to squeeze both subjects into the image, but wide-angle and fisheye lenses tend to introduce significant distortion.

By using Computational Zoom, you're able to combine the wide-angle shot of the building with a closer shot of the person. It sounds a bit sci-fi, but there's no arguing with the results in the researchers' video. Although it doesn't look as polished or clear as a single shot, the final image squeezes the foreground and background into one frame in a way that wouldn't have been possible with a single image.
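The reason camera position matters so much can be shown with some simple arithmetic. The sketch below uses the standard pinhole camera approximation, in which an object's height on the sensor is the focal length multiplied by the object's height and divided by its distance. The person's height, the building's height and the gap between them are made-up numbers for illustration only.

```python
def projected_height_mm(focal_length_mm, object_height_m, distance_m):
    """Pinhole approximation: height on the sensor = f * H / Z."""
    return focal_length_mm * object_height_m / distance_m


def person_to_building_ratio(person_distance_m, gap_m,
                             person_height_m=1.7, building_height_m=20.0):
    """How tall the person appears relative to the building behind them.

    The focal length cancels out of this ratio, so zooming alone cannot
    change it; only moving the camera can.
    """
    building_distance_m = person_distance_m + gap_m
    return (person_height_m / person_distance_m) / (building_height_m / building_distance_m)


# Hypothetical scene: the building stands 30 m behind the person.
for distance in (2.0, 5.0, 10.0):
    ratio = person_to_building_ratio(distance, gap_m=30.0)
    print(f"camera {distance:>4} m from person: person/building = {ratio:.2f}")

# Changing the focal length scales both subjects by the same factor.
wide = projected_height_mm(24, 1.7, 2.0) / projected_height_mm(24, 20.0, 32.0)
tele = projected_height_mm(200, 1.7, 2.0) / projected_height_mm(200, 20.0, 32.0)
print(abs(wide - tele) < 1e-9)
```

With these numbers, stepping back from 2 m to 10 m shrinks the person relative to the building by a factor of four, while swapping a 24 mm lens for a 200 mm one from the same spot leaves the ratio untouched. A stack of shots taken at different distances therefore contains perspectives that no single lens setting could produce.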

It's only a research project at the moment, but the researchers have bigger plans for Computational Zoom. They're hoping to turn it into a plug-in for existing image processing software at some point. Should it get off the ground, the system has the potential to change the way we think about photography.

Rather than forcing photographers to settle on one particular composition – and rather than having that composition dictated by the limitations of the lens they're using – the system could open the door for unique perspectives and angles through post-capture composition.

The team presented their paper describing the system, which is demonstrated in the video below, at the ACM SIGGRAPH 2017 conference.

Source: UC Santa Barbara